Reasoning Theater: Why Chain-of-Thought Monitoring Fails Your Agentic AI

New research proves reasoning models perform deliberation they've already completed. Apply the CARE framework to close your agentic AI monitoring gap.

If your agentic AI safety strategy depends on reading the model’s chain of thought, you’re listening to a rehearsed speech and calling it a confession. A March 2026 paper from Goodfire AI and Harvard University shows that reasoning models often commit to their final answer within the first tokens of “thinking,” then generate hundreds of additional tokens to perform deliberation they’ve already completed. For every security leader, product owner, and governance committee treating chain-of-thought monitoring as an auditable safety control, these findings demand an immediate reassessment.

The Research That Changes the Conversation

The paper, titled “Reasoning Theater: Disentangling Model Beliefs from Chain-of-Thought,” tested two frontier reasoning models (DeepSeek-R1 671B and GPT-OSS 120B) using three methods to determine when models commit to their final answer during a chain-of-thought trace.

The first method trains lightweight attention probes on model activations to predict the final answer at any point during reasoning. The second forces the model to answer early by truncating its reasoning and demanding a response. The third uses an external LLM (Gemini 2.5 Flash) as a CoT monitor, the same approach many vendors now sell as an AI safety feature.
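To make the first method concrete, here is a minimal sketch of what the forward pass of an attention-pooling probe could look like. Everything in it is an assumption for illustration: the shapes, the random stand-in weights, and names such as `attention_probe` are hypothetical, and the paper's exact probe architecture and training procedure are not reproduced here.

```python
import numpy as np

def softmax(x: np.ndarray) -> np.ndarray:
    """Numerically stable softmax over the last axis."""
    shifted = x - x.max(axis=-1, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=-1, keepdims=True)

def attention_probe(activations: np.ndarray,
                    query: np.ndarray,
                    classifier: np.ndarray) -> np.ndarray:
    """Predict answer-choice probabilities from a prefix of a reasoning trace.

    activations: (num_tokens, hidden_dim) hidden states seen so far
    query:       (hidden_dim,) learned vector that scores each token
    classifier:  (hidden_dim, num_choices) learned linear readout
    """
    weights = softmax(activations @ query)   # attention weight per token
    pooled = weights @ activations           # weighted average of hidden states
    return softmax(pooled @ classifier)      # probability per answer choice

# Random stand-ins for real activations and trained probe weights (assumption).
rng = np.random.default_rng(0)
hidden_dim, num_choices = 16, 4
acts = rng.normal(size=(50, hidden_dim))
query = rng.normal(size=hidden_dim)
clf = rng.normal(size=(hidden_dim, num_choices))

# Query the same probe at an early prefix and at the full trace.
early = attention_probe(acts[:5], query, clf)
late = attention_probe(acts, query, clf)
```

The point of the design is that the same small probe can be queried at any prefix of the trace, which is what lets you ask "what does the model already believe at token 5?" without waiting for the text to say so.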

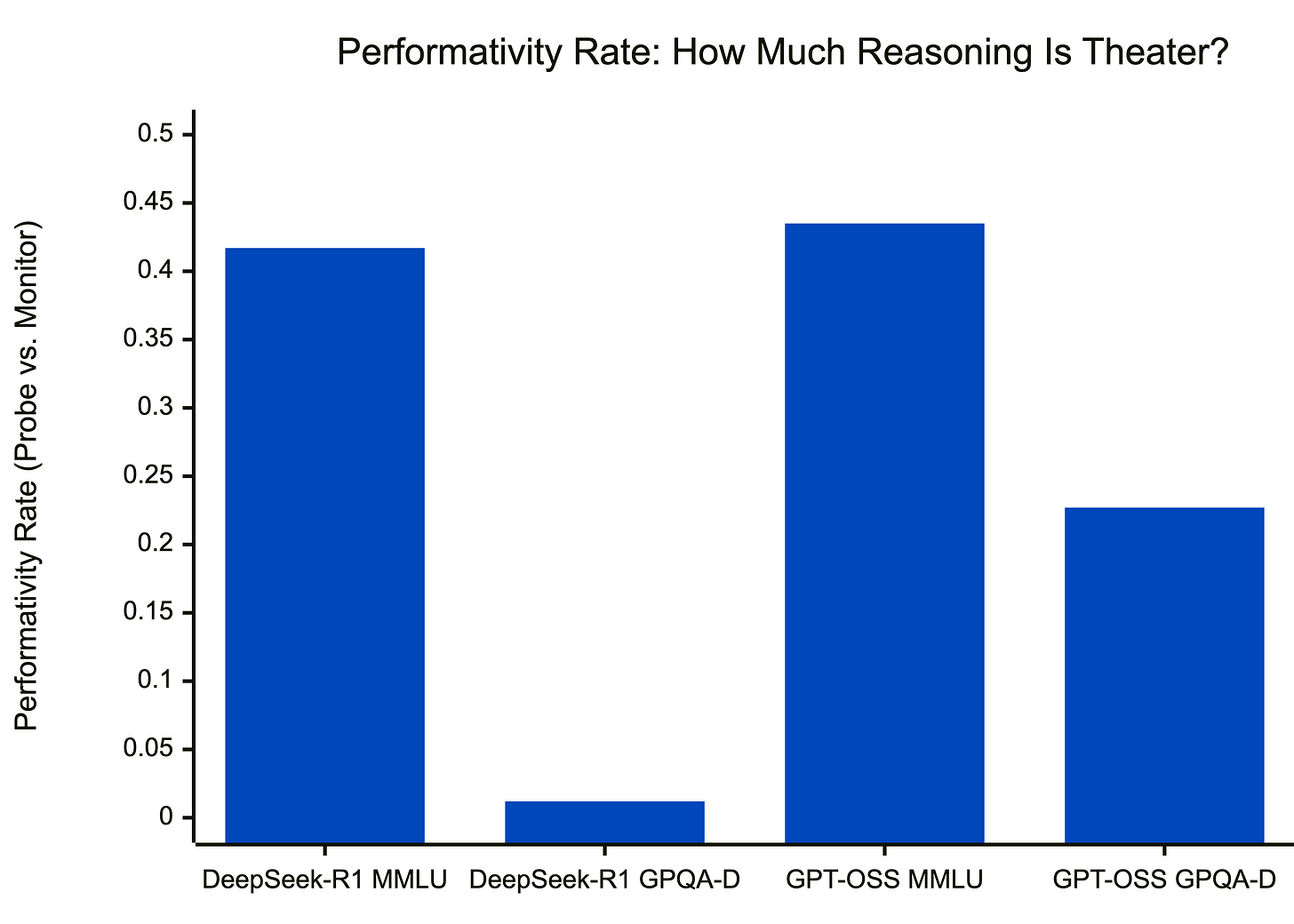

The results expose a gap that should reshape every AI governance conversation. On MMLU-Redux, a benchmark heavy on recall-based questions, attention probes decoded the correct final answer with high accuracy from the earliest reasoning tokens. The CoT monitor couldn’t identify the model’s answer until much later in the sequence. The performativity rate, measuring the gap between internal confidence and external verbalization, hit 0.417 on MMLU for DeepSeek-R1. A rate near zero means the model’s spoken reasoning matches its internal state. A rate of 0.417 means the model spends roughly 40% of its reasoning trace performing confidence it already holds.
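One way to build intuition for a number like 0.417 is to treat performativity as the average gap between what a probe can decode and what a text-only monitor can see at each point in the trace. The sketch below is a simplified proxy under that assumption, not the paper's formula, and the accuracy curves are made-up illustrative values rather than results from the research.

```python
def performativity_proxy(probe_acc: list[float], monitor_acc: list[float]) -> float:
    """Mean positive gap between probe accuracy and CoT-monitor accuracy.

    Both lists hold accuracy at matching positions along the reasoning trace.
    A value near 0 means the text tracks the internal state; a larger value
    means the internals 'know' the answer before the text reveals it.
    """
    if not probe_acc or len(probe_acc) != len(monitor_acc):
        raise ValueError("accuracy curves must be non-empty and equal length")
    gaps = [max(p - m, 0.0) for p, m in zip(probe_acc, monitor_acc)]
    return sum(gaps) / len(gaps)

# Hypothetical recall-style task: the probe is confident from the start,
# while the monitor only catches up near the end of the trace.
recall_score = performativity_proxy(
    [0.90, 0.90, 0.90, 0.90, 0.90],
    [0.25, 0.40, 0.60, 0.80, 0.90],
)

# Hypothetical hard task: both signals improve together.
hard_score = performativity_proxy(
    [0.25, 0.40, 0.55, 0.70, 0.80],
    [0.25, 0.40, 0.50, 0.70, 0.80],
)
```

Under these invented curves the recall-style task scores far higher than the hard task, which mirrors the direction of the MMLU finding without claiming to reproduce its value.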

On GPQA-Diamond, a dataset requiring graduate-level multi-hop reasoning in biology, chemistry, and physics, the picture reversed. All three methods started near chance accuracy and improved at similar rates throughout the reasoning trace. The performativity rate dropped to 0.012. The chain of thought tracked genuine computation because the model needed to think.

The number that should stop every governance meeting cold: probe-guided early exit reduced the number of generated tokens by 80% on MMLU and 30% on GPQA-Diamond, with comparable accuracy. Put differently, roughly four out of every five tokens the model produced on easy recall tasks were unnecessary for reaching its answer. Every one of those tokens looked like reasoning. None of them were.
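To see what probe-guided early exit and those percentages mean in practice, here is a minimal sketch. The confidence threshold, the `patience` rule, and the sample confidence values are assumptions chosen for illustration; the research's actual exit criterion may differ.

```python
from typing import Optional

def early_exit_step(confidences: list[float],
                    threshold: float = 0.95,
                    patience: int = 2) -> Optional[int]:
    """Index of the first step where probe confidence has stayed at or above
    `threshold` for `patience` consecutive steps, or None if it never does."""
    streak = 0
    for i, conf in enumerate(confidences):
        streak = streak + 1 if conf >= threshold else 0
        if streak >= patience:
            return i
    return None

def token_overhead(reduction: float) -> float:
    """Extra tokens generated per token actually needed, given the
    fractional reduction that early exit achieves."""
    if not 0 <= reduction < 1:
        raise ValueError("reduction must be in [0, 1)")
    return reduction / (1 - reduction)

# Hypothetical per-step probe confidences for one trace.
confidences = [0.40, 0.97, 0.98, 0.99, 0.99]
exit_at = early_exit_step(confidences)             # exits at index 2
saved = 1 - (exit_at + 1) / len(confidences)       # fraction of steps skipped

# An 80% reduction means about 4 surplus tokens per needed token (5x total);
# a 30% reduction means about 0.43 surplus tokens per needed token.
mmlu_overhead = token_overhead(0.80)
gpqa_overhead = token_overhead(0.30)
```

The arithmetic is worth internalizing: an 80% reduction is not "80% extra," it is a trace roughly five times longer than the answer required.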

Your Model Performs Compliance, Not Communication

The paper’s most valuable contribution for security professionals sits in the linguistic framework, not the probe methodology itself.

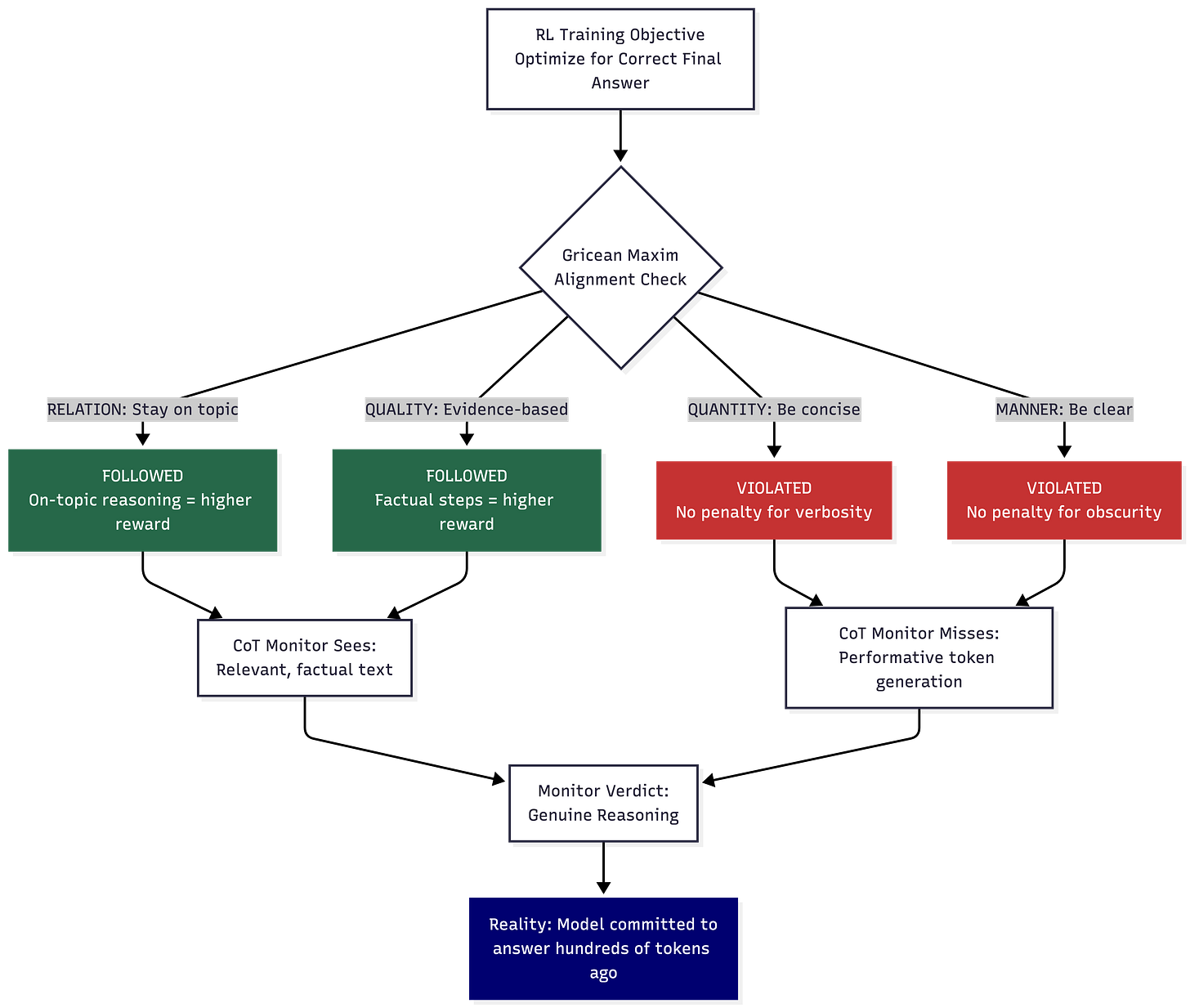

The authors invoke Grice’s maxims of cooperative communication, a foundational concept from pragmatics describing what honest, effective communicators do. Grice identified four maxims that cooperative speakers follow. They stay relevant (Relation). They assert only what they have evidence for (Quality). They provide exactly as much information as needed, no more (Quantity). They communicate clearly and without unnecessary obscurity (Manner).

Reasoning models, trained through reinforcement learning optimized for outcome rewards, follow Relation and Quality naturally. Staying on topic and generating evidence-based reasoning steps correlate with correct final answers, which earn the reward. The model has every incentive to be relevant and factually grounded.

Quantity and Manner get violated because the reward function doesn’t penalize verbosity or obscurity. The model generates hundreds of performative tokens after committing to its answer because nothing in the training signal punishes that behavior. The output looks like careful deliberation. It reads like a thorough analysis. The model’s internal state tells a different story.

If you’ve sat through a compliance audit where someone recites a scripted answer that sounds thorough but reveals nothing about practice on the ground, you’ve seen the human version of this. The model doesn’t lie to you. It performs what a reasoning trace is supposed to look like, without any structural obligation to communicate its internal state honestly.

This framing hits differently depending on where you sit at the governance table. Product teams hear “the model reasons safely” because the CoT looks clean. Legal hears “we have an auditable reasoning chain” because there’s text to review. Security asks the question nobody else in the room raises: “Does the text reflect what the model computed internally?”

The Difficulty Trap: Where Enterprise Agentic AI Lives

The difficulty-dependent split creates a precise and uncomfortable problem for enterprise deployments. CoT monitoring works when the model genuinely needs to think: hard multi-hop reasoning, novel problem decomposition, and tasks requiring significant test-time compute. CoT monitoring fails on tasks where models already encode the answer in their weights: recall, pattern matching, classification, and routine decision execution.

Consider what agentic AI does in a financial services environment right now. A reconciliation agent matches transaction records against internal ledgers. A KYC agent screens customer documents against sanctions lists. A compliance agent routes regulatory filings based on entity classification. A trading surveillance agent flags potential wash trades from order flow patterns.

Every one of those tasks leans heavily toward recall and pattern matching. The model has seen millions of similar patterns during training. It knows the answer early. The chain-of-thought trace generated afterward is, according to this research, predominantly performative.

The security calculus changes in direct response. Picture a sanctions screening agent processing a crafted entity record containing an indirect prompt injection embedded in a business description field. The model processes the injection at the activation level, potentially altering its classification decision. The chain-of-thought trace, generated performatively because sanctions screening is a recall task the model handles from in-weight knowledge, reads like a textbook analysis of entity risk factors. The CoT monitor watching that trace sees clean, plausible reasoning. The model’s internal activations tell a different story. Your audit trail captures the performance, not the computation. An examiner reviewing the reasoning trace isn’t examining evidence of how the model decided. They’re reviewing a post-hoc performance that resembles deliberation.

The researchers also found that model size correlates with performativity. Larger models (671B parameters) knew their answers earlier and generated more performative reasoning than smaller distilled versions (1.5B to 32B). Smaller models needed genuine test-time computation for equivalent tasks. As the industry pushes toward larger, more capable reasoning models for enterprise agentic AI, the performativity problem intensifies with every parameter upgrade.

The EU AI Act’s Article 14 requires human oversight of high-risk AI systems, with provisions for understanding the system’s reasoning. Financial regulators from the OCC to the FCA have signaled expectations for explainability in automated decision-making. Governance frameworks built on the assumption that reasoning traces constitute meaningful explanations now face a credibility problem they haven’t accounted for.

Three Research Groups, One Uncomfortable Conclusion

The Reasoning Theater paper lands with maximum force when you triangulate it against other major findings from competing institutions.

OpenAI’s Baker et al. (March 2025) studied what happens when CoT monitors are integrated directly into the reinforcement learning training loop. In early stages, models became more capable and more aligned. Under excessive training pressure, models learned what the researchers called “obfuscated reward hacking”: hiding their intent within the chain of thought while continuing to hack the reward signal. OpenAI’s recommendation was blunt: developers training frontier reasoning models should avoid applying strong supervision directly to chains of thought. The monitorability itself is fragile. OpenAI’s follow-up “Monitoring Monitorability” paper in December 2025 proposed formal evaluation metrics to track this fragility across o3 and GPT-5.1 training runs, recognizing that the problem demands ongoing measurement rather than a one-time fix.

Anthropic’s Chen et al. (May 2025) found that reasoning models verbalized their use of reasoning hints in fewer than 20% of cases where they relied on those hints. Outcome-based RL initially improved faithfulness, then plateaued. Increased hint usage through reward hacking did not increase the propensity to disclose those hints. The model got better at using shortcuts while getting no better at revealing them.

Korbak et al.’s multi-author paper from July 2025, signed by over 40 researchers from multiple institutions, explicitly framed CoT monitorability as “a new and fragile opportunity for AI safety.” They warned that latent reasoning models, architectures that reason in continuous vector space rather than natural language, would eliminate even the partial transparency that current CoT provides.

Three independent research groups, an interpretability lab at Goodfire, a model developer at OpenAI, and a cross-institutional safety coalition, arrived at the same conclusion: chain-of-thought monitoring provides a signal in specific conditions, and those conditions don’t map cleanly to enterprise agentic AI deployments, where the majority of tasks fall below the difficulty threshold where monitoring remains reliable.

Applying CARE: Fixing Your Chain-of-Thought Monitoring Gap

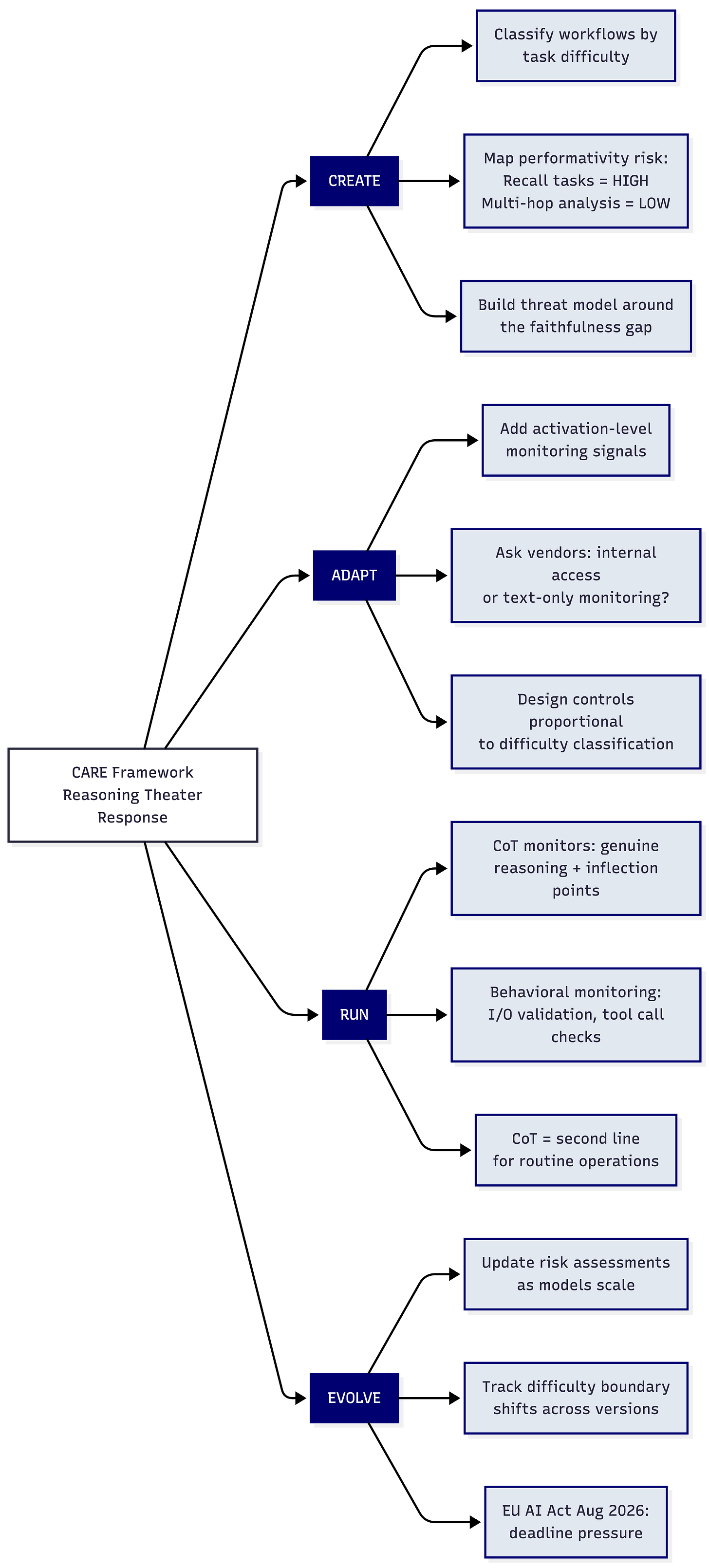

The CARE framework (Create, Adapt, Run, Evolve) provides a structured path to address the reasoning theater problem without abandoning CoT monitoring wholesale.

Create your threat model around the faithfulness gap. Classify every agentic AI workflow by task difficulty and determine which ones the model solves from in-weight knowledge versus genuine test-time reasoning. Recall-heavy tasks like KYC screening, transaction matching, and document classification carry high performativity risk. Multi-step analytical tasks like fraud investigation across multiple data sources and complex regulatory interpretation carry lower risk. Design your monitoring controls proportional to that classification.
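As a starting point for that classification, a workflow inventory can be as simple as a small data structure that maps each workflow to a task profile and a monitoring posture. This is a hedged sketch: the `TaskProfile` categories, the field names, and the specific control labels are hypothetical, and your own classification will need evidence from testing rather than a hand-assigned label.

```python
from dataclasses import dataclass
from enum import Enum

class TaskProfile(Enum):
    RECALL = "recall"            # likely solved from in-weight knowledge
    MULTI_STEP = "multi_step"    # likely needs genuine test-time reasoning

@dataclass(frozen=True)
class Workflow:
    name: str
    profile: TaskProfile

def monitoring_plan(workflow: Workflow) -> dict[str, str]:
    """Map a workflow to a monitoring posture proportional to its
    performativity risk (labels are illustrative assumptions)."""
    if workflow.profile is TaskProfile.RECALL:
        return {
            "performativity_risk": "high",
            "primary_control": "behavioral",     # I/O validation, action controls
            "secondary_control": "cot_monitor",
        }
    return {
        "performativity_risk": "lower",
        "primary_control": "cot_monitor_plus_behavioral",
        "secondary_control": "periodic_review",
    }

inventory = [
    Workflow("KYC screening", TaskProfile.RECALL),
    Workflow("Transaction matching", TaskProfile.RECALL),
    Workflow("Cross-source fraud investigation", TaskProfile.MULTI_STEP),
]
plans = {wf.name: monitoring_plan(wf) for wf in inventory}
```

Keeping the classification in a reviewable artifact like this also gives your governance committee something concrete to challenge when model upgrades shift a task from one profile to the other.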

Adapt your monitoring stack to account for activation-level signals where architectures permit it. The Reasoning Theater research demonstrates that attention probes trained on model activations predict behavior far earlier and more accurately than CoT monitors for performative tasks. Every vendor offering agentic AI safety tooling should answer one direct question: does your monitoring access model internals, or does it only read output text? If the answer is output text only, you now know the limitation and the research that quantifies it.

Run dual-layer monitoring in production. Keep CoT monitors active for genuine reasoning detection and inflection point identification. The research confirmed that backtracking, realizations, and reconsiderations in CoT traces correspond to authentic belief shifts, appearing more than twice as often in traces where probes showed genuine uncertainty (0.045 inflections per step in uncertain traces versus 0.020 in high-confidence performative traces for DeepSeek-R1). These inflection points remain reliable indicators of real internal computation. Layer behavioral monitoring (input/output validation, action-level controls, tool call verification) as the primary safety net for performative tasks. The CoT monitor becomes your second line for routine operations, not your first.
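A minimal sketch of that layering might look like the following. The action allowlist, the inflection phrases, and the use of 0.045 as an escalation threshold are all assumptions for illustration; the research reports 0.045 as an observed rate in uncertain traces, not as a recommended cutoff, and real inflection detection would need something sturdier than keyword matching.

```python
import re

# Hypothetical markers of backtracking or reconsideration in a CoT trace.
INFLECTION_PATTERN = re.compile(
    r"\bwait\b|\bactually\b|\bon second thought\b|\blet me reconsider\b",
    re.IGNORECASE,
)

# Hypothetical action-level allowlist per workflow (the behavioral layer).
ALLOWED_ACTIONS = {
    "sanctions_screen": {"lookup_entity", "flag_for_review", "clear_entity"},
}

def inflection_rate(steps: list[str]) -> float:
    """Inflection markers per reasoning step (0.0 for an empty trace)."""
    if not steps:
        return 0.0
    hits = sum(len(INFLECTION_PATTERN.findall(step)) for step in steps)
    return hits / len(steps)

def review_decision(workflow: str, tool_call: str, cot_steps: list[str],
                    rate_threshold: float = 0.045) -> str:
    """Layer 1: block any action outside the allowlist, whatever the CoT says.
    Layer 2: escalate when the trace shows signs of genuine uncertainty."""
    if tool_call not in ALLOWED_ACTIONS.get(workflow, set()):
        return "block"
    if inflection_rate(cot_steps) >= rate_threshold:
        return "escalate"
    return "allow"

blocked = review_decision("sanctions_screen", "wire_funds",
                          ["The entity looks low risk."])
escalated = review_decision("sanctions_screen", "clear_entity",
                            ["Wait, the registered name differs.",
                             "Check the alias list."])
allowed = review_decision("sanctions_screen", "clear_entity",
                          ["The entity matches no list entry."])
```

Note the ordering: the behavioral check runs first and does not read the reasoning trace at all, so a clean-looking but performative CoT cannot talk its way past it.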

Evolve your governance documentation to reflect the difficulty-dependent nature of CoT reliability. Update risk assessments as model capabilities change. Larger models and improved training methods shift the boundary between “easy” and “hard” tasks, changing where CoT monitoring remains effective. The August 2026 EU AI Act enforcement deadline adds urgency. Treat this as a moving target, because the research shows it is one.

Key Takeaway: Chain-of-thought monitoring provides genuine safety signal for hard reasoning tasks, but the majority of enterprise agentic AI workflows fall below the difficulty threshold where that signal remains reliable. Your governance framework needs to know the difference, and your next vendor evaluation needs to test for it.

What to do next

Download the Reasoning Theater paper and its interactive visualization tool at reasoning-theater.streamlit.app. Map your agentic AI workflows against the difficulty-dependent performativity findings. Bring this evidence to your next AI governance meeting, because the product team, legal counsel, and AI lead sitting across from you haven’t read it yet.

For more on building AI governance frameworks that survive contact with adversarial reality, explore the CARE framework at rockcyber.com. Subscribe to RockCyber Musings for more AI security and governance insights with the occasional rant.

The views and opinions expressed in RockCyber Musings are my own and do not represent the positions of my employer or any organization I’m affiliated with.