Open-Weight Models Eat Closed Governance: The Half-Perimeter Problem

Closed-vendor AI governance breaks at the open-weight boundary. Sign the weights, build the runtime perimeter. We walk the gap and the build.

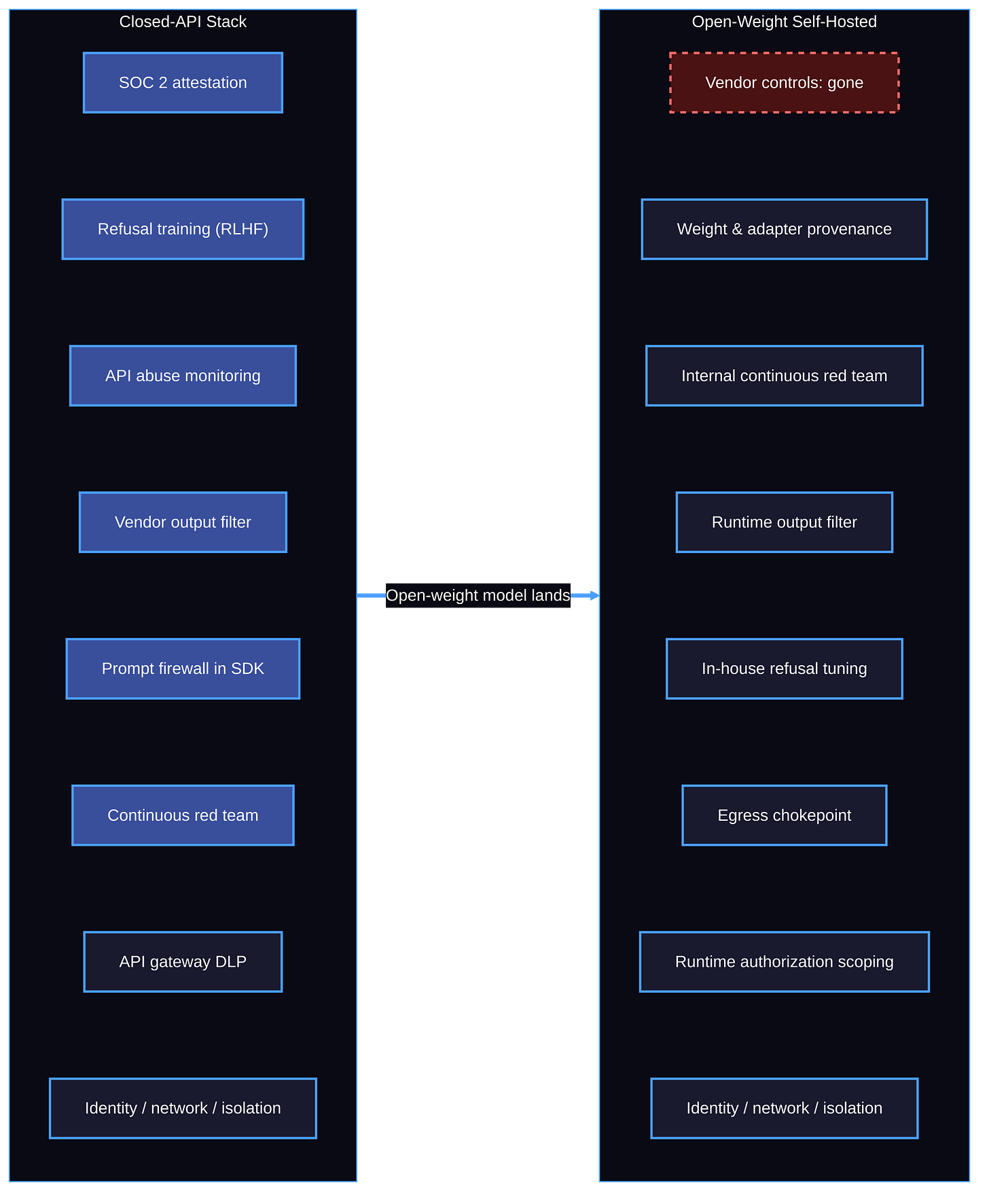

Open-weight reasoning models are landing in enterprise production, and the closed-vendor governance you bought doesn’t transfer with them. “Half-perimeter” is rhetorical; the real number depends on which controls you bought, but the point holds. The day a competent open-weight reasoning model runs on your hardware, the AI-specific governance you bought from your closed vendor stops covering part of the stack. The rest of this post walks the gap and the build.

The Vendor’s Own Words

OpenAI shipped gpt-oss-120b and gpt-oss-20b last year. Both are under Apache 2.0, and both are downloadable from Hugging Face. The 120b runs on a single 80GB GPU. In the model card, OpenAI’s own safety team admits what every CISO should already suspect. Once the weights ship, OpenAI cannot “implement additional mitigations or to revoke access.”

It’s the model provider’s own framing. It’s not me opining. Open-weight is a different risk profile from closed-API, by the model provider’s own assessment. The vendor can’t patch your inference cluster. The vendor can’t revoke a key that doesn’t exist. The vendor can’t run server-side abuse classifiers on traffic the vendor never sees. Everything that lived on the vendor side of the perimeter now lives on yours.

This is not a DeepSeek-versus-American-models story. It’s a closed-API-versus-open-weight story. Llama 3.3 70B (Meta), Qwen 3 32B (Alibaba), Mistral Magistral, and gpt-oss-120b sit on the same side of the boundary. The boundary is wherever the weights stop being someone else’s problem.

What Closed-Vendor Governance Bought You

Walk through what was on the bill of materials when you stood up your closed-API AI program. Oh, that’s right, you never did… but let’s pretend you did. You probably evaluated vendor-attested compliance, usually wrapped in a SOC 2 Type II report and a data processing addendum. DLP is integrated at the API gateway, watching prompts in flight. Output filtering runs on the vendor side, refusing to ship CBRN-adjacent content out of the model. Prompt firewall logic is embedded in the vendor SDK and patched without you redeploying. Vendor red teaming is on a continuous cadence. ToS enforcement occurs when an account misbehaves.

That stack assumed one thing. That a vendor sat on the other end of the inference call. Open-weight self-hosting moves every one of those controls in-house, with no shared customer base to underwrite the cost.

What does transfer? Network egress controls, identity at the runtime boundary, sandbox isolation, and supply-chain provenance for the model weights and fine-tunes. Notice what those have in common. None of them are AI-specific. They were always there. They’re the controls you applied to every other service you ran. Losing the AI-specific layer doesn’t break the non-AI controls. It does mean the only thing standing between a self-hosted reasoning model and a bad day is the perimeter you built for everything else.

Read your closed-vendor MSA carefully. The reps and warranties typically carve out third-party model behavior, hallucinations, and adversarial misuse. The vendor warrants infrastructure availability and indemnifies IP claims. The vendor doesn’t warrant safe model output. The “governance” part of vendor-attested compliance was always thinner than the SOC 2 cover suggested. Self-hosting strips even the thin part.

Refusal Training Is Now an In-House Problem

Vendor refusal training is the AI-specific control most enterprise teams over-trust. The research breaks the over-trust hard.

The Badllama 3 paper (arXiv 2407.01376) showed safety fine-tuning gets removed from Llama 3 8B in five minutes on a single A100 GPU for under fifty cents. The 70B model goes in 45 minutes for under three dollars. The same paper notes the attack runs on free Google Colab for the 8B variant. FAR.AI’s “Illusory Safety” research extended the result. Pre-fine-tune refusal rates near 100% across DeepSeek-R1, GPT-4o, Gemini 1.5 Pro, and Claude 3 Haiku dropped under 20% post-fine-tune. Harmfulness scores climbed past 80%.

The R1 red-team picture is even worse on the model itself, before any attacker fine-tuning. Cisco / Robust Intelligence reported a 100% attack success rate on 50 random HarmBench prompts against R1, while OpenAI o1 rejected every test in a parallel Holistic AI evaluation. Qualys TotalAI found R1’s distilled 8B variant failed 58% of 885 attempts across 18 jailbreak categories. Promptfoo put failures over 60% on prompts, including biological and chemical weapons. KELA jailbroke R1 to produce ransomware development steps and instructions for toxins and explosive devices.

OpenAI’s own approach to gpt-oss is the strongest signal that adversarial fine-tuning is the real threat model. The model card describes the adversarial fine-tuning of gpt-oss-120b under the Preparedness Framework prior to release. OpenAI’s Safety Advisory Group concluded the adversarially fine-tuned model didn’t reach “High” capability in Biological and Chemical Risk or Cyber risk. Read the implication closely. The model provider treats fine-tune-stripped safety as the baseline release condition the model must meet. The deployer running fine-tunes downstream gets no equivalent gate.

OpenAI knows this. It’s why gpt-oss-safeguard shipped on October 29, 2025: open-weight reasoning models for safety classification, designed for developers to operate as a defense-in-depth layer. Llama Guard 3, Prompt Guard, and Code Shield exist for the same reason. The vendor is shipping you the components. Components are not the same as a service. You operate them, tune them, monitor them, retrain them when the policy changes, and absorb the latency. OpenAI’s own gpt-oss-safeguard report names the constraint: reasoning-based classifiers add compute and latency that limit large-scale real-time use.

The math is brutal. The model weights are free. The runtime safety pipeline is not.

The Frameworks Describe the Gap. They Don’t Close It.

NIST AI RMF 1.0 plus the GenAI Profile (NIST AI 600-1, July 2024) plus the GPAI/Foundation Models Profile extension (arXiv 2506.23949) names training data audits (Manage 1.3, Measure 2.8) and model weight protection (Measure 2.7). Voluntary. The CSA NIST AI RMF Agentic Profile draft is candid about the bigger problem. It states plainly that earlier RMF documents did not contemplate “agents that acquire tool-use capabilities and execute autonomously in live production environments.”

OWASP Top 10 for LLM Applications 2025 LLM03 is the most explicit primary-source statement of the half-perimeter problem. The category description is direct: model cards offer no guarantees of provenance, malicious LoRA adapters compromise base models in collaborative environments, and on-device LLMs increase the attack surface. The OWASP Agentic Top 10, released December 10, 2025, adds ASI01 (Agent Goal Hijack) and ASI03 (Identity and Privilege Abuse) as runtime-boundary problems on self-hosted stacks.

ASI01 and ASI03 are not abstract. ASI01 shows up when prompt injection redirects an agent’s plan, and the closed-vendor refusal layer is gone. ASI03 shows up when the agent’s runtime authorization is broader than the task requires, because no vendor SDK is scoping the call for you anymore. Both problems live at the runtime boundary the vendor used to backstop.

EU AI Act Article 53(2) is the regulatory expression of the gap. Open-source GPAI models get a carve-out from technical documentation and downstream-information obligations, provided they’re released under a free open license, weights are public, and the model isn’t monetized. The carve-out vanishes at the Article 51 systemic-risk threshold of 10^25 FLOPs. Llama 3.3 70B, Qwen 3 32B, Mistral Magistral, and most enterprise-deployed open-weight reasoning models sit well below that threshold. They get the carve-out. They impose downstream obligations on enterprise deployers under Article 25(2) when significant modifications happen, a category that catches LoRA fine-tunes. Most teams running fine-tunes don’t know the clause exists. Enforcement begins August 2, 2026.

ISO 42001 mandates AIMS scope definition, third-party supplier oversight, and 38 Annex A controls. The gap there is structural. The open-weight model dropped from Hugging Face is not a “supplier” in the contractual sense. There’s no audit clause, no security questionnaire, no MSA. The standard tells you to define your AIMS scope. It doesn’t prescribe specific runtime-boundary controls for self-hosted foundation models.

Build the Runtime Perimeter

Frameworks describe the gap. Architecture closes it. The work to close it is described in the Huang and Lambros (yes, “this” Lambros) AAGATE paper (arXiv:2510.25863v2, November 3, 2025). AAGATE is a Kubernetes-native control plane that operationalizes NIST AI RMF for self-hosted agentic AI. The reference architecture hosts the open-weight model on Ollama at Layer 1 of the MAESTRO threat-model stack, which is the design assumption built in: the protected stack is “DeepSeek, Qwan, LLAMA, OSS” running on your hardware.

Four things transfer regardless of which control plane you adopt.

First, treat weights as supply-chain artifacts. AAGATE enforces SLSA L3, Cosign keyless signing on every OCI image, and an ArgoCD admission controller that rejects unsigned manifests at the gate. Whichever your path, you need signed weights, signed adapters, and a cluster-side admission policy that refuses to load anything unsigned. The Hugging Face nullifAI incident in February 2025, where ReversingLabs found malicious pickle files evading Picklescan via 7z compression and broken pickle deserialization, is the case study. Picklescan logs an error. The reverse-shell payload runs anyway.

Second, inventory open-weight runtimes alongside closed-API endpoints. AAGATE leverages the Agent Naming Service (ANS), and it registers every agent with a Decentralized Identifier and a SPIFFE certificate. You don’t need the blockchain layer. You do need a CMDB row for every Ollama cluster, every fine-tune, every adapter, with model SHA, lineage, and license tier captured. If your AI inventory has a row for the OpenAI tenant but no row for the GPU cluster running your fine-tuned Llama, the audit is incorrect.

Third, build authorization scope into the runtime, not the vendor SDK. AAGATE’s OAuth Relay translates abstract agent capabilities into ephemeral, narrowly scoped, purpose-bound credentials per side effect. Other architectures will name the same thing differently. The control matters since every external action an agent takes funnels through a policy-enforced single chokepoint with allow-listing, rate limiting, and cryptographic logging. AAGATE calls it the Tool-Gateway. AI gateway products commercialize the same pattern. Pick one.

Fourth, run your own evals because the vendor isn’t running them for you. AAGATE’s Janus Shadow-Monitor-Agent provides continuous, pre-execution adversarial evaluation in-loop, tied to a Governing-Orchestrator Agent executing a millisecond kill-switch when AIVSS scoring and SSVC decision logic flag a critical incident. The adversarial layer can also take the form of a parallel classifier, an internal red team, or any continuous evaluation pattern that mirrors what the vendor was running server-side. The pattern is non-negotiable. The product is.

These four moves are the architectural rebuttal to the half-perimeter. The perimeter you bought was always going to end at the runtime boundary. The runtime boundary is now your problem to instrument.

Operational reality matters here. The inference stack you’re protecting is Ollama, vLLM, SGLang, or llama.cpp. None of them ship with vendor-grade telemetry. Your container hosts a probabilistic system with stateless calls and no support contract. When an attacker fine-tunes a copy of your weights and slips it into your registry, there is no support call to escalate. There is only the runtime perimeter you built before the incident.

Key Takeaway: Closed-vendor governance was the AI-specific half you didn’t have to build. Open-weight reasoning models in production change that. Inventory the runtimes, sign the weights, scope the runtime authorization, and run your own evals. The vendor isn’t doing it for you anymore.

What to do next

If you’re approving an open-weight pilot this quarter, demand four things on the architecture review before the GPUs land. First, model SHA and adapter lineage in the CMDB on day one. Second, an egress chokepoint with input/output sanitization and policy-enforced allow-lists. Third, supply-chain controls (signed weights, SLSA-grade provenance, admission control rejecting unsigned). Fourth, a continuous internal evaluation loop on every high-risk agent.

The CARE framework (Create, Adapt, Run, Evolve) applies the same structure to AI security program design. The CISO Evolution covers the executive judgment side of decisions like this one. The AAGATE paper (arXiv 2510.25863v2) is the open-source reference architecture if you want to start from running code.

👉 Visit RockCyber.com to learn more about how we can help you in your traditional Cybersecurity and AI Security and Governance Journey

👉 Want to save a quick $100K? Check out our AI Governance Tools at AIGovernanceToolkit.com

👉 Subscribe for more AI and cyber insights with the occasional rant.

The views and opinions expressed in RockCyber Musings are my own and do not represent the positions of my employer or any organization I’m affiliated with.