AI Agent Authentication Gets the Hard Part Right. Authorization Is Still Your Problem.

IETF's new AI agent auth draft nails identity with WIMSE and SPIFFE but skips per-action authorization.

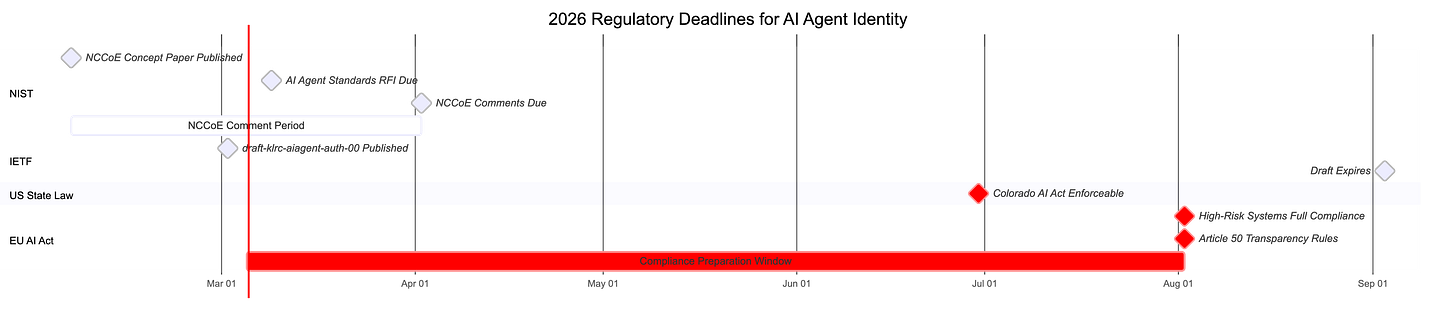

The IETF just published its most ambitious attempt to standardize how AI agents prove their identity across systems. The draft, draft-klrc-aiagent-auth-00, dated March 2, 2026, composes WIMSE, SPIFFE, and OAuth 2.0 into a 26-page framework called AIMS (Agent Identity Management System). The authentication layer is solid. The authorization layer stops at the token boundary. The Security Considerations section contains two words: “TODO Security.” If you’re deploying agentic systems in production, you need to understand where this draft helps you and where you still have to build your own controls.

Before I get into specifics, a quick note on what this document actually is. An IETF Internet-Draft (I-D) is a working document, the raw material that may eventually become an RFC (an official Internet standard). This one is version -00, the very first public iteration from Pieter Kasselman (Defakto Security), Jean-Francois Lombardo (AWS), Yaroslav Rosomakho (Zscaler), and Brian Campbell (Ping Identity). Criticizing a -00 draft for incompleteness is a bit like reviewing someone’s outline and complaining the conclusion is thin. That said, people are already reading this as deployment guidance, and the gaps matter for anyone building agentic systems today. So let’s talk about what it covers, what it doesn’t cover yet, and what you need to build yourself while the standards process catches up.

The good news: agents are workloads, and workloads have an identity stack

The draft’s foundational thesis gets it right: AI agents are workloads, running instances of software executing specific tasks, not some new identity category that requires new protocols. That framing unlocks SPIFFE’s attestation-bound cryptographic identity, WIMSE’s cross-system workload semantics, and OAuth 2.0’s delegation framework. No new protocols needed.

This matters because SPIFFE already works at scale. Uber processes billions of attestations daily through SPIRE. Block runs the full SPIFFE+WIMSE+OAuth stack in production. The draft codifies patterns that companies with real security engineering teams already deploy.

The WIMSE identifiers specified in the draft bind agent identity to the execution environment through attestation, which can be rooted in hardware where the platform supports it. A SPIRE agent on each node performs workload attestation by examining the kernel or querying the orchestration platform. Your agent’s identity gets measured from where it runs, not merely asserted by who registered it. An OAuth client_id is a registration artifact. A SPIFFE ID, delivered in a signed SVID (SPIFFE Verifiable Identity Document), is cryptographic proof that Agent X is actually Agent X, running in the expected environment.
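For illustration, here is a minimal Python sketch of the check a relying service might run once the signed identity document carrying the SPIFFE ID (the SVID) has been verified: pull out the SPIFFE ID and confirm it names the expected trust domain and workload path. It enforces only a subset of the SPIFFE ID rules, and the trust domain `example.org` and path `/agents/meeting-notes` are hypothetical. In a real deployment the ID comes from a SPIFFE library’s verified SVID, never from an unverified string.

```python
import re
from urllib.parse import urlsplit

# Subset of the SPIFFE ID rules: lowercase letters, digits, '.', '-', '_' only.
_TRUST_DOMAIN = re.compile(r"[a-z0-9._-]+")


def parse_spiffe_id(spiffe_id: str) -> tuple[str, str]:
    """Split a SPIFFE ID into (trust_domain, path) or raise ValueError."""
    parts = urlsplit(spiffe_id)
    if parts.scheme != "spiffe":
        raise ValueError("scheme must be 'spiffe'")
    if not _TRUST_DOMAIN.fullmatch(parts.netloc):
        raise ValueError("invalid trust domain")
    if parts.query or parts.fragment:
        raise ValueError("query and fragment are not allowed")
    return parts.netloc, parts.path


def is_expected_agent(verified_id: str, trust_domain: str, allowed_paths: set[str]) -> bool:
    """True only if the already-verified ID names an allowed workload in our trust domain."""
    try:
        domain, path = parse_spiffe_id(verified_id)
    except ValueError:
        return False
    return domain == trust_domain and path in allowed_paths
```

The path comparison is deliberately exact. Matching on the trust domain alone would accept any workload that domain’s SPIRE server issued an identity to.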

The draft also gets credentials right. Short-lived, cryptographically bound, explicit expiration. Static API keys are called out as unsuitable for agent authentication: bearer artifacts with no cryptographic binding, no identity conveyance, operationally painful to rotate.
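Those three properties translate directly into an acceptance check. The sketch below assumes the credential’s signature has already been verified and its claims decoded into a dict; it uses the standard JWT `exp` and `iat` claims, and treats the RFC 7800 `cnf` (confirmation) claim as the marker of key binding. The 15-minute ceiling is a hypothetical policy value, not something the draft specifies.

```python
import time
from typing import Optional

MAX_LIFETIME_SECONDS = 15 * 60  # hypothetical policy ceiling


def credential_problems(claims: dict, now: Optional[float] = None) -> list[str]:
    """Return the reasons a decoded credential is unacceptable (empty list = acceptable)."""
    now = time.time() if now is None else now
    problems = []
    exp, iat = claims.get("exp"), claims.get("iat")
    if exp is None:
        problems.append("no explicit expiration")
    elif exp <= now:
        problems.append("expired")
    if exp is not None and iat is not None and exp - iat > MAX_LIFETIME_SECONDS:
        problems.append("lifetime too long")
    if "cnf" not in claims:
        problems.append("not bound to a key (no cnf claim)")
    return problems


# A static API key, modeled as claims, fails on both expiry and binding.
print(credential_problems({"sub": "svc-account"}, now=1100))
# ['no explicit expiration', 'not bound to a key (no cnf claim)']
```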

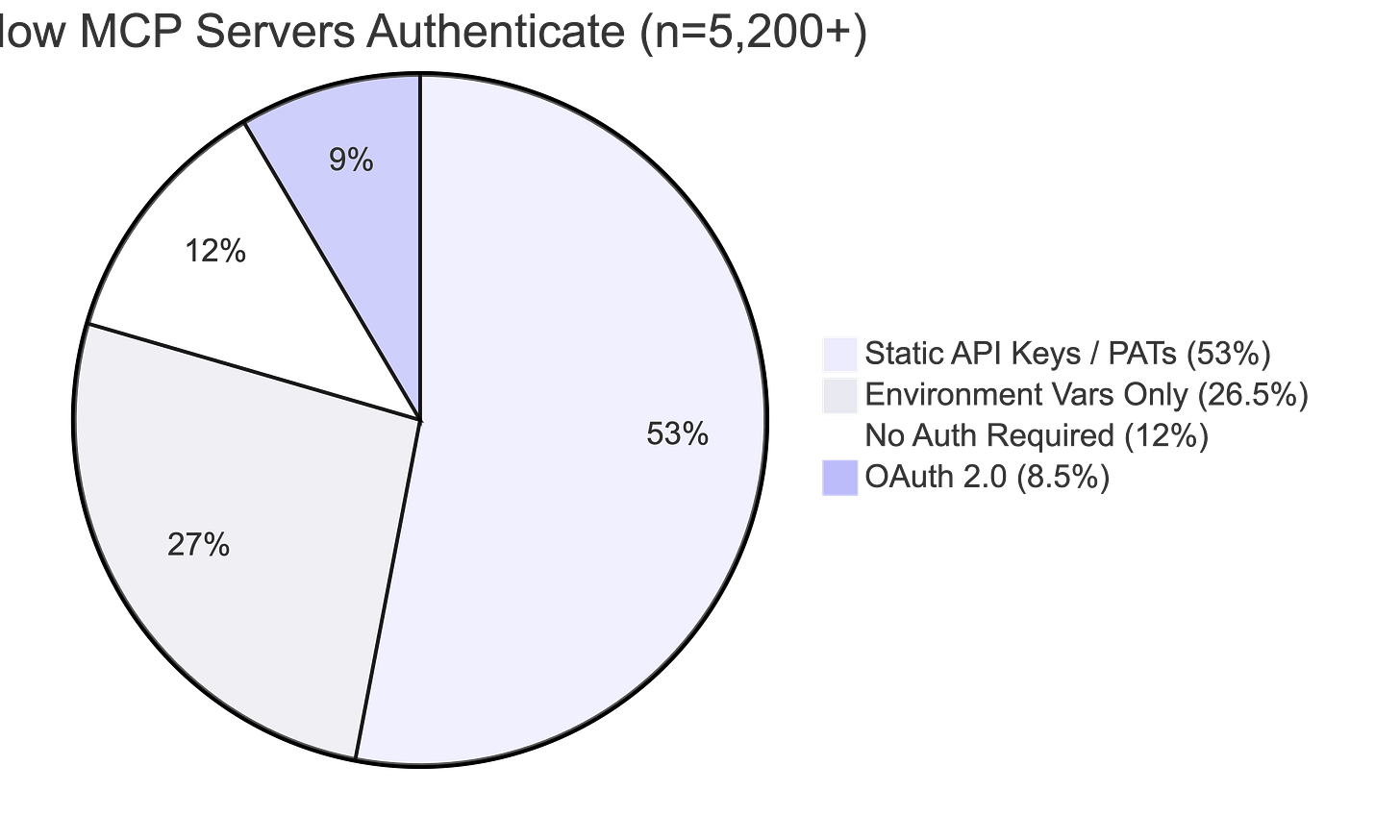

That warning couldn’t come at a better time. Astrix Security analyzed over 5,200 open-source MCP server implementations and found that 53% rely on static API keys or Personal Access Tokens. Only 8.5% use OAuth. The ecosystem is building on exactly the anti-pattern the draft condemns.

Transaction Tokens solve the lateral movement problem

Section 10.4 addresses a real attack vector most frameworks ignore. When access tokens propagate through internal microservice chains within an agent workflow, every hop creates a theft and replay opportunity.

The draft’s answer is Transaction Tokens (draft-ietf-oauth-transaction-tokens-08). Short-lived, signed JWTs that bind user identity, workload identity, and authorization context to a specific transaction. Lifetimes are measured in seconds to minutes. Cryptographic signatures prevent context modification. You can’t grab a Transaction Token from one transaction and replay it in another because the transaction context is cryptographically sealed. A companion draft (draft-oauth-transaction-tokens-for-agents-04) extends this with agent-specific fields for the acting agent, the initiating human, and operational constraints.

The draft also correctly identifies tools forwarding access tokens to downstream services as an anti-pattern.

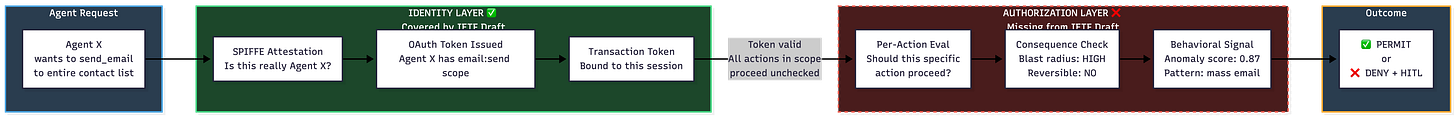

The authorization gap: where scope alone isn’t enough

Here’s where the draft’s -00 status shows. Once an OAuth access token gets issued with a set of scopes, every action within those scopes proceeds unchecked until the token expires. No per-action evaluation. No consequence assessment. No behavioral feedback loop. The authors clearly know authorization needs more work (the AIMS conceptual model describes layers that the spec hasn’t filled in yet), but anyone reading this draft as a deployment blueprint today will inherit that gap.

Think about what that means in practice. An agent granted email:send so it can send meeting notes can use that same scope to send a different message to every contact in the address book. Each action is technically within scope. The framework treats them identically. The authorization decision happened once, at token issuance. Everything after that is a free pass.
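Here’s the gap reduced to a few lines of Python. A hypothetical token-boundary check asks only whether the scope was granted, so a one-recipient meeting-notes email and a 500-recipient blast look identical to it. The recipient addresses are placeholders.

```python
def scope_allows(granted_scopes: set[str], required_scope: str) -> bool:
    """Token-boundary authorization: was the scope granted at issuance? Nothing else."""
    return required_scope in granted_scopes


granted = {"email:send"}
meeting_notes = {"tool": "send_email", "recipients": ["team@example.com"]}
mass_mail = {"tool": "send_email",
             "recipients": [f"contact{i}@example.com" for i in range(500)]}

for action in (meeting_notes, mass_mail):
    # The recipient count never enters the decision.
    print(len(action["recipients"]), scope_allows(granted, "email:send"))
# 1 True
# 500 True
```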

OWASP’s Top 10 for Agentic Applications draws a distinction that the draft hasn’t addressed yet: least agency versus least privilege. Least privilege asks what the agent can access. Least agency extends that to how much freedom the agent has to act on that access without checking back.

The term “least agency” appears nowhere in the draft. Section 10.8 says agents should request minimum scopes and authorization details. That’s least privilege applied to OAuth scopes. Standard stuff. It does nothing to constrain autonomous decision-making within those scopes.

OWASP’s ASI03 (Identity and Privilege Abuse) mitigation guidance recommends per-action authorization through a centralized policy engine. Not once at token issuance. At each privileged step. The draft doesn’t provide a mechanism for this yet, and future revisions may address it. In the meantime, you need to build that layer yourself.
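A minimal sketch of what “at each privileged step” means in code: every tool invocation passes through a policy check before it runs, and that check sees the action’s details rather than just the scope. `evaluate` is a hypothetical stand-in for a call to a central policy engine, and the ten-recipient limit is an illustrative rule, not an OWASP figure.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass(frozen=True)
class Decision:
    allowed: bool
    reason: str


class PolicyDenied(Exception):
    pass


def evaluate(agent_id: str, action: str, details: dict) -> Decision:
    """Hypothetical central policy, consulted on every privileged step."""
    if action == "send_email":
        count = len(details.get("recipients", []))
        if count > 10:  # illustrative per-action limit
            return Decision(False, f"{count} recipients exceeds the per-action limit")
    return Decision(True, "within policy")


def invoke_tool(agent_id: str, action: str, tool: Callable[..., Any], **details: Any) -> Any:
    """Run the tool only if this specific invocation is authorized."""
    decision = evaluate(agent_id, action, details)
    if not decision.allowed:
        raise PolicyDenied(decision.reason)
    return tool(**details)


def send_email(recipients: list[str]) -> str:  # hypothetical tool
    return f"sent to {len(recipients)}"


print(invoke_tool("spiffe://example.org/agents/notes", "send_email", send_email,
                  recipients=["team@example.com"]))
# sent to 1
```

The same token and scope are in play for both a one-recipient and a 500-recipient call; only the per-invocation check tells them apart.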

Your token says “allowed.” What it can’t say is “should you?”

The deeper issue goes beyond per-action evaluation. The draft in its current form contains no mechanisms for assessing the potential impact of an action before permitting it. No concept of blast radius. No reversibility check. No impact severity score. Again, this is version -00. These concepts may arrive in later revisions. They’re absent today.

Consider the practical difference. An agent with files:read_write scope can read one file or delete every file in scope. The OAuth framework treats these as equivalent actions. They aren’t. One is routine. The other is catastrophic and irreversible.

Consequence-based authorization asks three questions per permission:

What’s the worst action this agent can take?

Is the damage reversible?

Can you reverse it within an acceptable recovery window?

OAuth scopes can’t answer any of these.
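The three questions can be written down as data plus a small decision rule. In this sketch the `Consequence` fields, the minutes-based recovery estimate, and the 60-minute window are all assumptions for illustration; the point is that the inputs are properties of the action, which a scope string doesn’t carry.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Consequence:
    worst_action: str        # 1. What's the worst thing this permission allows?
    reversible: bool         # 2. Can the damage be undone at all?
    recovery_minutes: float  # 3. How long would undoing it take? (math.inf if never)


def consequence_decision(c: Consequence, acceptable_recovery_minutes: float) -> str:
    if not c.reversible:
        return "require_approval"   # irreversible: a human decides
    if c.recovery_minutes > acceptable_recovery_minutes:
        return "require_approval"   # reversible, but outside the recovery window
    return "allow"


drafting = Consequence("create a draft", True, 1)
delete_all_backed_up = Consequence("delete every file in scope", True, 240)
delete_all_no_backup = Consequence("delete every file in scope", False, math.inf)

for c in (drafting, delete_all_backed_up, delete_all_no_backup):
    print(c.worst_action, "->", consequence_decision(c, acceptable_recovery_minutes=60))
# create a draft -> allow
# delete every file in scope -> require_approval
# delete every file in scope -> require_approval
```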

The emerging practice of graduated trust models (read-only, then draft-only, then supervised execution, then earned autonomy) represents an informal consequence-based approach. Practitioners broadly agree that, in high-stakes contexts, most agents never earn full autonomy. That’s the correct outcome. The draft provides no framework for expressing or enforcing these graduation stages.
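One way to make graduation stages enforceable is to encode them as ordered levels and check each action’s effect against the agent’s current level. The stage names follow the progression above; the effect categories, the mapping between them, and the `high_stakes` override are hypothetical.

```python
from enum import IntEnum


class TrustStage(IntEnum):
    READ_ONLY = 1
    DRAFT_ONLY = 2
    SUPERVISED = 3
    AUTONOMOUS = 4


# Minimum stage needed for each kind of effect (hypothetical mapping).
REQUIRED_STAGE = {
    "read": TrustStage.READ_ONLY,
    "draft": TrustStage.DRAFT_ONLY,
    "execute": TrustStage.SUPERVISED,
}


def stage_decision(stage: TrustStage, effect: str, high_stakes: bool = False) -> str:
    if stage < REQUIRED_STAGE[effect]:
        return "deny"
    if effect == "execute" and (stage == TrustStage.SUPERVISED or high_stakes):
        # Supervised agents, and any agent in a high-stakes context, need sign-off.
        return "require_approval"
    return "allow"
```

The `high_stakes` flag encodes the outcome described above: even an agent at the top stage still routes execution through a human when the context is high-stakes.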

OWASP’s ASI08 (Cascading Failures) recommends blast-radius caps and digital twin replay testing. Run recorded agent actions in an isolated environment first. See if sequences trigger cascading failures before expanding policy permissions. Future revisions of the draft could incorporate these concepts. For now, they’re outside its scope.
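A toy version of replay-before-expand, assuming recorded actions are simple `(operation, path, ...)` tuples and the “digital twin” is just a deep copy of a dict-shaped snapshot. A real twin is an isolated environment, not an in-memory copy, but the decision logic is the same: measure how many resources the recorded sequence would touch and compare that against a blast-radius cap before granting broader permissions.

```python
import copy


def replay_in_twin(snapshot: dict, recorded_actions: list[tuple]) -> int:
    """Apply recorded actions to a copy of the state; return how many resources changed."""
    twin = copy.deepcopy(snapshot)  # the original state is never touched
    touched = set()
    for op, path, *payload in recorded_actions:
        if op == "delete":
            if path in twin:
                del twin[path]
                touched.add(path)
        elif op == "write":
            twin[path] = payload[0]
            touched.add(path)
        else:
            raise ValueError(f"unknown operation: {op}")
    return len(touched)


def safe_to_expand(snapshot: dict, recorded_actions: list[tuple], blast_radius_cap: int) -> bool:
    return replay_in_twin(snapshot, recorded_actions) <= blast_radius_cap


state = {"a.txt": "1", "b.txt": "2", "c.txt": "3"}
print(safe_to_expand(state, [("write", "a.txt", "updated")], blast_radius_cap=2))  # True
print(safe_to_expand(state, [("delete", p) for p in state], blast_radius_cap=2))   # False
```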

The observability gap: strong detection, no policy feedback loop

Section 11’s observability requirements are genuinely strong for detection and audit. Seven minimum audit event fields. Correlation across agents, tools, services, and LLMs. The ability to reconstruct complete execution chains, including delegated authority and intermediate calls.

The draft calls observability “a security control, not solely an operational feature.” Correct. Then it integrates the OpenID Shared Signals Framework with CAEP (Continuous Access Evaluation Profile) for real-time signal delivery. Also good.

The problem is that the AIMS conceptual model in Section 4 promises observability that can “dynamically modify authorization decisions based on observed behavior and system state.” The actual specification delivers reactive remediation: terminate sessions, discard tokens, re-acquire with updated constraints. Detection flows to dashboards and SIEM tools. It doesn’t feed into the policy decision point that evaluates each authorization request. The conceptual model is ahead of the spec, which is normal for a -00 draft. The spec will likely catch up. You can’t afford to wait for it.

An agent exhibiting anomalous tool invocation patterns should see its authorization dynamically narrowed. Not through token revocation (which is all-or-nothing) but through policy-level constraints on permitted actions. The draft gives you a circuit breaker when you need a rheostat.
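A rheostat can be as simple as mapping an anomaly score to progressively smaller sets of permitted actions, so rising suspicion removes the riskiest capabilities first instead of killing the session. The score range, tier boundaries, and action names below are hypothetical.

```python
# (score ceiling, permitted actions); the riskiest actions drop out first.
TIERS = [
    (0.3, frozenset({"read", "draft", "send", "delete"})),
    (0.6, frozenset({"read", "draft", "send"})),
    (0.8, frozenset({"read", "draft"})),
    (1.0, frozenset({"read"})),
]


def permitted_actions(anomaly_score: float) -> frozenset:
    """Narrow, rather than revoke, as the anomaly score rises."""
    for ceiling, actions in TIERS:
        if anomaly_score <= ceiling:
            return actions
    return frozenset()  # beyond every tier: the circuit breaker finally trips
```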

NIST SP 800-207 (Zero Trust Architecture) explicitly recommends a trust score that changes dynamically based on entity behavior patterns, feeding into the policy engine. Context-aware authorization systems from companies such as Zscaler and StrongDM already implement this pattern in production (not endorsing either). I’d expect future revisions of the draft to engage with these models, especially given that Zscaler’s Rosomakho is one of the four co-authors.

AuthZEN fills the gap the draft hasn’t reached yet

The most interesting omission in the current document involves AuthZEN (OpenID Authorization API 1.0), which was approved as a Final Specification in January 2026. It standardizes a transport-agnostic API through which any Policy Enforcement Point (PEP) can query any Policy Decision Point (PDP), regardless of vendor. The information model is a four-element tuple:

Subject (the agent), Action (the operation), Resource (the target), Context (ambient attributes).

Every agent tool invocation maps cleanly to an AuthZEN evaluation: subject is the agent’s SPIFFE ID, action is “send_email,” resource is “contact_list,” context carries the delegating user, blast radius classification, reversibility flag, and behavioral anomaly score. The context object is extensible and open-ended. It was designed for exactly this kind of dynamic, attribute-rich decision-making.
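Here is roughly what that evaluation looks like on the wire, sketched in Python. The top-level shape (`subject`, `action`, `resource`, `context`, with a boolean `decision` in the response) follows the AuthZEN Access Evaluation API; the `type` values and every key inside `context` are illustrative assumptions, since AuthZEN deliberately leaves context open-ended.

```python
import json


def build_evaluation_request(spiffe_id: str, action: str, resource_type: str,
                             resource_id: str, context: dict) -> dict:
    """Four-element AuthZEN tuple for one agent tool invocation."""
    return {
        "subject": {"type": "agent", "id": spiffe_id},
        "action": {"name": action},
        "resource": {"type": resource_type, "id": resource_id},
        "context": context,
    }


def is_permitted(response_body: str) -> bool:
    """AuthZEN responses carry a boolean "decision"; anything else is treated as deny."""
    return json.loads(response_body).get("decision") is True


request = build_evaluation_request(
    "spiffe://example.org/agents/meeting-notes",
    "send_email",
    "contact_list",
    "all-contacts",
    {   # hypothetical context attributes
        "delegating_user": "alice@example.com",
        "blast_radius": "high",
        "reversible": False,
        "anomaly_score": 0.12,
    },
)
body = json.dumps(request)  # POSTed to the PDP's evaluation endpoint
```

Failing closed in `is_permitted` matters: a malformed or empty PDP response should never read as “allowed.”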

The draft lists AuthZEN among its normative references, but the body text doesn’t discuss it yet. Given that AuthZEN solves the draft’s most significant open question, I’d bet it features prominently in the next revision. For now, that connection is yours to make.

Three policy engines deserve attention for filling that gap. OPA (Open Policy Agent), a CNCF Graduated project, evaluates structured JSON input against declarative Rego policies with sub-millisecond latency. Cedar, from AWS, supports automated reasoning via an SMT solver that can mathematically prove properties about policies, and it benchmarks 42 to 60 times faster than Rego. Topaz, from Aserto (whose CEO co-authored the AuthZEN specification), combines OPA’s decision engine with a built-in Zanzibar-style relationship graph.

OAuth provides coarse-grained delegation: who can access which resource category. Policy engines provide fine-grained runtime evaluation: should this specific action on this specific resource proceed, given the current context? That layered model is where the draft needs to go next. Until it gets there, you build it yourself.
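The layering can be sketched as two gates: a cheap scope check from the token, then a per-request query to a policy engine. This version targets OPA’s REST Data API (`POST /v1/data/<path>` with an `input` document, answer in `result`). The policy path `agents/authz/allow` and the localhost address are hypothetical, and the HTTP call is injected as `send` so the logic can run without a live server.

```python
import json
import urllib.request
from typing import Callable

OPA_URL = "http://localhost:8181/v1/data/agents/authz/allow"  # hypothetical policy path


def opa_request(input_doc: dict) -> urllib.request.Request:
    return urllib.request.Request(
        OPA_URL,
        data=json.dumps({"input": input_doc}).encode(),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def authorize(granted_scopes: set[str], required_scope: str, input_doc: dict,
              send: Callable[[urllib.request.Request], bytes]) -> bool:
    # Gate 1, coarse: OAuth delegation. Fails fast without calling the PDP.
    if required_scope not in granted_scopes:
        return False
    # Gate 2, fine: this action, this resource, this context.
    # OPA omits "result" when the rule is undefined; treat that as deny.
    return json.loads(send(opa_request(input_doc))).get("result") is True


def send_to_opa(req: urllib.request.Request) -> bytes:
    """Production transport: a real HTTP round trip to the OPA server."""
    with urllib.request.urlopen(req, timeout=2) as resp:
        return resp.read()
```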

Regulatory timelines won’t wait for standards completion

The EU AI Act’s high-risk system requirements take full effect August 2, 2026 (as of this writing, anyway). Five months from now. Article 14 requires human oversight. Article 26 requires deployers to keep automatically generated logs for at least six months. The draft’s identity-bound audit trails and CIBA-based human-in-the-loop mechanism directly support both.

NIST launched two converging initiatives in February 2026: the NCCoE concept paper on AI agent identity and authorization, and the AI Agent Standards Initiative covering security controls, identity, and testing. Both center on WIMSE/SPIFFE + OAuth. Both explicitly include policy-based access control, the piece the IETF draft’s -00 revision hasn’t specified yet.

The Colorado AI Act establishes a “reasonable care” standard for high-risk AI systems, effective June 30, 2026. Widely adopted standards can serve as evidence of reasonable care in court. The identity architecture the draft describes will likely qualify on the authentication side. You still need to build the authorization layer yourself.

MCP and A2A still have fundamental identity gaps

Mapping the IETF draft’s framework onto the Model Context Protocol reveals how far the ecosystem still has to travel. MCP identifies agents as OAuth clients with a client_id, a registration artifact with no attestation binding. No SPIFFE identity verification. No attestation mechanism. No multi-hop delegation. No standard mapping between tool names and OAuth scopes. The draft recommends Workload Proof Tokens for proof-of-possession. MCP uses bearer tokens.

MCP’s OAuth model is human-centric (Authorization Code + PKCE). The Client Credentials Grant for machine-to-machine authentication was removed from the spec and is only returning through an extension. Fully autonomous agents have no standard authentication path in MCP today. Google’s A2A protocol has similar gaps: self-declared identities with no attestation binding, credential acquisition out of scope, authorization left to the receiving agent.

Riptides demonstrated the draft’s compositional pattern working for MCP in practice. Each workload gets a SPIFFE SVID, used as a software statement in Dynamic Client Registration and as a JWT assertion for client authentication. The pattern works. It required significant custom integration that no standard profile defines.

What you should build now

Don’t wait for standards completion. The threat model OWASP defined already exists. The regulatory deadlines are set.

Start with SPIFFE/SPIRE for attestation-bound agent identity. Use SVIDs as JWT assertions (RFC 7523) to obtain OAuth tokens. This follows the pattern the draft describes and Riptides validated in production.
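For the token request itself, RFC 7523 defines the `urn:ietf:params:oauth:client-assertion-type:jwt-bearer` client assertion type for authenticating a client with a JWT. The sketch below builds that form body with a JWT-SVID as the assertion. It assumes the authorization server has been configured to accept JWT-SVIDs from your trust domain (RFC 7523 expects specific `iss`, `sub`, and `aud` values, and mapping a JWT-SVID onto them is part of the custom integration noted earlier). The scope value is a placeholder.

```python
from urllib.parse import urlencode

JWT_BEARER_ASSERTION = "urn:ietf:params:oauth:client-assertion-type:jwt-bearer"


def client_credentials_body(jwt_svid: str, scope: str) -> str:
    """application/x-www-form-urlencoded body for the token endpoint (RFC 7523, Section 2.2)."""
    return urlencode({
        "grant_type": "client_credentials",
        "scope": scope,
        "client_assertion_type": JWT_BEARER_ASSERTION,
        # JWT-SVID fetched from the SPIFFE Workload API with the AS as audience
        "client_assertion": jwt_svid,
    })
```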

Deploy an AuthZEN-compliant PDP (OPA, Cedar, or Topaz). Evaluate every agent tool invocation against dynamic policy. Pass agent identity, action details, resource metadata, delegation context, and behavioral signals in the AuthZEN context object.

Write Cedar or Rego policies encoding blast-radius thresholds, reversibility requirements, graduated trust levels, and human-in-the-loop triggers. Version-control policies alongside application code.

Tag every tool and action with impact metadata: blast_radius, reversible, data_sensitivity, scope. Enforce that irreversible high-blast-radius actions require explicit human approval through CIBA step-up authorization.
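A minimal sketch of tagging plus enforcement, assuming tools are plain Python functions registered through a decorator. The four tag names come from the list above; their value vocabularies are assumptions. `approve` is a hypothetical callback standing in for CIBA step-up: in a real system it would start a backchannel authentication request to the delegating user and block until they approve or deny, which this sketch does not implement.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass(frozen=True)
class Impact:
    blast_radius: str      # "low" | "medium" | "high"
    reversible: bool
    data_sensitivity: str  # e.g. "internal" | "restricted"
    scope: str             # OAuth scope the tool requires


TOOL_IMPACT: dict[str, Impact] = {}


def tagged(impact: Impact):
    """Decorator that records a tool's impact metadata in the registry."""
    def register(fn):
        TOOL_IMPACT[fn.__name__] = impact
        return fn
    return register


def call_tool(fn: Callable[..., Any], approve: Callable[[str], bool], **kwargs: Any) -> Any:
    impact = TOOL_IMPACT.get(fn.__name__)
    if impact is None:
        raise PermissionError(f"{fn.__name__} has no impact metadata")  # fail closed
    if impact.blast_radius == "high" and not impact.reversible:
        if not approve(fn.__name__):  # hypothetical stand-in for CIBA step-up
            raise PermissionError(f"human approval denied for {fn.__name__}")
    return fn(**kwargs)


@tagged(Impact("low", True, "internal", "files:read"))
def read_file(path: str) -> str:
    return f"contents of {path}"


@tagged(Impact("high", False, "restricted", "files:read_write"))
def purge_directory(path: str) -> str:
    return f"purged {path}"
```

Untagged tools are refused outright, which keeps “we forgot to classify it” from silently becoming “allowed.”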

Feed observability data into the policy engine as real-time context attributes. Stop sending behavioral signals only to SIEM dashboards for post-hoc investigation. Make them first-class policy inputs.
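As one example of a behavioral signal promoted to a policy input, the sketch below turns an agent’s recent tool-invocation rate into a score between 0 and 1 that can travel in the AuthZEN context object on every evaluation. The window length, the “expected maximum” baseline, and the linear scoring are assumptions; real anomaly detection would be richer, but the plumbing (observe, score, attach to the next decision) is the part that matters here.

```python
from collections import deque


class InvocationRate:
    """Sliding-window tool-call counter per agent (timestamps assumed non-decreasing)."""

    def __init__(self, window_seconds: float, expected_max: int):
        self.window_seconds = window_seconds
        self.expected_max = expected_max
        self.events: dict[str, deque] = {}

    def record(self, agent_id: str, ts: float) -> None:
        self.events.setdefault(agent_id, deque()).append(ts)

    def anomaly_score(self, agent_id: str, now: float) -> float:
        calls = self.events.get(agent_id, deque())
        while calls and calls[0] <= now - self.window_seconds:
            calls.popleft()  # drop calls that fell out of the window
        return min(1.0, len(calls) / self.expected_max)


agent = "spiffe://example.org/agents/notes"
rate = InvocationRate(window_seconds=60, expected_max=20)
for t in range(10):
    rate.record(agent, 1000 + t)

# Attach the live signal to the next authorization request's context.
context = {"anomaly_score": rate.anomaly_score(agent, now=1009)}
print(context)  # {'anomaly_score': 0.5}
```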

Key Takeaway: The IETF draft gives you a strong answer to “is this really Agent X?” It hasn’t answered “should Agent X do this specific thing right now?” yet. That gap will close as the draft matures. In the meantime, authentication without per-action authorization is a locked front door with open windows. Build the authorization layer now.

What to do next

If you’re building agentic systems and trying to figure out where identity controls fit, start with the CARE framework at rockcyber.com for mapping security controls to business risk outcomes. The RISE framework helps you evaluate where your organization sits on the AI security maturity curve, particularly useful for figuring out which authorization controls to prioritize first.

The agent identity problem is a microcosm of the larger question the book addresses: how do you govern autonomous systems when the blast radius of failure compounds faster than your ability to detect it?

More analysis on agentic AI security, MCP authorization gaps, and practical frameworks for building authorization layers at rockcybermusings.com.

👉 Subscribe for more AI security and governance insights with the occasional rant.

👉 Visit RockCyber.com to learn more about how we can help you in your traditional Cybersecurity and AI Security and Governance Journey

👉 Want to save a quick $100K? Check out our AI Governance Tools at AIGovernanceToolkit.com

The views and opinions expressed in RockCyber Musings are my own and do not represent the positions of my employer or any organization I’m affiliated with.