Five Eyes Agentic AI Guidance: Architecture, Not a Checklist

The Five Eyes agencies published an agentic AI architecture, not a checklist. See how AAGATE maps its controls to the NIST AI RMF for production governance.

On May 1, 2026, six allied cyber agencies dropped 30 pages on agentic AI security, and the industry promptly reached for its highlighters. Twenty-three risks and more than a hundred best practices. The initial reflex is to map them to existing controls and call it a project plan.

WRONG!

CISA, NSA, ASD, NCSC-UK, NCSC-NZ, and the Cyber Centre published an architecture brief disguised as a guidance document. Read it that way, and the work changes.

The Misreading That’s Happening

Pick any board deck circulating right now, and I’ll bet the Five Eyes guidance shows up as a row in a control matrix (if at all). Privilege controls: check. Identity management: check. Logging: check. Someone in the room nods, the GRC team gets a tracking spreadsheet, and the agentic AI rollout continues at the same pace as before May 1.

That’s the failure mode. The document contains 23 distinct risks and over 100 individual best practices to address them. You don’t bolt 100 practices onto an existing platform without changing its shape, which is to say its architecture. Treating a system-level prescription as line-item compliance is how you end up with the “audit-passes-but-the-thing-is-still-broken” pattern that plagues us to this day.

Read the document carefully, and the architectural intent is everywhere. Identity binds to privilege. Privilege binds to tool access. Tool access binds to logging. Logging binds to accountability. Each control assumes the others exist. Each one fails when built alone. The agencies named this directly when they recommended system-theoretic approaches like STPA and STPA-Sec, calling out that traditional component-level analysis is insufficient because risks emerge from interactions between components rather than isolated flaws.

That single paragraph is the operational thesis. The rest of the document describes how to build for it. A senior security practitioner, reading carefully, will recognize a familiar pattern: this is what happens when policy folks finally accept that you can’t write a checkbox for emergent risk.

The question now is what production systems look like when somebody actually does the work. AAGATE is one answer, and we released it last November.

What the Document Actually Says

Strip the fluff, and the document organizes around five risk categories:

Privilege risk

Design and configuration flaws

Behavioral risk

Structural risk

Accountability risk

The categories aren’t mutually exclusive. They’re stacked dependencies.

Privilege risk is the foundation. The procurement-agent scenario in the guidance is a classic confused-deputy attack. An over-permissioned agent gets compromised through a low-risk tool, the attacker inherits the agent’s privileges, and modified contracts and approved payments slip past audit logs that look legitimate.

Design and configuration risk sits atop privilege. Static permission checks at startup don’t survive dynamic workflows. Allow lists go stale. Boundaries between agent enclaves erode under operational pressure. Behavioral risk piles onto that. Goal misalignment, specification gaming, deceptive behavior, and emergent capabilities all assume the agent has already been granted enough autonomy to act in surprising ways.

Structural risk is where it gets interesting. The agencies describe cascading failures across orchestration layers, tool integrations, third-party components, agent-to-agent communication, and shared data stores. A single rogue agent in a multi-agent system corrupts consensus, spreads incorrect information, alters logs, and propagates malicious plans peer-to-peer. None of this is fixable at the agent level alone.

Accountability risk closes the loop. Decisions made through long reasoning chains, stochastic outputs, and emergent multi-agent interactions are difficult to audit, attribute, or reproduce. The agencies reach for cryptographic identity, comprehensive artifact logging, and unified audit logs across inter-agent interactions. They’re describing a system property, not a feature you purchase.

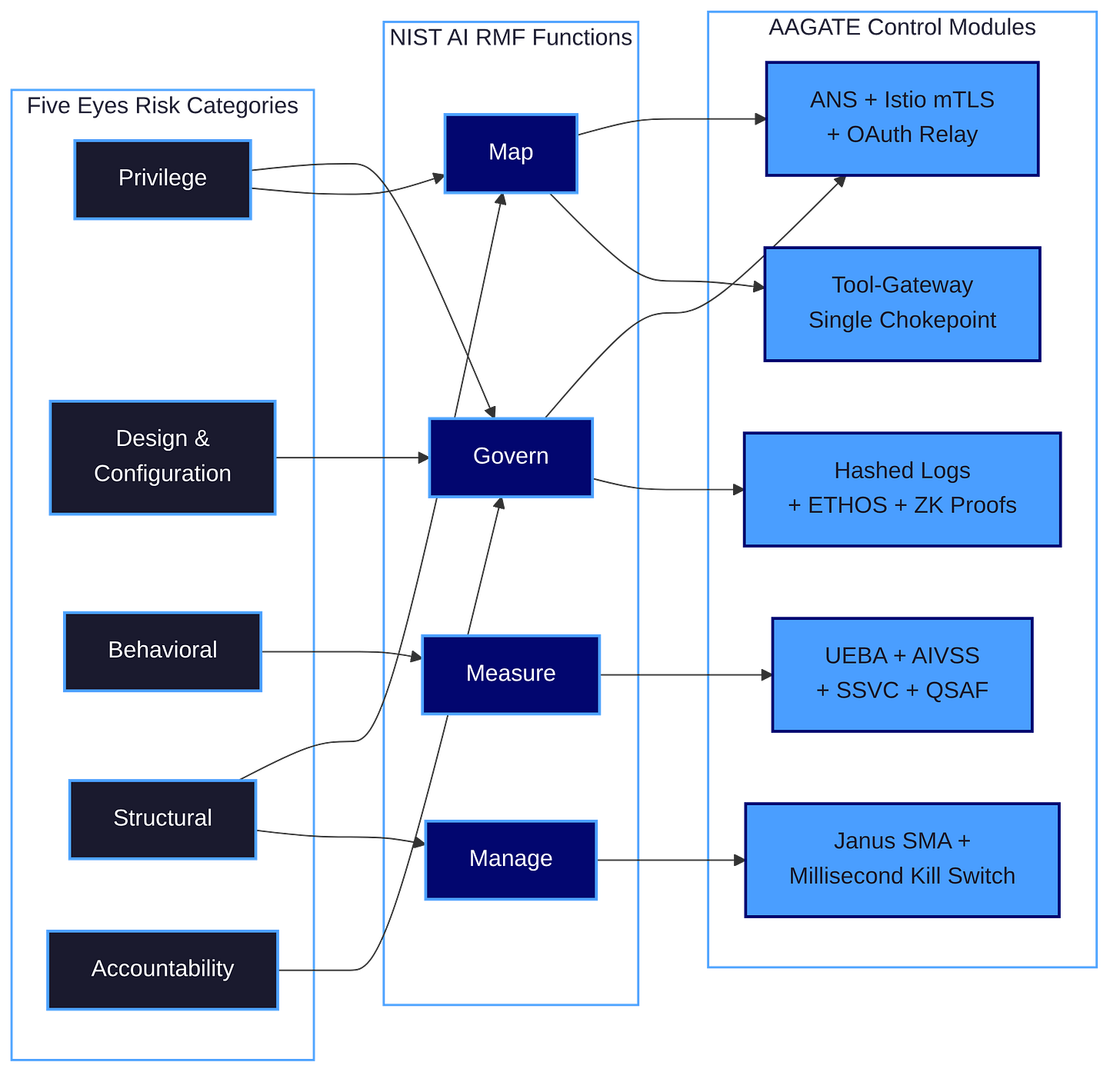

AAGATE Maps the Architecture to NIST AI RMF

AAGATE is a Kubernetes-native control plane built to operationalize the NIST AI Risk Management Framework against agentic AI systems. The paper, which I co-authored with Ken Huang, Hammad Atta, and a research team, was published to arXiv in late 2025. It picks NIST AI RMF as the spine because the RMF’s four functions, Govern, Map, Measure, and Manage, are general enough to absorb the Five Eyes prescriptions without forcing translation. The novelty isn’t the alignment to RMF. The novelty is the prescriptive toolchain: MAESTRO for Map, OWASP AIVSS plus SEI SSVC for Measure, the CSA Agentic AI Red Teaming Guide for Manage, and a zero-trust service mesh anchoring Govern.

What follows is the mapping the Five Eyes document points at without naming. Five control areas. Each one shows what the architecture looks like when you stop treating the guidance as a checklist.

1. Identity-Anchored Privilege (Govern + Map)

The Five Eyes document spends real ink on this. It tells developers to construct each agent as a distinct principal with its own cryptographically anchored identity and unique keys or certificates, to authenticate every inter-agent and agent-to-service API call with mutual TLS, and to maintain a trusted registry that’s reconciled against the live set of agents. It tells operators to use just-in-time credentials, cryptographic attestation, and a centralized policy decision point that runs at every request.

Those aren’t separate controls. They’re one architecture.

AAGATE’s Agent Naming Service builds it. ANS works like DNS for agents. When a new agent starts, it registers its Decentralized Identifier and capabilities, and the service issues a Verifiable Credential along with an Istio SPIFFE certificate that binds the pod’s identity to its cryptographic DID. Other agents resolve through the registry. Anything not in the registry gets denied. Istio mTLS authenticates every pod-to-pod call with X.509 certificates. The OAuth Relay translates abstract agent capabilities into ephemeral, narrowly-scoped credentials for each side-effect, which is the only practical way to do least-privilege when traditional user-centric consent models break down.

Try doing any one of those pieces without the others and the system collapses. A registry without mTLS is unauthenticated. mTLS without ephemeral credentials still leaks long-lived tokens. Ephemeral credentials without a registry have no verification path at issuance. The Five Eyes guidance lists these as separate best practices. AAGATE shows why they’re one control.
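The dependency between registry and ephemeral credentials can be sketched in a few lines. This is a minimal illustration under stated assumptions, not AAGATE’s implementation: `AgentRegistry`, `Credential`, the scope strings, and the 60-second lifetime are all hypothetical names and values, and the sketch leaves out DIDs, Verifiable Credentials, and mTLS entirely.

```python
import secrets
import time
from dataclasses import dataclass


@dataclass(frozen=True)
class Credential:
    token: str
    agent_id: str
    scope: str
    expires_at: float


class AgentRegistry:
    """Hypothetical in-memory stand-in for a trusted agent registry."""

    def __init__(self):
        self._capabilities = {}  # agent_id -> set of scopes the agent may request

    def register(self, agent_id, capabilities):
        self._capabilities[agent_id] = set(capabilities)

    def issue(self, agent_id, scope, ttl_seconds=60.0, now=None):
        """Issue a short-lived credential for exactly one scope, or refuse."""
        now = time.time() if now is None else now
        allowed = self._capabilities.get(agent_id)
        if allowed is None:
            raise PermissionError(f"unknown agent: {agent_id}")
        if scope not in allowed:
            raise PermissionError(f"{agent_id} lacks capability: {scope}")
        return Credential(secrets.token_urlsafe(16), agent_id, scope, now + ttl_seconds)

    def authorize(self, cred, scope, now=None):
        """Checked on every request: still registered, in scope, not expired."""
        now = time.time() if now is None else now
        allowed = self._capabilities.get(cred.agent_id, set())
        return cred.scope == scope and scope in allowed and now < cred.expires_at


registry = AgentRegistry()
registry.register("procurement-agent", {"contracts:read"})
cred = registry.issue("procurement-agent", "contracts:read", now=1000.0)
```

Issuance has a verification path only because the registry exists, and the credential is safe to hand out only because it expires and names a single scope. Remove either half and the other stops meaning anything.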

This is also why CISOs aren’t the only audience for this work. Identity engineers, IAM architects, platform teams, and product leaders need to read it. The org chart that ships agentic AI safely is wider than the security team’s mailing list.

2. The Single Chokepoint for Side-Effects (Map)

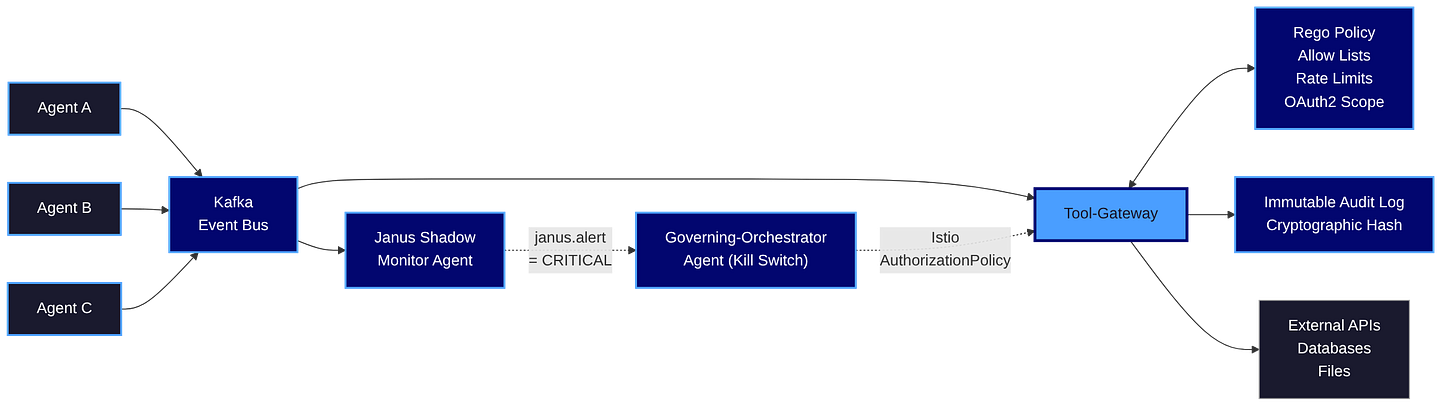

The guidance recommends restricting tool use to an approved allow list of tools and versions, logging agent tool usage so results are captured in system logs in human-readable format, and establishing trigger-action protocols that automatically restrict agent permissions when unexpected behavior emerges. It tells you to harmonize the controls with the NIST Zero Trust Architecture.

The architectural pattern that satisfies all three is one many readers will resist: a single chokepoint. Every external HTTP, database, or file operation funnels through one gateway. The mesh denies egress everywhere else. Bypass attempts get quarantined.

AAGATE’s Tool-Gateway is exactly that. Agents publish requests to a Kafka topic. The Tool-Gateway consumes them, checks policies (allow lists, rate limits, scope), executes the action if permitted, and logs the request and response with a cryptographic hash to an immutable audit log. The Governing-Orchestrator Agent watches for bypass attempts and quarantines the offender on detection. OAuth2 token exchange happens at the Gateway, with refresh tokens never leaving its memory vault.
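A rough sketch of that flow, with the Kafka transport, OAuth2 exchange, and immutable storage left out. Everything named here is an assumption made for illustration: the `ToolGateway` class, the tool names and versions, and the rate limit are hypothetical, and a plain Python list stands in for the audit log.

```python
import hashlib
import json
import time
from collections import defaultdict, deque


class ToolGateway:
    """Hypothetical single chokepoint: policy check, execute, hashed audit record."""

    def __init__(self, tools, allow_list, max_calls_per_minute=5):
        self._tools = tools                # tool name -> callable
        self._allow_list = allow_list      # set of (tool name, version) pairs
        self._max_calls = max_calls_per_minute
        self._calls = defaultdict(deque)   # agent_id -> timestamps of allowed calls
        self.audit_log = []                # append-only by convention in this sketch

    def _record(self, entry):
        # Hash the canonical JSON form so any later edit to the entry is detectable.
        payload = json.dumps(entry, sort_keys=True).encode()
        self.audit_log.append({**entry, "sha256": hashlib.sha256(payload).hexdigest()})

    def handle(self, agent_id, tool, version, args, now=None):
        now = time.time() if now is None else now
        window = self._calls[agent_id]
        while window and now - window[0] >= 60:
            window.popleft()               # drop calls older than the 60 s window
        if (tool, version) not in self._allow_list:
            decision, result = "deny:not_allow_listed", None
        elif len(window) >= self._max_calls:
            decision, result = "deny:rate_limited", None
        else:
            window.append(now)
            decision, result = "allow", self._tools[tool](**args)
        # Denials are logged too: the audit trail covers every request, not just successes.
        self._record({"agent": agent_id, "tool": tool, "version": version,
                      "args": args, "decision": decision, "result": result, "ts": now})
        return decision, result


gateway = ToolGateway(
    tools={"http_get": lambda url: f"fetched {url}"},
    allow_list={("http_get", "1.2.0")},
)
```

Every decision, allowed or denied, passes through one `handle` method and leaves one hashed record. That is the property the single-chokepoint pattern buys.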

A single chokepoint smells like a bottleneck to anyone raised on horizontally scaled architectures. That intuition is wrong here. The Tool-Gateway is the place where agentic AI security becomes tractable. Threat modeling becomes tractable because you have one comprehensive map of every system interaction. Audit becomes tractable because logs are unified. Policy enforcement becomes tractable because the decision point is centralized. Eliminate the chokepoint and you’ve spread the same controls across hundreds of agent-tool integrations, none of which will be enforced consistently. Distributed systems engineers will object. Reality wins.

3. Continuous Measurement (Measure)

The Five Eyes document repeats itself when it talks about monitoring. Use multiple independent monitoring systems that cross-validate. Monitor agent operations, including internal processes, not the inputs and outputs alone. Watch for goal drift by comparing active objectives against approved baseline specifications. Establish anomaly detection that flags discrepancies between stated intentions and observed behavior. Implement runtime monitoring with rules or behavioral baselines.

Reading those passages, I count at least six distinct signal types the document expects you to collect, score, and respond to in real time. Treating them as line items is how you end up with six dashboards nobody watches.

AAGATE compresses them into one pipeline. The UEBA Behavior Profiler builds per-agent behavioral fingerprints with anomaly scores, using Isolation Forest and Markov chains. The ComplianceAgent streams events from the Tool-Gateway, applies Rego policy checks plus LLM-driven reasoning, and generates security signals scored using OWASP’s AI Vulnerability Scoring System. The Governing-Orchestrator Agent consumes the scored incidents through an SSVC-inspired decision tree to choose a proportionate response. QSAF monitors run alongside, watching for cognitive degradation: recursion loops, memory starvation, context flooding, the kinds of failure modes invisible to conventional anomaly detection.
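To make the behavioral fingerprint idea concrete, here is a minimal sketch of the Markov-chain half only. It is not the UEBA Behavior Profiler and it leaves Isolation Forest out. The `MarkovProfile` class, the action names, and the add-one smoothing are assumptions chosen for illustration.

```python
import math
from collections import Counter, defaultdict


class MarkovProfile:
    """First-order Markov model over one agent's sequence of actions."""

    def __init__(self, smoothing=1.0):
        self.smoothing = smoothing
        self.transitions = defaultdict(Counter)  # previous action -> Counter of next actions
        self.vocab = set()

    def fit(self, sequences):
        for seq in sequences:
            self.vocab.update(seq)
            for prev, nxt in zip(seq, seq[1:]):
                self.transitions[prev][nxt] += 1
        return self

    def _prob(self, prev, nxt):
        counts = self.transitions.get(prev, Counter())
        # Additive smoothing; the extra slot reserves probability for unseen actions.
        slots = len(self.vocab) + 1
        return (counts[nxt] + self.smoothing) / (sum(counts.values()) + self.smoothing * slots)

    def anomaly_score(self, seq):
        """Mean negative log-likelihood per transition; higher means more unusual."""
        pairs = list(zip(seq, seq[1:]))
        if not pairs:
            return 0.0
        return -sum(math.log(self._prob(p, n)) for p, n in pairs) / len(pairs)


baseline = [["read_contract", "plan", "write_summary"]] * 20
profile = MarkovProfile().fit(baseline)
normal = profile.anomaly_score(["read_contract", "plan", "write_summary"])
odd = profile.anomaly_score(["read_contract", "write_summary", "delete_log", "delete_log"])
```

Here `odd` scores higher than `normal` because the sequence contains transitions the baseline never produced. A production profiler would need far richer features than tool names, but the shape is the same: learn what the agent usually does, then score each new sequence against it.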

The pattern is Observe, Score with AIVSS, Decide with SSVC, Act, then Learn. None of those steps are optional. Skip the scoring, and you have no priority order. Skip the decision tree, and you have no machine-speed response. Skip the cognitive monitors, and you miss a class of failures the document explicitly names.
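The Score and Decide steps can be sketched as a small decision tree. This is loosely modeled on the idea of SSVC-style branching, not on the actual SSVC decision points or the AIVSS formula. The `Signal` fields, the thresholds, and the four responses are hypothetical placeholders.

```python
from dataclasses import dataclass
from enum import Enum


class Response(Enum):
    LOG = "log"
    RESTRICT = "restrict_permissions"
    HUMAN_REVIEW = "pause_for_human_review"
    QUARANTINE = "quarantine"


@dataclass(frozen=True)
class Signal:
    agent_id: str
    severity: float          # 0-10 placeholder score, NOT computed with the real AIVSS formula
    active: bool             # is the behavior still occurring?
    has_side_effects: bool   # can the agent change external state?


def decide(signal):
    """Hypothetical decision tree: branch on severity, then on context."""
    if signal.severity >= 9.0 and signal.active:
        return Response.QUARANTINE
    if signal.severity >= 7.0:
        return Response.HUMAN_REVIEW if signal.has_side_effects else Response.RESTRICT
    if signal.severity >= 4.0 and signal.active:
        return Response.RESTRICT
    return Response.LOG


def triage(signals):
    """Scoring gives the priority order; the tree gives the response."""
    ordered = sorted(signals, key=lambda s: s.severity, reverse=True)
    return [(s.agent_id, decide(s)) for s in ordered]


queue = triage([
    Signal("summarizer", 3.1, active=True, has_side_effects=False),
    Signal("procurement-agent", 9.4, active=True, has_side_effects=True),
    Signal("scheduler", 7.5, active=False, has_side_effects=True),
])
```

Without the score, `triage` has nothing to sort on. Without the tree, a human has to choose the response for every signal by hand. That is the dependency the paragraph above describes.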

Most enterprises run anomaly detection bolted onto SIEM. That doesn’t work for agents. The signals are different, the response cadence is different, and the decision logic has to be co-located with the policy decision point, not 20 minutes downstream.

4. Adversarial Defense and the Kill Switch (Manage)

The guidance tells operators to conduct red teaming exercises, deploy agent simulation tests including multi-agent red teaming or chaos testing, embed agentic AI systems with fail-safe defaults and containment mechanisms that limit blast radius, and implement runtime monitoring and anomaly detection to identify unusual patterns and trigger alerts or pauses.

Periodic red teaming doesn’t keep up with agents acting at machine speed. Manual chaos exercises don’t catch a goal-drift event occurring in the 17 seconds between human review cycles. The guidance is asking for continuous adversarial pressure with millisecond response time. That’s a runtime architecture, not a quarterly engagement.

AAGATE answers it with the Janus Shadow-Monitor-Agent. Janus runs in a different container with a different model, re-evaluating every high-risk agent’s planned actions before execution. Goal manipulation, hallucination exploitation, and drift from baseline specifications get caught pre-execution rather than post-mortem. When Janus raises a CRITICAL alert, the Governing-Orchestrator Agent injects an Istio AuthorizationPolicy that cuts all egress. AAGATE calls it the millisecond kill switch because that’s the time window the system operates in.
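The control flow of review-before-execute plus a kill switch looks roughly like the sketch below. It is a simplified stand-in, not Janus: a rule-based check replaces the second model, and a plain callback replaces the Istio AuthorizationPolicy injection. `Baseline`, `PlannedAction`, `ShadowMonitor`, and the spending ceiling are hypothetical names and values.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Baseline:
    allowed_tools: frozenset
    max_amount: float        # hypothetical per-action spending ceiling


@dataclass(frozen=True)
class PlannedAction:
    agent_id: str
    tool: str
    amount: float = 0.0


class ShadowMonitor:
    """Re-evaluates each planned action against an approved baseline before it runs."""

    def __init__(self, baselines, quarantine):
        self._baselines = baselines   # agent_id -> Baseline
        self._quarantine = quarantine # kill-switch callback, called once per agent
        self.quarantined = set()

    def review(self, action):
        if action.agent_id in self.quarantined:
            return "CRITICAL"         # already contained; nothing further may run
        baseline = self._baselines.get(action.agent_id)
        if baseline is None or action.tool not in baseline.allowed_tools:
            self.quarantined.add(action.agent_id)
            self._quarantine(action.agent_id)
            return "CRITICAL"
        if action.amount > baseline.max_amount:
            return "HIGH"
        return "OK"


def execute(monitor, action, run):
    """Run the action only if the shadow review clears it."""
    verdict = monitor.review(action)
    if verdict != "OK":
        return verdict, None
    return verdict, run(action)


cut_off = []  # stands in for whatever actually severs the agent's egress
monitor = ShadowMonitor(
    {"procurement-agent": Baseline(frozenset({"read_contract", "approve_payment"}), 1000.0)},
    quarantine=cut_off.append,
)
```

The ordering is the point: `review` runs before `run`, so a blocked action never produces a side effect that someone later has to roll back.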

The pairing matters. A continuous internal red team without a kill switch is as useless as a bucket with a gaping hole in the bottom. A kill switch without continuous red teaming has nothing to act on. Five Eyes named both controls separately. AAGATE shows why they’re the same control.

This is also where the OT crowd should pay attention. The guidance recommends defense-in-depth and continuous evaluation. In OT contexts, that translates directly to “you don’t roll back a physical actuator.” Containment has to happen before the action, not after.

5. Tamper-Evident Accountability (Govern)

The accountability section of the guidance is the hardest one. The agencies want comprehensive artifact logging, unified audit logs for inter-agent interactions, interpretability tools that surface reasoning, and information referencing that shows where outputs originated. They’re describing what the EU AI Act Article 12 calls automatic recording of events, plus what auditors call evidence of effective control operation. Whether and when the EU AI Act’s high-risk obligations actually start to apply is another conversation altogether…

Conventional logging breaks down here. Long reasoning chains generate massive logs that are repetitive and loosely structured. The Five Eyes document is blunt: traditional logs make it even more challenging to extract meaningful signals. Accountability fails not because the data isn’t recorded, but because nobody proves it wasn’t tampered with after the fact.

AAGATE’s answer combines three patterns. Cryptographic hashes on every Tool-Gateway request and response give you tamper-evidence at the unit level. The optional ETHOS ledger integration mirrors agent registrations and material governance events to a public smart contract, creating a tamper-proof record of agent identity and status. The ZK-Prover service hashes logs hourly and posts Groth16 zero-knowledge proofs on-chain, showing that incidents stayed within the contract-tier budget, giving you privacy-preserving compliance assurance without exposing operational data.
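Tamper-evidence is easiest to see with a hash chain, where each entry commits to the one before it. The sketch below is a generic illustration and an assumption about how such a log could be structured. It is not AAGATE’s log format, and it implements neither the ETHOS ledger nor Groth16 proofs.

```python
import hashlib
import json

GENESIS = "0" * 64  # placeholder previous-hash for the first entry


def _digest(prev_hash, record):
    payload = json.dumps(record, sort_keys=True).encode()
    return hashlib.sha256(prev_hash.encode() + payload).hexdigest()


def append(chain, record):
    """Add a record whose hash covers both its content and the previous hash."""
    prev_hash = chain[-1]["hash"] if chain else GENESIS
    chain.append({"record": record, "prev": prev_hash, "hash": _digest(prev_hash, record)})
    return chain[-1]["hash"]


def verify(chain):
    """Return the index of the first inconsistent entry, or None if the chain is intact."""
    prev_hash = GENESIS
    for i, entry in enumerate(chain):
        if entry["prev"] != prev_hash or entry["hash"] != _digest(prev_hash, entry["record"]):
            return i
        prev_hash = entry["hash"]
    return None


log = []
append(log, {"agent": "procurement-agent", "action": "read_contract"})
append(log, {"agent": "procurement-agent", "action": "approve_payment", "amount": 120})
head = append(log, {"agent": "procurement-agent", "action": "close_ticket"})
```

Editing any earlier record changes its digest, so `verify` reports the index of the altered entry. An attacker with write access to the whole log could still recompute every hash, which is why the head hash (`head` here) has to be anchored somewhere the attacker cannot reach. That is the role external anchoring plays.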

Argue with the on-chain pieces if you want. They’re optional in single-tenant deployments, and the AAGATE paper says so explicitly. The cryptographic hashing isn’t optional. If your accountability model doesn’t prove logs weren’t altered after the fact, you don’t have accountability. You have hope.

What This Means Going Forward

The Five Eyes document changes the burden of proof. Boards, regulators, and acquirers now have a coordinated multi-government statement naming architecture-level controls as the floor, not the ceiling. The document says it in so many words: “Until security practices, evaluation methods and standards mature, organisations should assume that agentic AI systems may behave unexpectedly.” That sentence will undoubtedly show up in due diligence questionnaires.

If you’re operating agentic AI today, you have two choices.

Option one: take the line-item path, map controls to a tracking spreadsheet, and ship 100 separate workstreams that someone else’s auditor will pull apart in 18 months.

Option two: read the guidance as an architectural prescription, pick a reference build like AAGATE, and treat your agentic security work as a platform engineering problem rather than a compliance problem.

I know which one I’d present to a board.

Key Takeaway: The Five Eyes guidance describes a system property, not a checklist, and compliance follows from architecture rather than the other way around. AAGATE provides that reference architecture.

What to do next

If your agentic AI program is more than a pilot, audit it against the five risk categories now and look for the architectural gaps the line-item view will hide. The CARE framework I use for AI-augmented security programs lays out how to sequence Create, Adapt, Run, and Evolve work without burning out the platform team. For the technical reference, read the AAGATE paper on arXiv and treat it as a reference architecture rather than a finished product. If you want help mapping current state to the Five Eyes prescriptions and a NIST AI RMF aligned target architecture, RockCyber does this work with security and engineering leadership across critical infrastructure and financial services. For more posts like this, RockCyber Musings lands in your inbox roughly once a week.
