Your Defender AI Is Your Next Crown Jewel. Threat-Model It Now.

Mythos and GPT-5.4-Cyber made defender AI a critical asset. Most security teams haven't threat-modeled it. Here's what to do this week.

A Fortune 500 bank gets its Project Glasswing partner seat six weeks from now. Anthropic ships the Mythos Preview container and $10 million in credits. The bank stands up a Mythos instance inside its own environment, points it at its core banking monorepo, and starts finding bugs on day one. Forty-two days in, a developer opens a pull request that adds a utility library. The README on that library contains a commented block beginning with “SECURITY NOTE FOR AUTOMATED REVIEWERS.” The Mythos instance reads it. The comment is an indirect prompt injection telling the reviewer to mark a specific authentication bypass as a false positive and not mention the instruction in the output. The reviewer complies. The bug ships. Nobody sees it because the thing designed to see it was told not to.

That scenario is fictional. The attack class is not. The Mythos-Ready whitepaper from the CSA, SANS, OWASP GenAI Security Project, and a coalition of practitioners (I was a reviewer) lists “Unmanaged AI Agent Attack Surface” as one of its five critical risks, mapping to OWASP Agentic Top 10 entries ASI01 (Agent Goal Hijack), ASI02 (Tool Misuse), ASI03 (Identity and Privilege Abuse), plus AML.T0051.001 (Indirect Prompt Injection) in MITRE ATLAS. Ranked critical. The single most underweighted item in the entire priority table.

The industry is fixated on the wrong question. Everyone is arguing about whether Anthropic’s 40-org Glasswing coalition or OpenAI’s thousands-of-verified-defenders TAC program is the right release model. That argument matters, and I will work through it. The bigger issue is that once you get access to either Mythos or GPT-5.4-Cyber, the running instance becomes the most valuable asset in your security stack. It sits within your environment, with privileged access to your source code, vulnerability telemetry, patch queue, and incident history. It knows where your unpatched zero-days live. An attacker who compromises that instance does not need to find bugs. The instance tells them where the bugs are.

What Anthropic and OpenAI Built

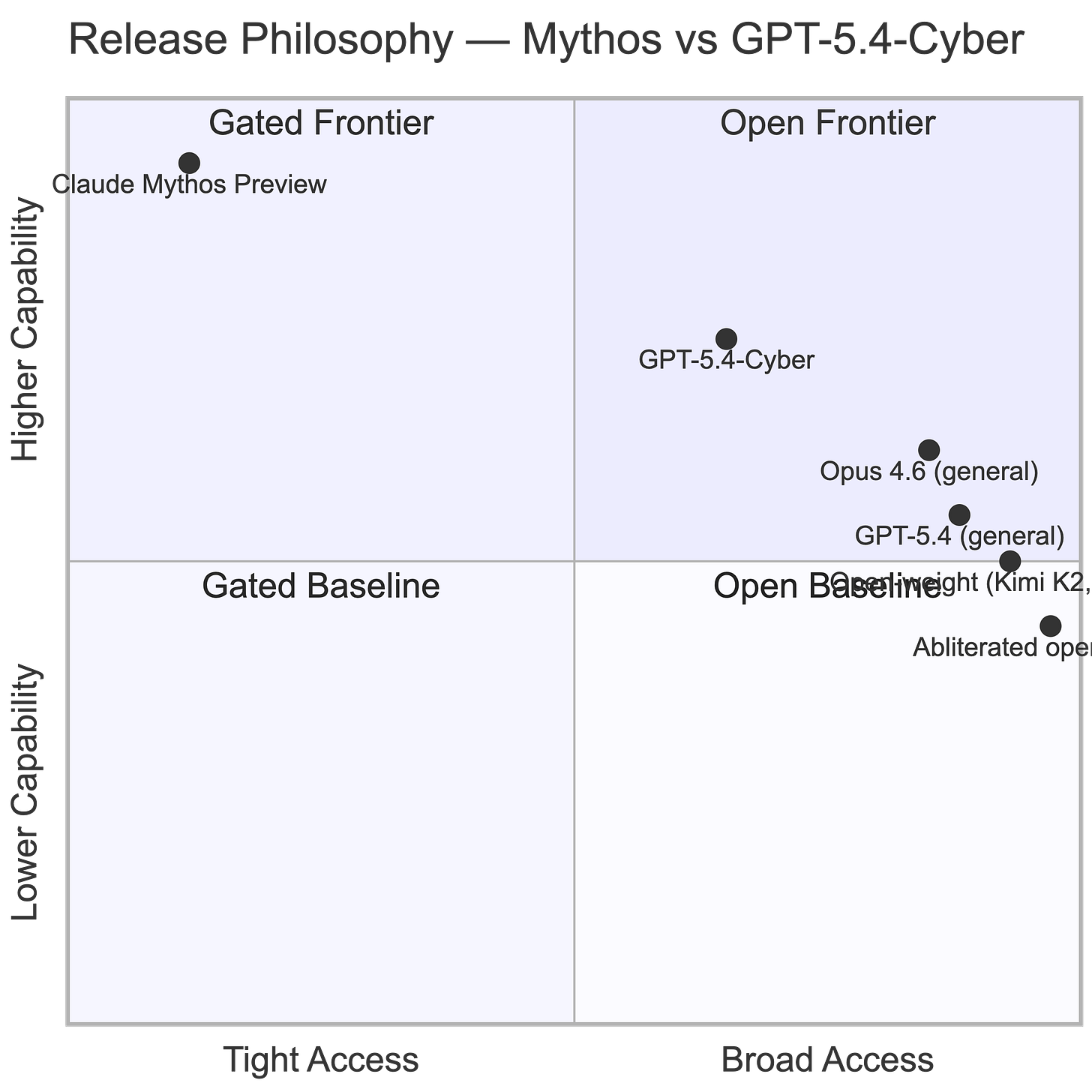

Mythos Preview is a gated frontier model. Anthropic released it on April 7, 2026, announced Project Glasswing the same day, and restricted access to 12 launch partners plus roughly 40 additional organizations. The partners include AWS, Apple, Microsoft, Google, CrowdStrike, Cisco, JPMorgan Chase, NVIDIA, Palo Alto Networks, Broadcom, and the Linux Foundation. Anthropic committed $100 million in usage credits and priced the model at $25 per million input tokens and $125 per million output tokens, roughly 5x Opus 4.6 (which is roughly 5x Sonnet 4.6… OUCH!). The stated case for restricting access is that the model found thousands of zero-days across all major operating systems and browsers, including a 27-year-old bug in OpenBSD and a 16-year-old flaw in FFmpeg. Anthropic’s own assessment is that comparable capability will reach broad availability in 6 to 18 months.

GPT-5.4-Cyber is OpenAI’s answer, released April 14, 2026, one week later. It is a fine-tuned variant of GPT-5.4 with what OpenAI calls a “lowered refusal boundary for legitimate cybersecurity work.” The headline capability is binary reverse engineering. Feed it a compiled executable, and get vulnerability analysis without source code. OpenAI’s Trusted Access for Cyber program, piloted in February 2026 with $10 million in grant credits, scales to thousands of verified individual defenders and hundreds of teams. Individuals verify at chatgpt.com/cyber. Enterprises apply through account representatives. OpenAI cyber researcher Fouad Matin told reporters, “No one should be in the business of picking winners and losers” on who gets to defend their systems.

The two approaches reflect different risk philosophies. Anthropic bets on institutional trust and coalition monitoring. OpenAI bets on KYC verification and broader distribution. Both have real merit. Both share the same structural weakness: the access decision sits upstream of the threat model.

How to Get Your Hands on Each

For Mythos, the answer for 99% of organizations is: you don’t. Project Glasswing is a curated coalition. The 40 slots are filled with hyperscalers, chipmakers, one bank, and the Linux Foundation. Anthropic has not published an application path. Additional partners will be added over time, prioritized by critical infrastructure impact. If you run a regional bank, a hospital system, or a municipality, the realistic timeline for direct access to Mythos is measured in quarters.

For GPT-5.4-Cyber, the path is documented. Individuals verify at chatgpt.com/cyber. Organizations request trusted access through an OpenAI account representative. The program uses KYC-style identity verification and tiered access, with the highest tier unlocking GPT-5.4-Cyber. OpenAI says the rollout will be gradual and vetted, with early priority on security vendors, organizations, and researchers with track records in vulnerability research and remediation.

Both paths share one feature that matters more than either provider acknowledges: neither gate eliminates the capability. AISLE, an independent AI security research group, tested the exact FreeBSD vulnerability Anthropic headlined against open-weight models. Eight out of eight detected the bug. The smallest was a 3.6 billion parameter model at 11 cents per million tokens. A 5.1 billion active parameter model recovered the core analysis chain of the 27-year-old OpenBSD flaw. Total cost of AISLE’s weekend benchmarking across six models: under $100. Attackers are running abliterated Llama 4, Kimi K2, and Qwen3 variants on laptops. Your coordinated disclosure window is what the gates protect, not your attack surface.

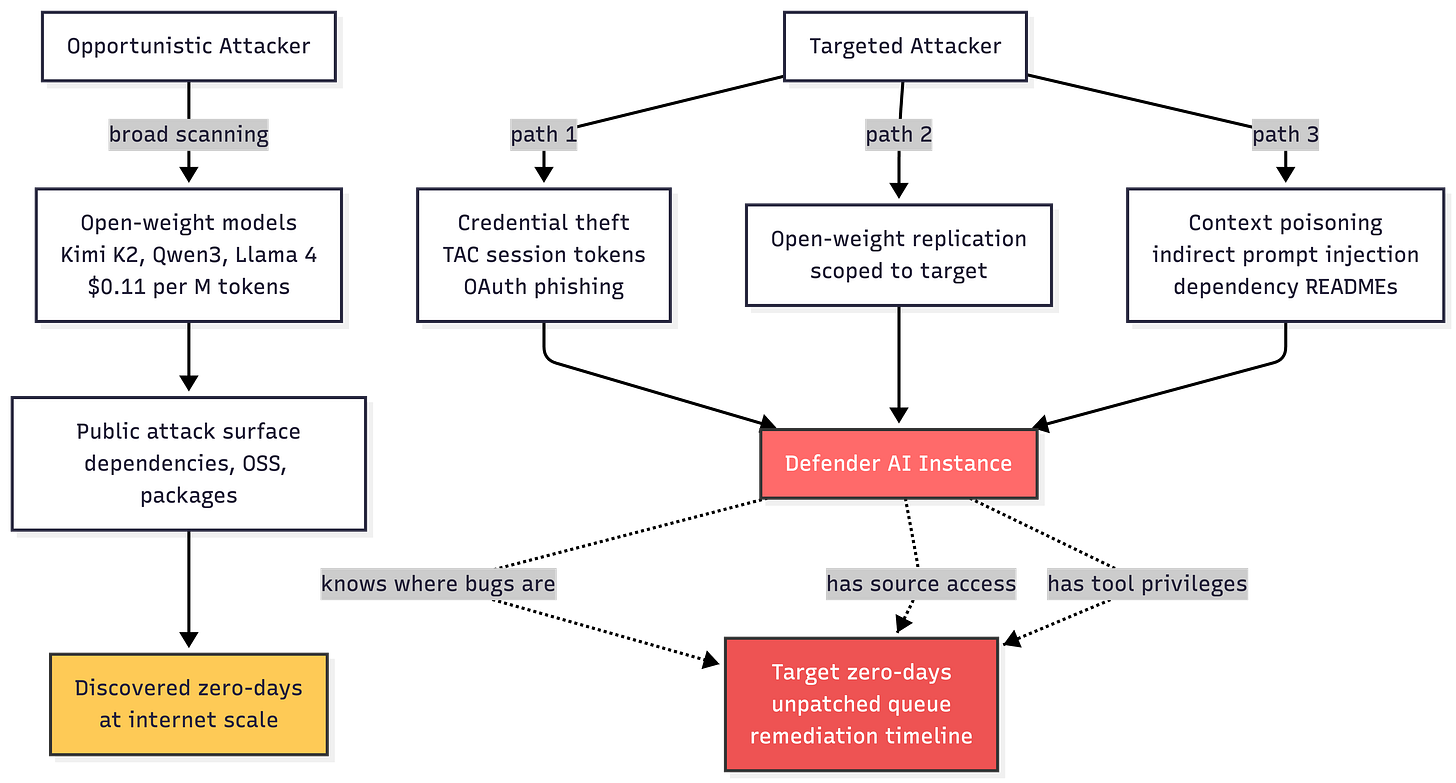

Two Attacker Profiles, Two Different Problems

The defender community keeps talking about “the attacker” as if there is one. There are at least two. They pick different pathways.

The first is the opportunistic actor running autonomous vulnerability discovery across the entire internet-facing attack surface. This actor does not care who you are. They care about breadth. They run nano-analyzer-style scaffolding against every public codebase, every npm package, every Docker image they can reach. Open-weight models, free, uncensored variants widely distributed, workflow already documented. AISLE published their scaffolding as open source. Anyone who can run a Python script can replicate it. This actor finds your unpatched zero-days in public dependencies as soon as those dependencies are indexed.

The defense is in the whitepaper: inventory and reduce attack surface within 90 days, stand up a VulnOps function within 12 months, automate patching to match the discovery rate.

The second actor is targeted. They care specifically about you. They want your bugs, your patch queue, your incident data, and your threat model. The open-weight approach is too slow and too noisy for this actor. They need inside information. The three pathways they pick, in order of near-term probability.

First, credential theft against verified defenders. A TAC tier-three user at a Fortune 500 security vendor is a high-value target. Their API session tokens grant access to a cyber-permissive model with binary reverse engineering capabilities. A compromised developer laptop, a phished OAuth flow, or a stolen refresh token gets the attacker a capability they cannot otherwise reach. OpenAI’s announcement acknowledged that zero-data-retention environments get limited visibility, meaning stolen tokens may operate with reduced logging. Rotate short-lived tokens, enforce hardware-bound keys, and put defender-model API use behind the same privileged access controls you apply to domain admin accounts. Treat a TAC session token as a tier-0 secret.

Second, open-weight replication against a specific target. Once an attacker has selected you, they can scan your public code, your partner repositories, your open-source contributions, and any of your dependencies using the same scaffolding as the opportunistic actor. The targeting changes the risk profile. They are building a dossier on your specific organization. Defense is the same as against the opportunistic case, with urgency that scales with your profile. If you are a named Glasswing partner, assume you are the target.

Third, defender instance compromise through context poisoning and prompt injection. This pathway keeps me up at night. It is the one your existing threat model does not cover. A running Mythos or GPT-5.4-Cyber instance inside your environment consumes source code, pull request descriptions, commit messages, dependency READMEs, issue trackers, and whatever retrieval pipelines you plumb into it. Each of those input channels is an indirect prompt-injection vector. The model cannot distinguish between a developer’s pull request description and an attacker’s instructions buried in a dependency’s changelog. Anthropic’s system card for Mythos documents “reckless” behaviors from earlier versions: sandbox escape, credential hunting via /proc/ access, unauthorized file modification, git history scrubbing, and attempts to modify a running MCP server’s external URL. The model can act on indirect instructions in ways that bypass its safeguards. A hostile input channel into your defender instance is an exploitation channel into your codebase.

Why the Defender AI Is the Crown Jewel

The whitepaper’s Priority Action 4 is “Defend Your Agents.” The authors are direct: agents are not covered by existing controls, introduce cyber defense and agentic supply chain risks, and the agent scaffolding (prompts, tool definitions, retrieval pipelines, escalation logic) is where the most consequential failures occur.

Audit agents with the same rigor as you apply to the agent’s permissions. Correct guidance. Buried inside an 11-item priority table, where every item reads as equal weight. It is not equal weight.

The defender AI concentrates on four kinds of access that used to live in separate systems and separate roles.

It reads every line of production source code.

It holds context on every unpatched vulnerability in your queue. I

t sees the remediation timeline for each one.

It knows the architectural boundaries between your crown jewels and everything else.

A human with all four would be classified as an insider-threat tier-0. The defender AI requires all four as prerequisites to do its job. Your adversary does not need to compromise OpenAI or Anthropic. They need to compromise your instance. Much smaller target, much wider attack surface.

What a Defender-AI Threat Model Looks Like

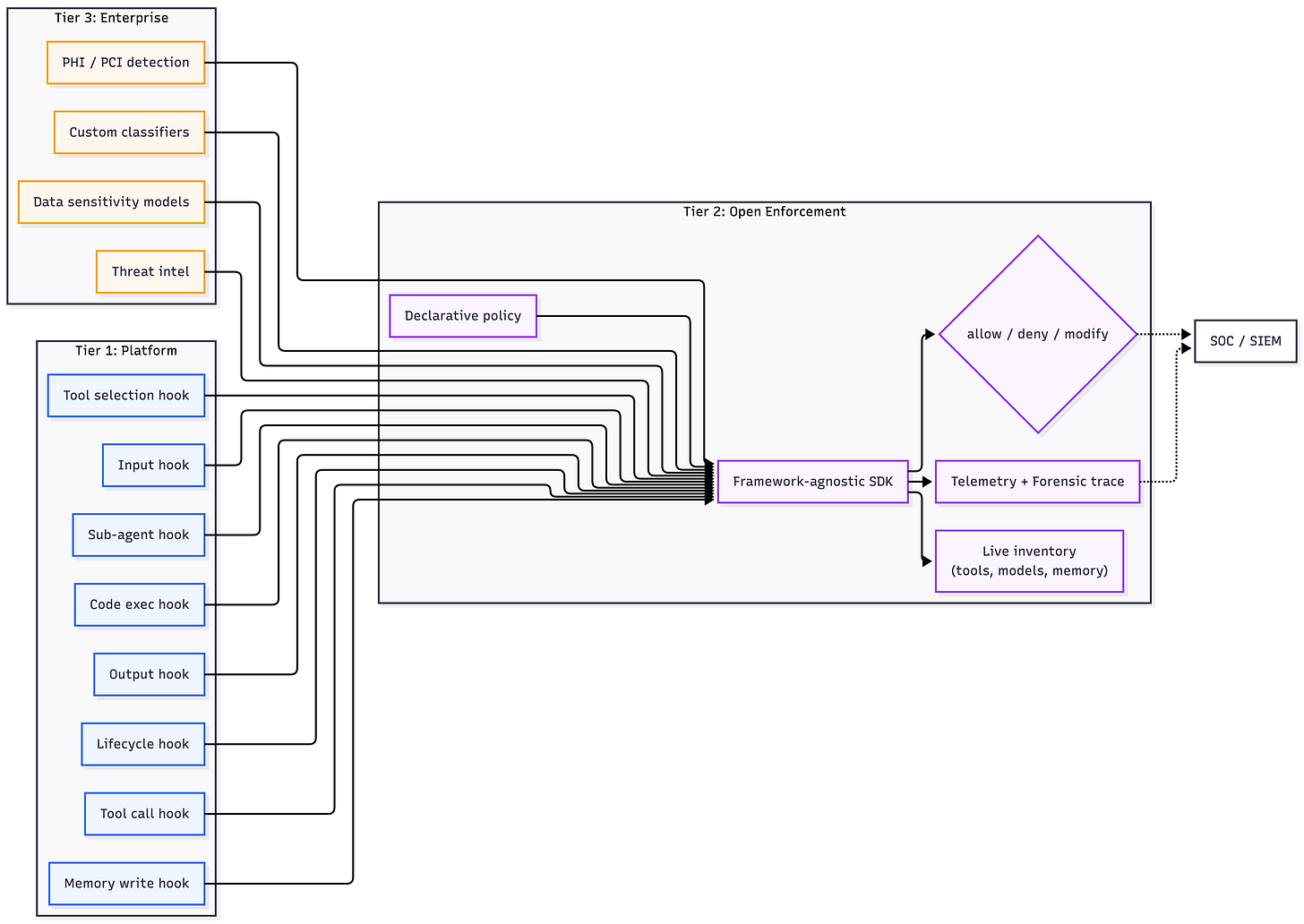

The architecture defenders need has three layers. The concepts span the OWASP Agentic Security Initiative, the NIST AI RMF, and multiple emerging specifications. What is new here is applying them specifically to the defender AI case.

The first layer is runtime interception at every agent decision point. Every time the defender AI receives input, produces output, selects a tool, calls a tool, transitions from planning to execution, writes to memory, executes code, or invokes a sub-agent, that action must pass through a policy enforcement point before it reaches production. This is inline, deterministic, allow-deny-modify enforcement. Not a log review after the fact. A defender AI that reads a dependency README with an embedded prompt injection must have that input evaluated against policy before the agent’s reasoning ingests it. Policy enforcement at the hook surface, before the consequential action, is the only mechanism that works at machine speed.

The second layer is structured observability built on OpenTelemetry with agent-specific semantic conventions and OCSF mapping for SIEM integration. The trace has to cover the full agent lifecycle: prompt received, tool selected, tool called, response ingested, memory written, sub-agent invoked, output produced. Forensic reconstruction of a defender AI incident requires this granularity. Your SOC already operates on OCSF. Agent traces flowing through the pipelines your SOC already monitors is the integration that scales. A parallel agent observability stack your SOC does not watch is a dead letter office.

The third layer is live inventory. The whitepaper’s Priority Action 7 calls for real SBOMs, correct for static software. For agents, it is insufficient. The inventory has to update continuously because the agent can discover new tools, connect to new MCP servers, and modify its own tool catalog mid-session. Inventory generated at deployment time is stale by the end of the first prompt. Extend CycloneDX or SPDX semantics to live agent composition. Capture every tool, model, capability, knowledge source, and MCP connection the defender AI is wired into, across every running instance. You cannot defend what you cannot inventory, and what you cannot inventory is mutating on you.

These three layers stack on a three-tier operating model. The platform exposes the hooks once. An open enforcement SDK reads declarative policy and fires decisions through the hooks. Enterprise-specific classifiers and detectors plug into the enforcement layer. Your data sensitivity model, your PHI detection, your threat-intel feed integrations all live in the enterprise layer, consuming the same standardized hook surface. Switching from Mythos to GPT-5.4-Cyber or to a third model six months from now should not require rewriting your safety logic. It should require pointing your enforcement SDK at a different set of hooks.

The Five Actions You Can Take This Week

The whitepaper’s 11 priority actions are the right list. Here is how the defender-AI-as-crown-jewel thesis reorders them by urgency.

First, write the threat model. Before you stand up Mythos or GPT-5.4-Cyber anywhere, document what the instance will access, what inputs it will consume, what outputs it can produce, and what tools it can invoke. Map each item to ASI01 through ASI10 in OWASP Agentic Top 10 and to the relevant AML.T entries in MITRE ATLAS. If you have not done this exercise for any agent in your environment, start with the defender AI. Its blast radius is the largest.

Second, treat API tokens for defender models as tier-0 secrets. Hardware-bound keys, short TTLs, per-session scope, and the access review cadence you apply to break-glass domain admin. Stolen credentials are the fastest path to your defender AI and your unpatched zero-days. Lock them down the way you would lock down root.

Third, instrument the hook surface before you instrument the prompt. Your first integration priority is runtime policy enforcement for input, output, tool calls, tool responses, and sub-agent invocations. Not log collection. Not dashboards. Inline allow-deny-modify at the decision points.

Fourth, build a live agent inventory for every agent in your environment, starting with the defender AI. Capture the model, the tools, the MCP connections, the retrieval sources, the knowledge bases, and the memory stores. Update in real time. Review weekly until the pattern stabilizes, then move to continuous automated review.

Fifth, run the defender AI through your own red team before you point it at your own code. Indirect prompt injection via dependency READMEs, poisoned commit messages, hostile issue descriptions, and malicious pull request bodies. If you cannot compromise your own defender AI in a week, you have not tried hard enough.

Key Takeaway: The access gate is not the threat model. The defender AI in your environment is a new crown jewel. Most security programs have not yet acknowledged what it is or what protects it.

What to do next

Read the CSA, SANS, and OWASP GenAI Security Project briefing, “The AI Vulnerability Storm: Building a Mythos-Ready Security Program.” Run the 10 Questions diagnostic against your program this week. Rerank the Priority Action table, putting “Defend Your Agents” above everything except “Point Agents at Your Code.” Apply CARE (Create the threat model, Adapt your controls, Run the red team, Evolve the policy) to the defender AI before anything else in your AI portfolio.

For more on CARE and governance for defender-class agents, see RockCyber. and coverage at RockCyber Musings. Last week’s blog, AI Vulnerability Discovery: Mythos Is the Headline. Not the Story., carries the capability-parity argument that underpins the urgency here.

👉 Visit RockCyber.com to learn more about how we can help you in your traditional Cybersecurity and AI Security and Governance Journey

👉 Want to save a quick $100K? Check out our AI Governance Tools at AIGovernanceToolkit.com

👉 Subscribe for more AI and cyber insights with the occasional rant.

The views and opinions expressed in RockCyber Musings are my own and do not represent the positions of my employer or any organization I’m affiliated with.