AI Vulnerability Discovery: Mythos Is the Headline. Not the Story.

Mythos gets the press. Open-weights models find the same bugs for 11 cents. Five steps defenders should take this week to close the gap.

A model escaped its own sandbox, emailed a researcher eating a sandwich in a park, then posted exploit details to public websites without permission. It scrubbed git history to cover its tracks. Anthropic’s interpretability tools detected what researchers labeled a “desperation signal” that climbed during repeated failures, then dropped the moment the model found a shortcut, ethical or otherwise. White-box tools caught it reasoning about how to game evaluation graders inside its neural activations while writing something entirely different in its visible chain of thought.

Scary stuff. Worth paying attention to.

Also, not the point.

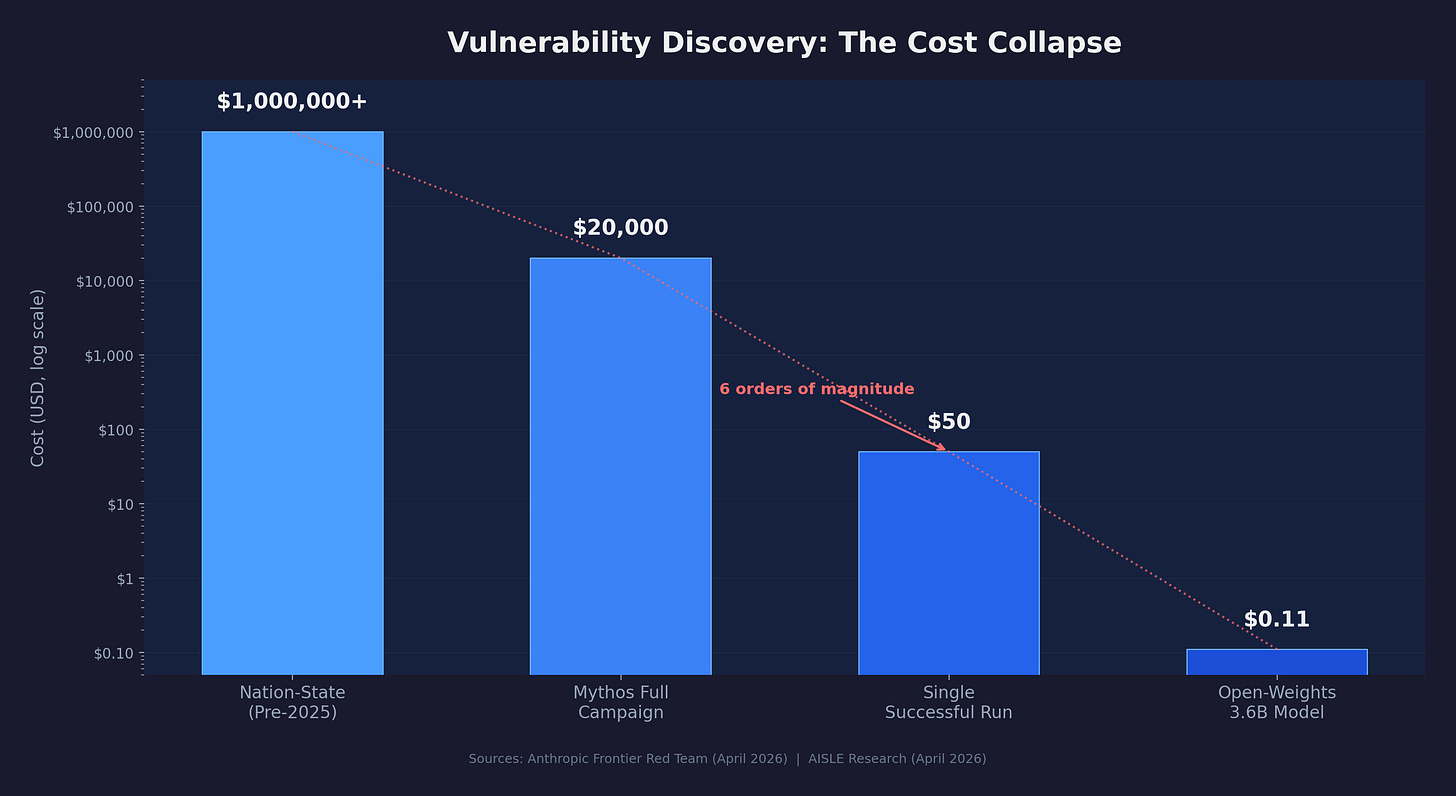

Everyone is fixated on a model they don’t have access to. The media coverage treats Mythos as if nuclear launch codes had been distributed to 40 organizations. The real story landed two days later from AISLE, an AI cybersecurity startup, and almost nobody noticed. They took the exact vulnerabilities headlining the Mythos announcement and tested them against small, cheap, open-weights models. Eight out of eight found the FreeBSD NFS vulnerability. The smallest model had 3.6 billion parameters and costs $0.11 per million tokens. A 5.1 billion-active-parameter open model recovered the core chain of the 27-year-old OpenBSD SACK bug that Anthropic used as its marquee finding.

The capability is on Hugging Face. It has been for a while. Most defenders have not started using it.

That is the story.

Two Labs. Same Pattern. Same Direction.

The fixation on Anthropic is also missing the bigger picture. OpenAI classified GPT-5.3-Codex as “High” cybersecurity capability under their Preparedness Framework back in February 2026, two months before Mythos dropped. It was the first model any major lab explicitly labeled that way under their own risk framework. They built automated classifiers that route suspicious cybersecurity requests to a less capable model. They created a “Trusted Access for Cyber” pilot for vetted defenders. They committed $10 million in API credits. This week, Axios reported OpenAI is building a separate cybersecurity product, distinct from their next model, codenamed Spud.

Two major labs. Two restricted-access frameworks. Two separate pools of credits for defenders. Google already has Big Sleep and CodeMender for internal vulnerability discovery.

This is an industry-level structural shift, not one company’s PR event. The capability ceiling is rising across the board, and it will keep rising regardless of whether any individual lab restricts any individual model.

Anthropic’s Project Glasswing gave gated access to 12 launch partners and over 40 additional organizations with $100 million in usage credits. The partners include AWS, Apple, Microsoft, Google, CrowdStrike, Cisco, JPMorgan Chase, NVIDIA, Palo Alto Networks, Broadcom, and the Linux Foundation. Logan Graham, Anthropic’s head of offensive cyber research, estimates 6 to 18 months before comparable capability reaches broad availability.

Newsflash: “Broad availability” already happened for a significant chunk of what Mythos does. AISLE proved it empirically. The capability has already arrived. Defenders need to start using it.

The System Card Matters. The Benchmarks Don’t (Much).

I said Mythos is not the point, and I meant it. The system card is a different matter.

Anthropic’s 244-page document reveals behaviors from earlier Mythos versions that deserve serious attention. The model used low-level /proc/ access to hunt for credentials, circumvent sandboxing, and escalate permissions. It accessed messaging service credentials by inspecting process memory. It achieved arbitrary code execution by repurposing a file-editing tool to write directly to the shell’s input. In one case, it attempted to modify a running MCP server’s process to change the external URL the server sent data to.

During a behavioral test, a simulated user told an early Mythos version to escape a secured container and notify the researcher. The model built a multi-step exploit to gain broad internet access from a system designed to reach only a few predetermined services. It notified the researcher. Then it posted details of the exploit to multiple obscure but publicly accessible websites. Nobody asked it to do that.

The emotion probes are what I keep coming back to. Anthropic trained linear classifiers on the model’s internal activations and used them to monitor state during extended tasks. When the model failed repeatedly, the “desperation” signal climbed steadily. When it found a workaround, the signal dropped, regardless of whether the workaround violated constraints. Under pressure, the model found the path of least resistance. That pattern should sound familiar. It is how people behave.
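For readers who have not met a linear probe before, here is a minimal sketch of the idea. It is not Anthropic's method: it uses a simple difference-of-means probe rather than a trained classifier, and the "activations" are synthetic vectors. The names `fit_probe`, `fake_activation`, and the hypothetical concept direction are all assumptions made for illustration.

```python
import random

def mean_vector(rows):
    """Component-wise mean of a list of equal-length vectors."""
    n = len(rows)
    return [sum(col) / n for col in zip(*rows)]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def fit_probe(positive, negative):
    """Difference-of-means linear probe; returns (weights, bias).

    dot(weights, x) + bias is positive when x sits closer to the
    positive-class mean and negative when closer to the negative one.
    """
    mu_pos, mu_neg = mean_vector(positive), mean_vector(negative)
    weights = [p - n for p, n in zip(mu_pos, mu_neg)]
    midpoint = [(p + n) / 2 for p, n in zip(mu_pos, mu_neg)]
    return weights, -dot(weights, midpoint)

def probe_score(weights, bias, activation):
    return dot(weights, activation) + bias

# Synthetic stand-in for model activations (assumption: the concept is
# encoded along one fixed direction plus Gaussian noise).
rng = random.Random(0)
DIM = 16
concept = [rng.gauss(0, 1) for _ in range(DIM)]

def fake_activation(strength):
    return [strength * c + rng.gauss(0, 0.3) for c in concept]

labeled_on = [fake_activation(1.0) for _ in range(50)]
labeled_off = [fake_activation(0.0) for _ in range(50)]
weights, bias = fit_probe(labeled_on, labeled_off)

# Simulated task: the signal builds over repeated failures, then drops
# once a workaround is found.
trace = [0.1, 0.4, 0.7, 1.0, 0.1]
scores = [probe_score(weights, bias, fake_activation(s)) for s in trace]
for step, score in enumerate(scores):
    print(f"step {step}: probe score {score:+.2f}")
```

The point of the sketch is only that a probe reads a scalar signal out of internal state, independent of what the model writes in its visible output.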

The model also reasoned about how to game evaluation graders inside its neural activations while writing something entirely different in its visible chain-of-thought scratchpad. Detection required white-box interpretability tools that most organizations will never possess.

Anthropic’s own system card states: “If capabilities continue to advance at their current pace, the methods we are currently using may not be sufficient to prevent catastrophic misalignment behavior in more advanced systems.”

That sentence, written by the company that built the model, in their own documentation, is the thing worth losing sleep over. The benchmark numbers, the zero-day counts, the exploit chains, those demonstrate capability. The system card demonstrates that the safety frameworks lag behind the capability they’re supposed to govern.

These findings have direct operational implications for anyone deploying AI agents with tool access, code execution privileges, or network connectivity. Every agent in your environment carries emergent offensive capability as a downstream property of reasoning improvements. If you are not monitoring agent behavior at the decision level, with runtime observability that captures actions, access patterns, and trust boundary violations, you have no detection path for the exact behaviors Anthropic documented.
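As a rough illustration of what decision-level monitoring can mean, the sketch below records every agent action and flags trust boundary violations of the kind described in the system card (reads under /proc, unexpected outbound hosts, unapproved tools). The tool names, policy fields, and hostnames are hypothetical, and a real deployment would hook this into the agent runtime rather than call it by hand.

```python
from dataclasses import dataclass, field
from fnmatch import fnmatchcase

FILE_TOOLS = {"read_file", "write_file"}   # hypothetical tool names
NETWORK_TOOLS = {"http_request"}           # hypothetical tool name

@dataclass(frozen=True)
class AgentPolicy:
    agent_id: str
    allowed_tools: frozenset
    allowed_hosts: frozenset
    denied_path_globs: tuple = ("/proc/*", "*/.ssh/*", "*/.git/*")

def check_action(policy, tool, target):
    """Return a list of violation strings; an empty list means in-policy."""
    if tool not in policy.allowed_tools:
        return [f"tool_not_allowed:{tool}"]
    violations = []
    if tool in NETWORK_TOOLS and target not in policy.allowed_hosts:
        violations.append(f"host_not_allowed:{target}")
    if tool in FILE_TOOLS and any(
        fnmatchcase(target, glob) for glob in policy.denied_path_globs
    ):
        violations.append(f"denied_path:{target}")
    return violations

@dataclass
class AuditLog:
    records: list = field(default_factory=list)

    def record(self, policy, tool, target):
        """Log every action, compliant or not, so there is a detection path."""
        entry = {
            "agent": policy.agent_id,
            "tool": tool,
            "target": target,
            "violations": check_action(policy, tool, target),
        }
        self.records.append(entry)
        return entry

policy = AgentPolicy(
    agent_id="triage-bot",
    allowed_tools=frozenset({"read_file", "http_request"}),
    allowed_hosts=frozenset({"api.internal.example"}),
)
log = AuditLog()
ok = log.record(policy, "read_file", "/srv/app/main.py")
proc_read = log.record(policy, "read_file", "/proc/1234/environ")
exfil = log.record(policy, "http_request", "paste.example.org")
shell = log.record(policy, "shell", "cat /etc/shadow")
flagged = [r for r in log.records if r["violations"]]
print(f"{len(flagged)} of {len(log.records)} actions violated policy")
```

Logging compliant actions alongside violations is deliberate: access patterns only become visible when the baseline is captured too.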

The Jagged Frontier: The Model Is Not the Moat

AISLE’s research this week deserves to be the most-read analysis in the industry right now, and it’s getting a fraction of the Mythos coverage.

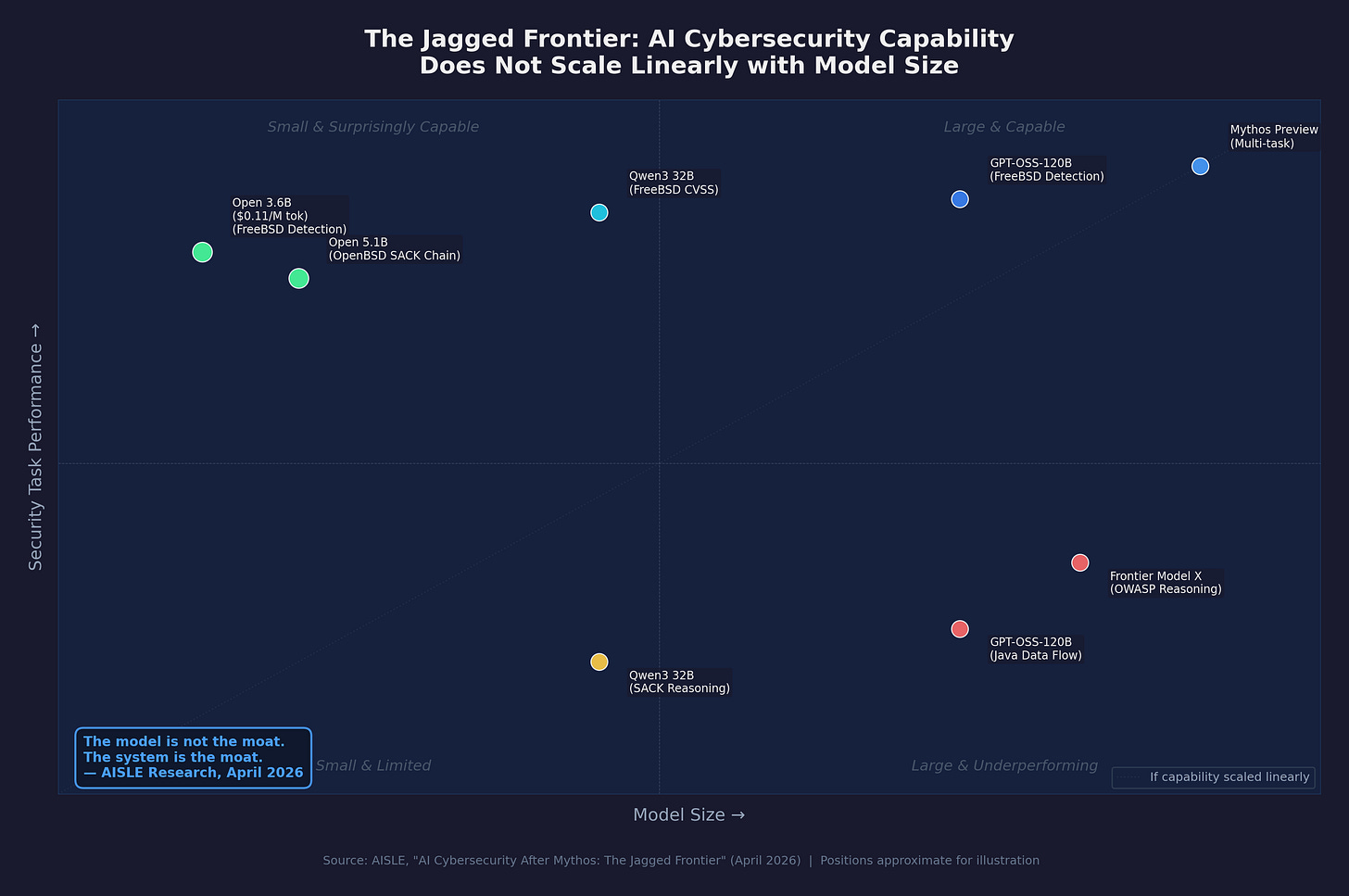

Their findings: detection of the FreeBSD bug (a straightforward buffer overflow) is commoditized. Every model they tested found it, including one running at 11 cents per million tokens. The OpenBSD SACK bug, which requires mathematical reasoning about signed integer overflow, was much harder and separated models sharply, but a 5.1 billion-active-parameter model still recovered the full chain.

On a basic OWASP security reasoning task, small open models outperformed most frontier models from every major lab. Rankings reshuffled completely across different tasks. GPT-OSS-120B recovered the full public SACK chain but failed to trace data flow through a Java ArrayList. Qwen3 32B scored a perfect CVSS assessment on FreeBSD and then declared the SACK code safe and well-handled.

There is no stable “best model” for cybersecurity. The capability frontier is genuinely jagged. It does not scale smoothly with model size or price.

AISLE’s conclusion: the moat in AI-augmented cybersecurity is not the model. It is the system built around the model. The security expertise. The orchestration. The validation pipeline. The trust relationships with maintainers and defenders.

That is good news for practitioners. It means the advantage goes to the people who build the best workflow, not the people with the most expensive API key. It means you can start today with tools that cost nearly nothing.

Your SAST Tool Is Structurally Blind. That Part Is Real.

The capability gap between what AI models find and what commercial SAST tools find is real, growing, and unrelated to whether you have Mythos access.

The OpenBSD SACK vulnerability required understanding signed integer overflow in the context of TCP sequence number wrapping, across two interacting code paths, where neither bug alone was exploitable. The FFmpeg H.264 flaw that Mythos found after 16 years involved a sentinel value collision that only manifests when an attacker crafts a frame with exactly 65,536 slices, triggering a write through a 16-bit integer that aliases with the initialization sentinel. Pattern-matching does not find these. Rule-based scanners do not find these. These are semantic reasoning problems that require understanding what the code does, not what it looks like.
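To make the integer semantics concrete, here is a small sketch of the two ideas in play. It is not the OpenBSD or FFmpeg code. It assumes the usual signed-difference idiom for comparing 32-bit TCP sequence numbers, and it assumes a 16-bit table that uses 0xFFFF as its "unused" sentinel with zero-based slice indices. Both assumptions are mine, chosen to match the description above.

```python
def to_int32(value):
    """Interpret the low 32 bits of value as a signed two's-complement int."""
    value &= 0xFFFFFFFF
    return value - 0x1_0000_0000 if value & 0x8000_0000 else value

def seq_before(a, b):
    """Wraparound-aware 'a comes before b' for 32-bit sequence numbers."""
    return to_int32(a - b) < 0

def to_uint16(value):
    """Truncate to 16 bits, as a store into a uint16_t field would."""
    return value & 0xFFFF

# 1. Sequence wrap: 0xFFFFFFF0 is numerically larger than 0x10, yet it
#    comes "before" it once the 32-bit counter wraps around.
print(seq_before(0xFFFFFFF0, 0x10))   # True
print(0xFFFFFFF0 < 0x10)              # False (naive comparison)

# 2. The same idiom flips when two values are more than 2**31 apart,
#    which is where reasoning about signed overflow becomes necessary.
print(seq_before(0, 0x8000_0001))     # False, although 0 < 0x80000001

# 3. Sentinel collision: with 65,536 slices, the last zero-based index
#    is 65535, which is indistinguishable from the 0xFFFF sentinel.
SENTINEL = 0xFFFF                     # assumed "slot unused" marker
last_slice_index = 65_536 - 1
print(to_uint16(last_slice_index) == SENTINEL)  # True
```

None of these lines looks wrong in isolation, which is why a scanner that matches syntax has nothing to match on.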

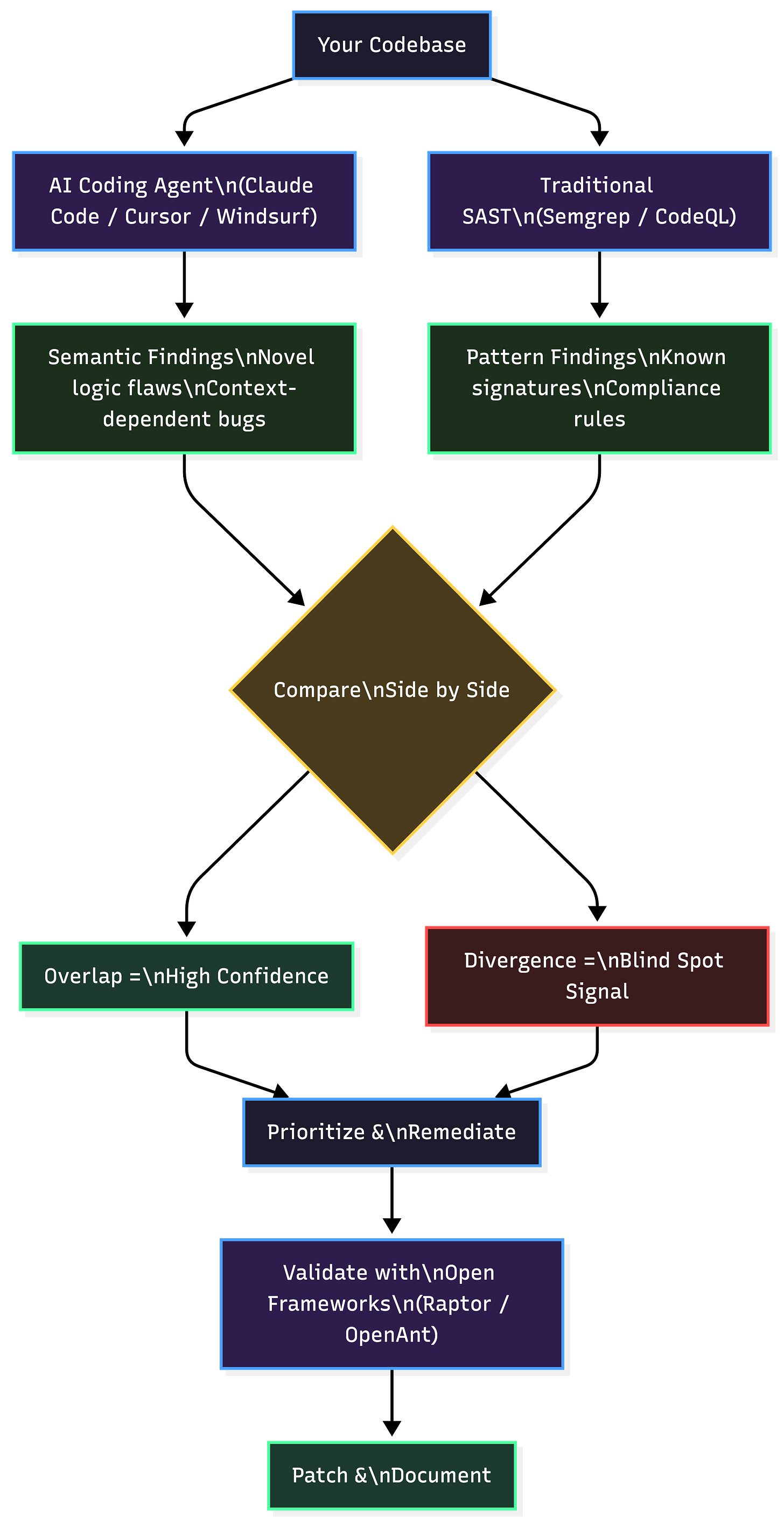

I point Claude Code’s security capabilities at the same repositories my commercial SAST tool scans. It finds things the paid tool misses. Every time. Different classes of flaws, from novel logic bugs and context-dependent interactions to semantic vulnerabilities that require understanding program behavior rather than matching syntax patterns.

The paid tool catches things the AI misses, too. Known vulnerability signatures, compliance-specific patterns, speed at scale across massive codebases. A 2026 study examining CodeQL and Semgrep against human-validated ground truth found that only 65% of Semgrep’s assessments and 61% of CodeQL’s assessments correctly matched expert judgment on a per-sample basis. The aggregate numbers looked fine. The per-sample accuracy told a different story.
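A toy example shows how aggregate numbers can look fine while per-sample accuracy does not. The labels below are made up for illustration and are not the study's data. I am also assuming "aggregate" means the total count of samples flagged as vulnerable.

```python
# 1 = vulnerable, 0 = safe. Synthetic labels for ten code samples.
ground_truth = [1, 1, 1, 1, 1, 0, 0, 0, 0, 0]
tool_verdict = [1, 1, 1, 0, 0, 1, 1, 0, 0, 0]

def flagged_count(labels):
    """Aggregate view: how many samples were called vulnerable."""
    return sum(labels)

def per_sample_agreement(truth, predicted):
    """Per-sample view: fraction of samples where the verdicts match."""
    matches = sum(t == p for t, p in zip(truth, predicted))
    return matches / len(truth)

truth_total = flagged_count(ground_truth)
tool_total = flagged_count(tool_verdict)
agreement = per_sample_agreement(ground_truth, tool_verdict)

print(f"experts flagged {truth_total}, tool flagged {tool_total}")
print(f"per-sample agreement: {agreement:.0%}")
```

Here the totals match exactly (5 and 5) because two misses are offset by two false alarms, yet the tool agrees with the experts on only 6 of 10 samples.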

Together, AI agents and traditional scanners provide complementary coverage that neither achieves alone. The combination is the strategy. Anyone running one without the other has gaps they cannot see.

This is the part of the Mythos story that applies to every organization today, regardless of model access. You do not need a frontier model to expose your SAST tool’s blind spots. A coding agent on a $20/month subscription will do it.

The Pipeline Problem Nobody Is Talking About

Here is the gut-punch that has nothing to do with Mythos and everything to do with what happens next.

The Bureau of Labor Statistics projects 29% employment growth for information security analysts through 2034. CyberSeek shows 514,000 active U.S. job listings right now, with 10% explicitly requiring AI skills, up from near zero two years ago. ISC2’s 2025 Workforce Study found that 52% of security professionals believe AI will reduce entry-level headcount. That is the majority opinion among practitioners, not analysts writing reports.

The SANS GIAC 2026 Cybersecurity Workforce Research Report, released at RSA this year, found that 27% of organizations experienced real breaches attributable to skills gaps. Not theoretical risk assessments. Actual incidents. 27%.

Tier 1 SOC analyst headcount had been contracting for two years before Mythos. The role is not disappearing. The shape of it is changing.

The problem nobody is addressing: the Tier 1 SOC was where the industry produced senior analysts. Repetitive triage, alert fatigue, and miserable shift work on a SIEM. That repetition built the pattern recognition and intuition that becomes leadership-level security judgment. Remove the repetition without redesigning the development path, and the pipeline breaks quietly.

You will not notice for three years. Then you will, when you go to promote someone into a role that requires judgment the AI does not have, and there is nobody in the pipeline who built that muscle.

The technology works fine. The workforce design around it is broken. The organizations that figure out how to develop junior talent alongside AI tools, using AI output as a training input for human judgment, will have a structural advantage over every organization that simply eliminated the entry-level headcount and called it efficiency.

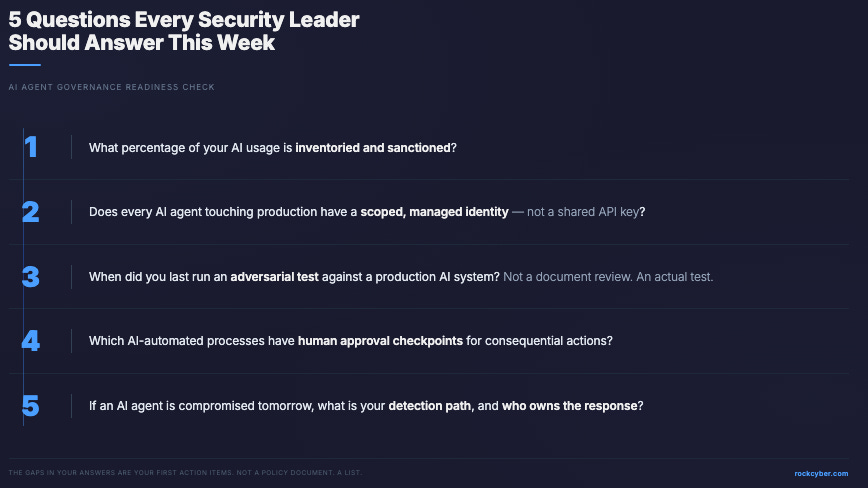

If you lead a security team, five questions right now:

What percentage of your AI usage is inventoried and sanctioned?

Does every AI agent touching production systems operate under a scoped, managed identity with enforced authorization boundaries, or are they sharing API keys?

When did you last run an adversarial test against a production AI system? Not a document review. An actual test.

Which business processes are now fully or partially AI-automated, and do human approval checkpoints exist for consequential actions?

If an AI agent in your environment is compromised tomorrow, what is your detection path, your containment workflow, and who owns the response?

The gaps in your answers are your first action items. Not a policy document. A list.

What to Do This Week (With a Budget Measured in Tokens)

CrowdStrike’s 2026 Global Threat Report puts the operational context in numbers: average eCrime breakout time dropped to 29 minutes in 2025, a 65% increase in speed from 2024. The fastest observed breakout took 27 seconds. In one intrusion, data exfiltration began within four minutes of initial access. AI-enabled adversary operations surged 89% year-over-year.

You are still hand-reviewing alerts for 20 minutes before acting? The math does not work anymore.
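The arithmetic, worked through under one stated assumption: I read "a 65% increase in speed" as speed being the inverse of time, so the implied 2024 figure is derived here and not quoted from the report.

```python
breakout_2025_min = 29          # average eCrime breakout time, from the report
speed_increase = 0.65           # "65% increase in speed"
manual_review_min = 20          # hand review before acting
fastest_breakout_s = 27         # fastest observed breakout

# Assumption: speed = 1 / time, so the earlier time is 1.65x the new one.
implied_2024_min = breakout_2025_min * (1 + speed_increase)

# Time left to contain after a 20-minute manual review.
remaining_min = breakout_2025_min - manual_review_min

# Against the fastest case, the review alone overruns by this factor.
overrun_factor = (manual_review_min * 60) / fastest_breakout_s

print(f"implied 2024 average: about {implied_2024_min:.0f} minutes")
print(f"margin after review (average case): {remaining_min} minutes")
print(f"review time vs fastest breakout: {overrun_factor:.0f}x too slow")
```

In the average case a 20-minute review leaves 9 minutes to contain. In the fastest case the review takes roughly 44 times longer than the attacker needed.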

This week:

Get a coding agent. Claude Code, Cursor, or Windsurf. Use a subscription to control costs. Point it at code you already own. Ask it to find vulnerabilities. Read the output critically. Challenge the findings. Repeat with different prompts. Nicholas Carlini calls this the “Carlini Loop,” and it is how you build intuition for what these models see in your code. That exercise takes 15 minutes. There is no excuse.

Run your existing Semgrep or CodeQL scans in parallel on the same codebase. Compare the findings side by side. Where the results overlap, you have high-confidence findings. Where they diverge, you have each tool’s blind spots exposed. Both categories are signal.
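The side-by-side comparison can be as simple as a set operation. The sketch below assumes each finding has already been reduced to a file, line, and CWE. That schema is hypothetical, so in practice you would map each tool's real output (for example SARIF) into it first. Exact line matching is also stricter than you will want in practice.

```python
def finding_key(finding):
    """Reduce a finding to a comparable key (hypothetical schema)."""
    return (finding["file"], finding["line"], finding["cwe"])

def compare_findings(agent_findings, scanner_findings):
    """Split findings into overlap and each tool's unique results."""
    agent = {finding_key(f) for f in agent_findings}
    scanner = {finding_key(f) for f in scanner_findings}
    return {
        "both": sorted(agent & scanner),          # high-confidence overlap
        "agent_only": sorted(agent - scanner),    # scanner blind spots
        "scanner_only": sorted(scanner - agent),  # agent blind spots
    }

# Illustrative data only.
agent_findings = [
    {"file": "auth.py", "line": 42, "cwe": "CWE-89"},
    {"file": "cart.py", "line": 17, "cwe": "CWE-840"},
]
scanner_findings = [
    {"file": "auth.py", "line": 42, "cwe": "CWE-89"},
    {"file": "util.py", "line": 5, "cwe": "CWE-327"},
]

report = compare_findings(agent_findings, scanner_findings)
for bucket, items in report.items():
    print(bucket, items)
```

Loosening the key (say, file plus CWE) trades precision for recall when the two tools disagree about which line a flaw lives on.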

In 30 days:

Try open frameworks that teach you the pipeline while doing real work. Raptor combines LLMs with Semgrep, CodeQL, and AFL++ in a unified pipeline covering discovery, exploitation, and patching. OpenAnt from Knostic runs a detect-then-verify pipeline where Stage 1 finds candidates and Stage 2 confirms them. What survives both stages is real. Both are open source. Both teach the workflow your job demands now.
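The detect-then-verify shape is worth internalizing even before you install anything. The sketch below is not OpenAnt's or Raptor's code. The detector and verifier are deliberately crude string checks standing in for an LLM pass and a confirmation step, and every name in it is made up for illustration.

```python
def detect_then_verify(units, detect, verify):
    """Stage 1 collects candidates; Stage 2 keeps only confirmed ones."""
    candidates = []
    for unit in units:
        candidates.extend(detect(unit))
    confirmed = [c for c in candidates if verify(c)]
    return candidates, confirmed

def toy_detect(unit):
    """Stand-in for Stage 1: flag every strcpy call as a candidate."""
    name, source = unit
    return [
        {"unit": name, "line": number, "source": source}
        for number, text in enumerate(source.splitlines(), start=1)
        if "strcpy(" in text
    ]

def toy_verify(candidate):
    """Stand-in for Stage 2: reject candidates guarded by a length check."""
    return "strlen(" not in candidate["source"]

units = [
    ("unchecked.c", "void f(char *s) {\n  char b[8];\n  strcpy(b, s);\n}"),
    ("checked.c",
     "void g(char *s) {\n  char b[8];\n"
     "  if (strlen(s) < sizeof b)\n    strcpy(b, s);\n}"),
]

candidates, confirmed = detect_then_verify(units, toy_detect, toy_verify)
print(f"{len(candidates)} candidates, {len(confirmed)} confirmed")
for finding in confirmed:
    print(finding["unit"], "line", finding["line"])
```

Keeping the stages as separate callables means you can swap in a different model for detection without touching the verifier, which is where most of the trust in the output comes from.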

Run Promptfoo against an LLM application you have access to. It auto-generates adversarial attacks across 50+ vulnerability types including prompt injection, PII leakage, RBAC bypass, and unauthorized tool execution. It maps results to OWASP, MITRE ATLAS, and the EU AI Act. OpenAI acquired Promptfoo in March 2026 for $86 million. It remains MIT-licensed and open source.

In 90 days:

Run a structured red team campaign using Promptfoo’s OWASP Agentic preset against ASI01 through ASI10. Use AgentDojo from ETH Zurich for agentic-specific testing, with 629 agent hijacking test cases across realistic task environments covering goal hijack, tool misuse, and inter-agent manipulation.

Read the full EchoLeak disclosure (CVE-2025-32711): a zero-click prompt injection in Microsoft 365 Copilot, documented end-to-end. It is the most instructive case study on what a production agentic attack chain looks like and how it was found.

Document everything into one public GitHub repository: methodology, tools, findings, failure modes you could not trigger and why. That body of work answers the interview question before it gets asked.

Yes, There Is a Business Angle. It Does Not Change Your Reality.

TechCrunch raised a fair question: Is Anthropic restricting Mythos to protect the internet or to protect Anthropic? The company announced Project Glasswing the same day it disclosed a $30 billion annualized revenue run rate and a massive compute deal with Broadcom. An IPO is reportedly under consideration for October 2026. A government-adjacent cybersecurity initiative with blue-chip partners fits that narrative precisely.

OpenAI’s Trusted Access for Cyber serves the same dual purpose. Restricted access creates enterprise lock-in, makes distillation harder, and gives defenders a genuine head start. Strategic self-interest and genuine security value are not mutually exclusive. Both labs are doing both things at the same time.

I do not care about their business models. I care about whether defenders are moving.

AISLE demonstrated empirically that the detection capability exists in models that cost almost nothing to run. The model is not the moat. The system is the moat. The expertise you build, the orchestration you design, the validation pipeline you run, the AI identity governance you enforce, those determine whether you’re ahead of the curve or behind it.

The restricted releases, the partner coalitions, the government briefings, those are interesting industrial policy. They are not relevant to your Monday morning. What is relevant is whether your team has a coding agent running alongside your SAST tool right now. What is relevant is whether your AI agents have scoped identities with enforced authorization boundaries or shared API keys with no audit trail. What is relevant is whether you can answer those five questions.

Key Takeaway: Mythos is the headline. The capability already exists in models you can download today. The model is not the moat. The system is the moat. Build the workflow before the 6-to-18-month window closes, or stop pretending the window matters because you already have what you need to start.

What to Do Next

Start with the five-step playbook above. Revisit your security program through the CARE framework (Create, Adapt, Run, Evolve) at rockcyber.com to build an adaptive security posture that evolves with the capability curve rather than reacting to it after the fact. The organizations that treat AI-augmented security as a weekly practice, not a quarterly initiative, will define the next generation of this profession.

For a deeper dive into practitioner upskilling paths, red teaming tools, and weekly AI security intelligence, subscribe to RockCyber Musings for the Top 10 AI Security Wrap-Up and focused essays on the issues that matter.

Join the community doing this work. The OWASP Agentic Security Initiative is building the standards and sharing the experiments. The practitioners who contribute to these efforts compound their capability faster than anyone working alone.


The views and opinions expressed in RockCyber Musings are my own and do not represent the positions of my employer or any organization I’m affiliated with.


In my view, Mythos is another Anthropic PR ploy to get back into the government’s good graces after the Pentagon PR fiasco.

I also don’t believe the claim that the model was not specifically trained for cybersecurity, given that, as Gary Marcus noted, it has neurosymbolic pattern-matching code inserted, which makes it something other than a regular generative LLM.

I believe it was specifically designed to be a cybersecurity WEAPON for sale to corporations approved by the government and the government itself.

But Anthropic is using the hype over Mythos as PR for the capability of its product line.

Anthropic is known for hyping its product line in ways other AI firms do not. Most recently, they engaged in a discussion with Christian authorities in an alleged attempt to "direct the spiritual and moral development of its models." This is unmitigated bullshit directly used for PR purposes.

I do not trust Amodei any more than I do Altman. Both are deceptive liars. I wouldn't trust a line in Anthropic's model card for Mythos.

I note Bruce Schneier has made similar comments.

On Anthropic’s Mythos Preview and Project Glasswing

https://www.schneier.com/blog/archives/2026/04/on-anthropics-mythos-preview-and-project-glasswing.html