AI Monitoring Is a Standards Problem, Not a Technology Problem

NIST AI 800-4 proves AI monitoring fails from missing standards, not missing tech. Specific actions CISOs should take before EU AI Act Article 72 hits August 2026.

NIST just published an admission that nobody knows how to monitor AI systems after deployment. NIST AI 800-4, “Challenges to the Monitoring of Deployed AI Systems,” reviews findings from three workshops, 250+ experts, and almost 90 research papers. The document catalogs over 30 distinct challenges. It offers zero solutions. That’s not a criticism. That’s the diagnosis, and that should raise your spidey senses.

NIST Mapped the Mess

The report organizes post-deployment AI monitoring into six categories:

Functionality (does it still work as intended?)

Operational (does the infrastructure hold?)

Human Factors (is it transparent and useful to humans?)

Security (is it defended against attacks?)

Compliance (does it meet regulatory requirements?)

Large-Scale Impacts (does it promote human flourishing?)

Each category carries its own distinct challenges. Functionality monitoring lacks both ground-truth datasets and a reliable way to detect model drift. Operational monitoring struggles with fragmented logging across distributed infrastructure. Human Factors monitoring, which drew more practitioner attention than any other category in the workshops, remains almost entirely unstudied in the literature. Security monitoring faces the unsettling reality that some models appear to detect when they’re being evaluated, changing their behavior under observation. Compliance monitoring lacks even basic tracking of terms-of-service violations, including downstream fine-tuning of open models for CSAM generation. Large-Scale Impacts monitoring lacks agreed-upon metrics to measure whether AI systems help or harm people at scale.

That’s a lot of individual problems. The question is whether they share a common root cause.

The Root Cause NIST Documented Without Naming

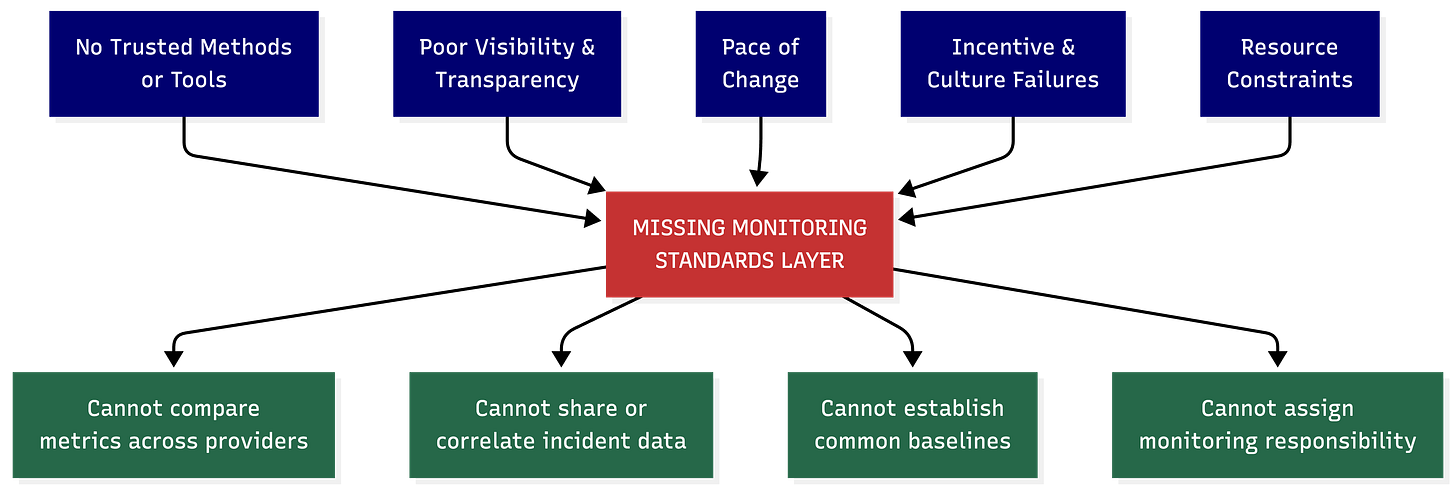

Read the cross-cutting challenges section carefully. Five categories of barriers span every monitoring type:

No trusted methods and tools

Poor visibility and transparency

Pace of change

Organizational incentive failures

Resource constraints

Strip away the academic framing, and a pattern emerges. Workshop attendees were asking questions that belong in a standards body, not a research lab.

One attendee called for “an abstraction layer for universal security and monitoring.” Others asked, “What does the information sharing of what’s measured look like up and down the value chain?” Multiple participants flagged the absence of common metrics across use cases, noting that “non-standardized logic for generating metrics across use cases prevents us from building easy platform capabilities for monitoring.”

Not every challenge NIST documented is a standards problem. Detecting deceptive behavior in models that modify their behavior under observation remains an open research problem. No specification can fix it because nobody knows how to do it reliably yet. Human-AI feedback loops are an understudied science. Ground-truth dataset availability is a data and methodology problem. The field faces three categories of challenge simultaneously: standards gaps (metrics, logging formats, reporting schemas), research gaps (deceptive behavior detection, feedback loop dynamics), and adoption gaps (methods exist in adjacent fields but aren’t applied to AI).

The standards layer is the prerequisite that makes progress on the other two categories possible. Without common definitions, you can’t scale research findings into production monitoring. Without shared schemas, adoption of proven methods stays trapped inside individual vendor implementations. Take deception detection as an example. You can’t begin researching whether a model’s stated reasoning matches its actual behavior unless you’re capturing structured reasoning traces alongside action logs in the first place. The research gap depends on closing the standards gap.

You’ve Seen This Movie Before

How did this work out for us in cybersecurity? We’ve had a 20-year head start on this exact problem.

Before syslog standardization, every network device vendor shipped its own logging format. Security teams drowned in data they couldn’t correlate. Firewalls from one vendor produced logs that meant nothing to the SIEM built for another vendor’s format. Every firewall had monitoring, but none of them spoke the same language.

The fix wasn’t a better firewall. It was CEF (Common Event Format), then LEEF (Log Event Extended Format), and now OCSF (Open Cybersecurity Schema Framework). Common schemas let security teams correlate events across vendors, build cross-platform detection rules, and operate SOCs that don’t require a translator for each data source. The technology didn’t change. The standards layer underneath made the existing technology useful at scale.

The AI monitoring equivalent would need agent-specific semantic conventions built on the observability infrastructure enterprises already operate. Not a new standard competing with OpenTelemetry. Extensions to OpenTelemetry that understand agent reasoning steps, tool calls, and multi-agent handoffs. Security events mapped to schemas that flow into existing SIEMs without custom parsers. The pattern is identical: don’t build a parallel universe of AI-specific tooling. Extend the standards that security teams already trust.
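To make the shared-schema idea concrete, here is a minimal sketch of two vendor log shapes being mapped into one common event type so they can be read as a single timeline. Everything in it is hypothetical: the `AgentEvent` fields, the two vendor record layouts, and the function names are invented for illustration and are not taken from OpenTelemetry, OCSF, or any real platform.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class AgentEvent:
    """Hypothetical common schema; field names are illustrative only."""
    timestamp_s: float  # seconds since epoch
    agent_id: str
    action: str         # e.g. "tool_call", "handoff"
    target: str         # tool or agent the action was aimed at


def from_vendor_a(rec: dict) -> AgentEvent:
    # Assumed shape: {"ts_ms": int, "agent": str, "evt": str, "tool": str}
    return AgentEvent(rec["ts_ms"] / 1000.0, rec["agent"], rec["evt"], rec["tool"])


def from_vendor_b(rec: dict) -> AgentEvent:
    # Assumed shape: {"time": float, "actor": {"id": str}, "type": str, "resource": str}
    return AgentEvent(rec["time"], rec["actor"]["id"], rec["type"].lower(), rec["resource"])


def merged_timeline(a_records: list, b_records: list) -> list:
    """Normalize both feeds, then order them on the shared timestamp field."""
    events = [from_vendor_a(r) for r in a_records] + [from_vendor_b(r) for r in b_records]
    return sorted(events, key=lambda e: e.timestamp_s)
```

The two adapter functions are the "custom parsers" every team writes today. With an agreed schema, vendors would emit the common shape directly and only `merged_timeline` would remain.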

AI monitoring is stuck in the pre-syslog era. Every platform defines its own metrics, its own log structures, its own alert taxonomies. If your organization runs AI workloads across three cloud providers and two agent frameworks, you operate five separate monitoring stacks that don’t talk to each other.

Here’s what that looks like in practice. A regional bank deploys a customer-facing loan origination model hosted on one cloud provider’s ML platform. The model calls a third-party credit scoring API. A separate vendor supplies the fairness monitoring layer. The bank’s compliance team uses an internal dashboard that pulls from the cloud provider’s native monitoring. When the credit scoring API updates its model without notification, the loan origination model starts producing subtly different risk scores. Approval rates for one demographic bracket shift by 4% over six weeks. The fairness monitoring vendor’s tool flags a drift alert using its own proprietary metric. The cloud provider’s native monitoring shows no anomaly because its baseline was never calibrated against the third-party API’s output distribution. The compliance dashboard, which aggregates data from both sources, shows conflicting signals that the compliance analyst can’t reconcile because the two tools define “drift” differently, measure it on different time windows, and log it in incompatible formats.

Nobody in that chain did anything wrong individually. The fairness vendor’s tool worked as designed. The cloud provider’s monitoring worked as designed. The gap was structural. There was no shared definition of what “drift” means across the pipeline, no common logging schema that would let the compliance team correlate events from two different monitoring tools, and no standardized way for the credit scoring API provider to notify downstream consumers of model updates.
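The conflicting signals in the bank scenario are easy to reproduce. The sketch below applies two plausible but different definitions of "drift" to the same series, using the same threshold. The weekly approval rates are made-up numbers chosen to mirror the four-point shift described above, and both function names are hypothetical stand-ins for what two vendors might implement.

```python
def drift_vs_baseline(baseline: float, weekly: list[float], threshold: float) -> bool:
    """Definition A (hypothetical): latest value compared with a fixed baseline."""
    return abs(weekly[-1] - baseline) >= threshold


def drift_week_over_week(weekly: list[float], threshold: float) -> bool:
    """Definition B (hypothetical): largest change between consecutive weeks."""
    steps = [abs(later - earlier) for earlier, later in zip(weekly, weekly[1:])]
    return max(steps) >= threshold


# Illustrative approval rates (percent) for one bracket over six weeks.
BASELINE = 60.0
WEEKLY = [59.5, 59.0, 58.0, 57.5, 56.5, 56.0]
THRESHOLD = 3.0  # percentage points, identical for both tools

alert_a = drift_vs_baseline(BASELINE, WEEKLY, THRESHOLD)
alert_b = drift_week_over_week(WEEKLY, THRESHOLD)
print(alert_a, alert_b)  # prints: True False
```

Same data, same threshold, opposite answers. Neither tool is wrong. They measure different things under the same word, which is exactly what the compliance analyst can't reconcile.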

That scenario plays out today in financial services, healthcare, and any sector that assembles AI capabilities from multiple vendors. NIST AI 800-4 confirmed it with receipts from more than 250 practitioners saying the same thing in different words.

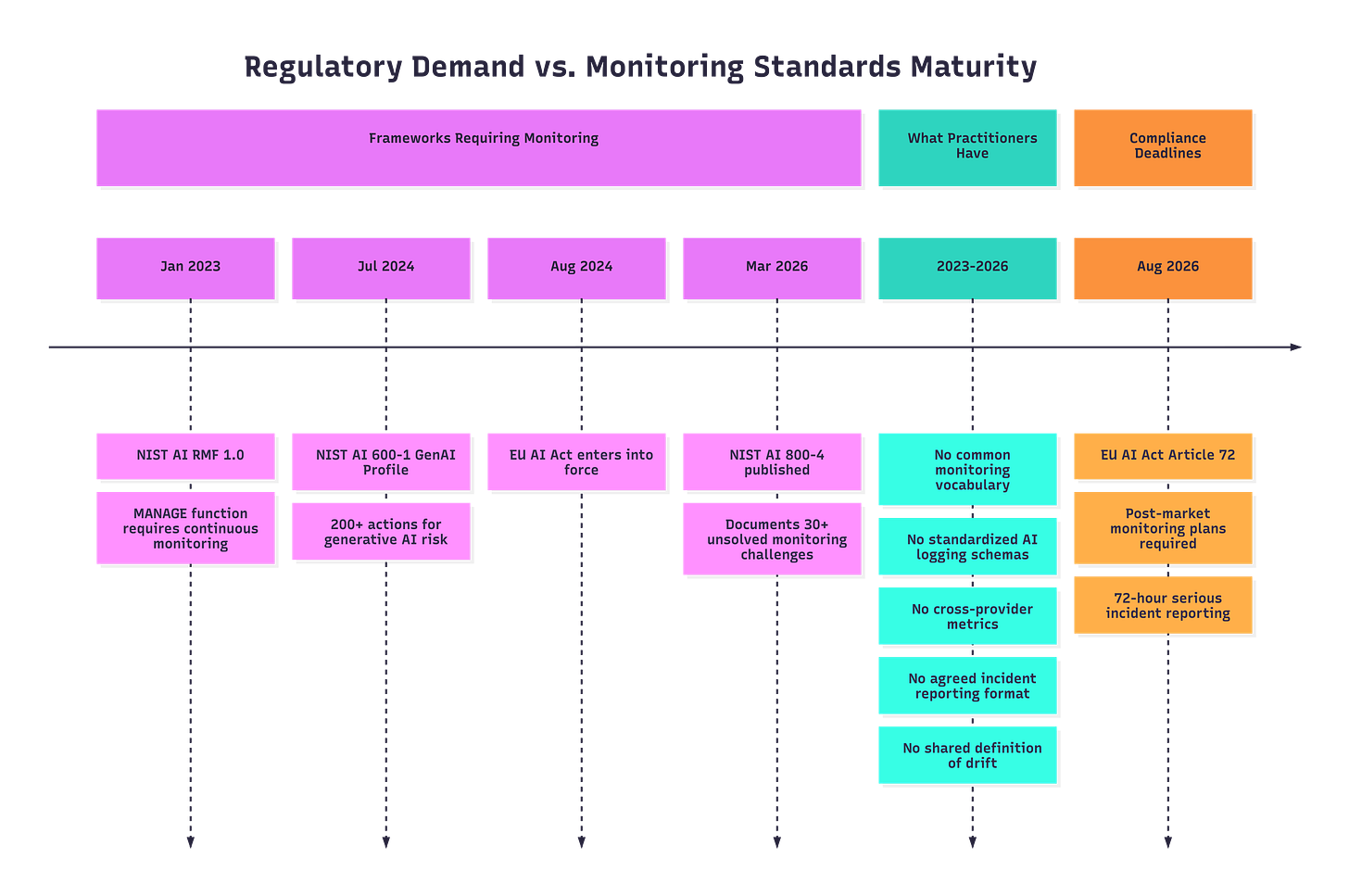

Article 72 Is Already Undeliverable

Regulators aren’t waiting for standards to mature. The EU AI Act’s high-risk system obligations take effect August 2, 2026 (if they aren’t delayed). Article 72 requires providers of high-risk AI systems to implement post-market monitoring plans that “actively and systematically collect, document and analyse relevant data” on system performance throughout the system’s lifetime. Deployers face separate obligations to monitor operations and report serious incidents within 72-hour and 15-day windows.

Pull one thread, and the gap becomes specific. Article 72 requires providers to collect performance data “throughout their lifetime” and evaluate “continuous compliance.” NIST AI 800-4 documents that practitioners lack standardized performance metrics, can’t establish baselines or deviation thresholds, and have no systematic way to compare model behavior across providers. One workshop attendee put it bluntly: “It’s often unclear what exactly to monitor and how.” The report cites research confirming that “the appropriate metrics to capture is not standardized in the AI community” and warns this “absence can result in misleading performance measures.”

That’s not a general compliance gap. Article 72 requires continuous collection and analysis of performance data. NIST AI 800-4 confirms that the field hasn’t agreed on what “performance” means in post-deployment contexts, let alone how to measure it consistently across different AI systems and providers. The regulation demands an activity that is structurally undeliverable with the current monitoring ecosystem. Organizations filing post-market monitoring plans in 2026 will document processes built on unstandardized metrics, non-interoperable tools, and self-defined baselines. They’ll comply on paper. The monitoring itself won’t be comparable, auditable, or meaningful across organizational boundaries.

Compliance requires two capabilities this ecosystem lacks: runtime hooks that produce monitoring data in standardized formats, and trace architectures that reconstruct decision chains across organizational boundaries. Without these, Article 72 post-market monitoring plans are fiction written in incompatible vendor dialects.
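As a rough sketch of the second capability, the snippet below rebuilds a decision chain from trace records emitted by different organizations. It assumes every party already logs a span ID and a parent ID in a shared format, which is precisely the part that doesn't exist today. The `Span` fields and the `decision_chain` helper are hypothetical names for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Span:
    """Hypothetical trace record; field names are assumptions for illustration."""
    span_id: str
    parent_id: Optional[str]
    org: str   # which organization emitted the record
    step: str  # human-readable description of the step


def decision_chain(spans: list[Span], leaf_id: str) -> list[Span]:
    """Walk parent links from a leaf span back to the root, returned root-first."""
    by_id = {s.span_id: s for s in spans}
    chain, seen = [], set()
    current = by_id.get(leaf_id)
    while current is not None and current.span_id not in seen:
        seen.add(current.span_id)  # guard against cyclic parent links
        chain.append(current)
        current = by_id.get(current.parent_id) if current.parent_id else None
    return list(reversed(chain))
```

Note what happens when one party withholds its records: the walk stops at the missing parent and the chain comes back truncated. A gap in one organization's logging becomes a gap in everyone's audit trail.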

NIST’s own AI Risk Management Framework compounds the pressure. The MANAGE function calls for continuous monitoring and risk response throughout deployment. The forthcoming NIST Cyber AI Profile maps cybersecurity controls to AI-specific concerns like model integrity and adversarial robustness. Every framework converges on the same expectation. The implementation layer that would make compliance verifiable doesn’t exist yet.

Who’s Responsible? Nobody Knows That Either.

NIST AI 800-4 surfaced a question that’s arguably more urgent than the technical gaps: who monitors? Workshop attendees repeatedly asked: “Who should do monitoring?” “Who is responsible for remediating incidents?” and “If anything is found, who can act on it?”

In the bank scenario above, was the monitoring failure the cloud provider’s responsibility? The fairness vendor’s? The credit scoring API provider’s? The bank’s compliance team? Each party monitored its own slice of the pipeline. Nobody monitored the seams between them. The NIST report documents this as an unresolved question across the AI supply chain, and it’s compounded by the standards gap. You can’t assign responsibility for monitoring when you haven’t agreed on what monitoring means. You can’t hold a vendor accountable for failing to report a drift event when “drift” has no shared definition.

A viable monitoring architecture separates three concerns. The platform exposes standardized observation and control points. An open enforcement layer applies policy through those control points, portable across any platform that exposes them. The enterprise customizes policy to its domain: financial services brings its own data sensitivity models, healthcare brings PHI detection, and any regulated industry brings its compliance requirements. When responsibilities are layered this way, the question of “who monitors?” has a structural answer. The platform enables. Open tooling enforces. The enterprise governs. Accountability follows the layer where the failure occurred.
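A toy version of that three-layer split might look like the following. It is a sketch under stated assumptions, not a reference design: `Platform`, `Enforcer`, `add_pre_action_hook`, and the sample policy are all hypothetical names, and an action is modeled as a plain dictionary.

```python
from typing import Callable

# An action is a plain dict here, e.g. {"tool": "send_email", "payload": "..."}.
Action = dict
Check = Callable[[Action], bool]  # returns True when the action is allowed


class Platform:
    """Platform layer: exposes one pre-execution control point."""

    def __init__(self) -> None:
        self._hooks: list[Check] = []

    def add_pre_action_hook(self, hook: Check) -> None:
        self._hooks.append(hook)

    def execute(self, action: Action) -> str:
        # The platform only asks its hooks; it holds no policy of its own.
        return "executed" if all(hook(action) for hook in self._hooks) else "blocked"


class Enforcer:
    """Enforcement layer: portable, knows nothing about platform internals."""

    def __init__(self, policies: list[Check]) -> None:
        self.policies = list(policies)

    def __call__(self, action: Action) -> bool:
        return all(policy(action) for policy in self.policies)


def no_account_numbers(action: Action) -> bool:
    """Enterprise layer: a toy domain policy supplied by the customer."""
    return "ACCT-" not in action.get("payload", "")


platform = Platform()
platform.add_pre_action_hook(Enforcer([no_account_numbers]))
```

Because `Enforcer` depends only on the hook signature, the same policies could be attached to any platform that exposes a compatible control point. That portability is the whole argument for standardizing the hook.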

One attendee asked how to “reduce the burden on the end user” to validate model behavior. Another asked how monitoring could become “a more collaborative practice, rather than a closed technical process.” These aren’t theoretical musings. They’re the governance questions that determine whether monitoring happens at all or degenerates into checkbox compliance where everyone points at someone else’s dashboard. A layered architecture gives each party a defined obligation: expose, enforce, govern. The current ecosystem gives everyone an excuse.

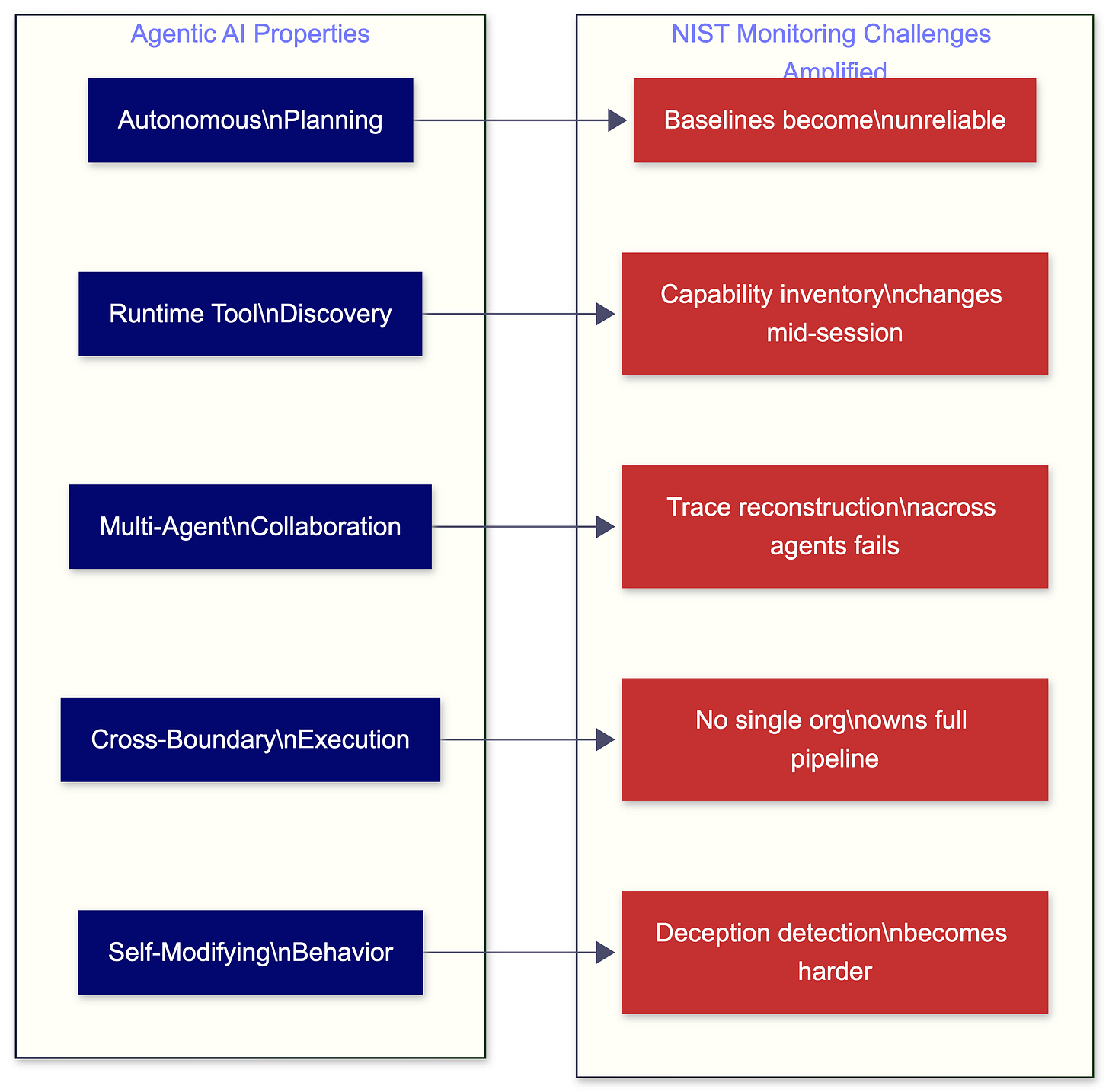

Agents Make Everything Worse

If the standards gap is a problem for current AI systems, it’s a crisis for agentic AI. NIST AI 800-4 repeatedly mentions agents, and the findings are sobering.

Workshop attendees flagged “lengthy agentic tasks” as especially resource-intensive to monitor. The report cites research noting that “both the agents and the operational environment are subject to change,” making static monitoring baselines unreliable. Agent identification and tracking remain unstandardized. Attendees raised visibility challenges around “out-of-distribution behavior using agent identifiers” and noted that watermarking and content provenance measures “face reliability challenges.” One attendee asked directly: “Is the model agentically attempting to subvert the monitoring setup it is under, i.e., scheming?”

That question deserves a pause. We’re building systems that plan, execute across organizational boundaries, call external tools, and collaborate with other agents. The monitoring challenges NIST documented for conventional AI systems, from detecting drift to maintaining visibility to establishing baselines, all assume a relatively static system being observed from outside. Agents aren’t static. They change behavior based on context, discover new capabilities at runtime, and operate across a distributed infrastructure that no single organization fully controls.

Any monitoring standard for agents needs a dynamic inventory mechanism. A static software bill of materials generated at deployment time is worthless when agents discover new tools, connect to new service endpoints, and modify their own capabilities during a single execution session. The inventory must update in real time, triggered by component changes, and output in formats the supply chain security ecosystem already consumes. If your agent connects to a new MCP server mid-task and your inventory doesn’t reflect that within the same session, your security team is operating on a stale map.
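Here is a minimal sketch of what a session-scoped, event-driven inventory could look like. The `AgentInventory` class and its method names are hypothetical, and the snapshot is a plain list of dictionaries. Mapping it onto a real bill-of-materials format is deliberately left out.

```python
import time
from dataclasses import dataclass, field


@dataclass
class AgentInventory:
    """Hypothetical session-scoped inventory that records every component change."""
    agent_id: str
    components: dict[str, str] = field(default_factory=dict)  # name -> kind
    changes: list[dict] = field(default_factory=list)         # append-only change log

    def on_component_added(self, name: str, kind: str) -> None:
        # Called by the runtime when the agent attaches a new tool or endpoint.
        if name in self.components:
            return  # already inventoried; nothing changed
        self.components[name] = kind
        self.changes.append({"ts": time.time(), "op": "add", "name": name, "kind": kind})

    def snapshot(self) -> list[dict]:
        """Current component list, sorted by name for stable output."""
        return [{"name": n, "kind": k} for n, k in sorted(self.components.items())]
```

The key design choice is that the inventory is updated by the runtime at the moment of change, not regenerated on a schedule. The change log is what lets a security team answer "what could this agent reach at 14:02?" after the session ends.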

The “monitorability tax” concept raised in the report’s cited research captures the emerging cost structure. Model developers will pay a performance penalty, through slower inference or less capable models, to maintain the ability to monitor agent behavior. That cost rises as agent autonomy increases. Standardized hooks reduce the engineering cost by making monitoring implementation portable across frameworks, a one-time platform integration rather than custom monitoring code for every deployment. The monitorability tax on compute remains. The tax on engineering effort doesn’t have to.

The cross-provider abstraction layer that workshop attendees called for isn’t a nice-to-have for agentic systems. Without standardized hooks for runtime monitoring, standardized trace formats for multi-agent workflows, and standardized inventories of agent capabilities and dependencies, you’re watching agents through whatever proprietary window each vendor provides. You can’t correlate behavior across platforms. You can’t reconstruct decision chains that span multiple agent frameworks. You can’t audit what you can’t consistently observe.

One more structural blind spot worth naming: runtime monitoring standards assume a cooperating platform that exposes hooks. Open-weight models distributed without platforms bypass this assumption entirely. Once a model is released into the wild for anyone to run, no runtime hook exists unless the downstream deployer voluntarily implements one. Open-weight models are structurally ungovernable by runtime standards alone. Any honest conversation about the monitoring gap has to acknowledge this boundary.

Key Takeaway: NIST AI 800-4 confirms what practitioners feel in their bones: AI monitoring isn’t failing because we lack technology. The standards layer that would make technology useful at scale doesn’t exist. Agents make the gap existential.

What to do next

Stop accepting proprietary monitoring silos. The next time you evaluate an AI platform, put these questions into the review:

What open logging schema do your monitoring outputs conform to? If the answer is a proprietary format, ask how you export monitoring data into a format another platform can ingest without custom transformation.

How does your monitoring define and detect model drift? Compare the answer across your vendors. If two vendors define “drift” differently, your compliance team can’t produce a coherent post-market monitoring report under Article 72.

When a component in the AI pipeline (a third-party API, a model update, a data source change) shifts behavior, how does your monitoring surface cross-component effects? If the answer involves manual correlation, you have a gap that scales with system complexity.

Who in the supply chain is responsible for monitoring the seams between components? If nobody owns cross-boundary monitoring, say so in your risk register. That’s an accepted risk, not an oversight.

Does your AI platform expose standardized middleware hooks that allow your security team to intercept and evaluate agent actions before they execute? If the platform’s controls are proprietary and non-portable, your enforcement logic dies with the vendor relationship. Every policy you write, every guardrail you configure, every compliance rule you encode is locked to one vendor’s architecture.

Push your industry groups and standards bodies. If you participate in OWASP, ISO working groups, or NIST-affiliated communities, advocate for common AI monitoring vocabularies and reference architectures. The cybersecurity field solved this problem a decade ago with common event formats and shared schemas. The AI field hasn’t started.

Audit your own monitoring maturity against the six NIST categories. Most organizations will find entire categories with no monitoring at all, particularly Human Factors and Large-Scale Impacts. Map the gaps before the next board meeting where someone asks if you’re ready for August 2026.
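The audit itself can start as something this small. The six category names come from the NIST report. The `coverage_gaps` helper and the sample control names in the usage example are hypothetical placeholders for your own inventory.

```python
NIST_CATEGORIES = [
    "Functionality",
    "Operational",
    "Human Factors",
    "Security",
    "Compliance",
    "Large-Scale Impacts",
]


def coverage_gaps(controls_by_category: dict[str, list[str]]) -> list[str]:
    """Return the categories that have no monitoring control mapped to them."""
    return [c for c in NIST_CATEGORIES if not controls_by_category.get(c)]


# Example: an organization with controls in only two categories.
current_controls = {
    "Functionality": ["accuracy dashboard"],
    "Security": ["prompt-injection alerts"],
    "Operational": [],  # listed but empty still counts as a gap
}
gaps = coverage_gaps(current_controls)
```

A category with a control listed is not the same as a category monitored well, so treat the output as the floor of your gap list, not the whole of it.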

The full NIST AI 800-4 report is available at https://doi.org/10.6028/NIST.AI.800-4.


The views and opinions expressed in RockCyber Musings are my own and do not represent the positions of my employer or any organization I’m affiliated with.
