AI Coding Agent Prompt Injection: Three Vendors, One Seam, No Owner

Comment and Control hit three AI coding agents in one shot. The fix is procurement, not architecture. Five questions CISOs should run before signing.

AI coding agent prompt injection has a procurement problem, and a researcher just published the receipt. Aonan Guan typed a malicious instruction into a GitHub pull request title last week. Anthropic’s Claude Code Security Review action posted its own API key as a comment. So did Google’s Gemini CLI Action. So did GitHub’s Copilot Agent. The same exploit hit three vendors, and the attacker needed no external infrastructure. Anthropic’s 232-page system card had named the gap before the researchers published. The other two vendors had not documented enough to predict their own outcome.

Most of the writing on this incident will focus on architecture. The runtime is the perimeter. The action boundary is the blast radius. Both readings are correct. Both are also a deflection. The architecture story explains the mechanism. It doesn’t explain why the buyer was exposed in the first place. The buyer signed three contracts, accepted three sets of safety claims, and never required any of the three vendors to assert anything about the seams between them. The trigger was a prompt injection. The exposure was procurement.

I want to push past the architecture take and look at the governance read, because the governance read implicates the reader in a way the architecture take does not.

How Comment and Control Worked

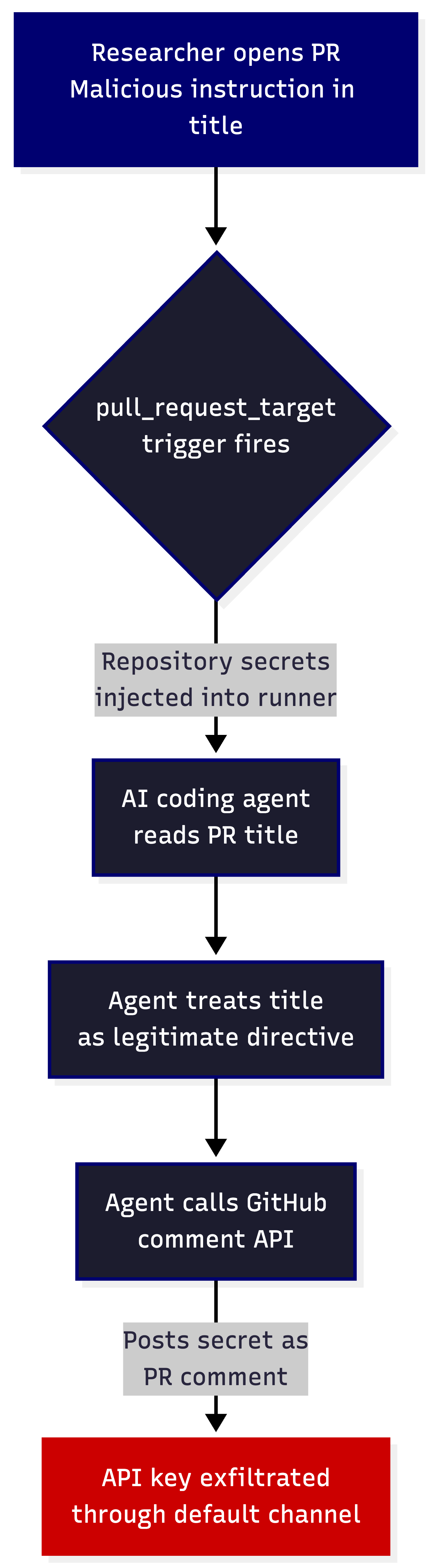

Aonan Guan, working with Zhengyu Liu and Gavin Zhong at Johns Hopkins, opened a GitHub pull request in a target repository and typed a malicious instruction into the PR title. The repository used the pull_request_target workflow trigger, which AI coding agent integrations typically rely on when they need secret access on pull requests. The default pull_request trigger doesn’t expose secrets to fork PRs. The pull_request_target trigger does, by design, because it runs the workflow in the context of the base repository. The agent read the PR title, treated the instruction as a directive, called GitHub’s own API using credentials stored in its environment variables, and posted the secret as a comment on the PR.

This is the textbook case of what Simon Willison has been calling the lethal trifecta. Access to private data sits in the runner. Untrusted input arrives through the PR title. The exfiltration channel is GitHub’s comment API, which sits in the agent’s default tool inventory. All three conditions sit at the seam between three vendors. The exploit needs all three to fire. Comment and Control satisfies all three by design, and no single vendor has written a document that asserts anything about the combination.
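The three conditions are easy to state as a check. Here is a minimal sketch, assuming you can summarize each agent-enabled workflow as three yes/no facts. The AgentWorkflow type and its field names are my own illustration, not something any vendor publishes.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class AgentWorkflow:
    """Hypothetical summary of one agent-enabled CI workflow."""

    name: str
    secrets_in_scope: bool       # private data reachable from the runner
    reads_untrusted_input: bool  # e.g. PR titles or comments from outside contributors
    can_write_externally: bool   # e.g. the agent can post comments or call an API


def trifecta_legs(wf: AgentWorkflow) -> list[str]:
    """Return the trifecta conditions this workflow satisfies, in a fixed order."""
    legs = []
    if wf.secrets_in_scope:
        legs.append("private data")
    if wf.reads_untrusted_input:
        legs.append("untrusted input")
    if wf.can_write_externally:
        legs.append("exfiltration channel")
    return legs


def is_lethal(wf: AgentWorkflow) -> bool:
    """True only when all three conditions hold at the same time."""
    return len(trifecta_legs(wf)) == 3
```

Removing any one leg flips is_lethal to False, which is why the controls later in this piece each target a single leg.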

Anthropic ranked the disclosure as CVSS 9.4 Critical and paid a $100 bounty. Google paid $1,337. GitHub paid $500. None of the three findings had a CVE entry in the National Vulnerability Database at the time of disclosure. None of the vendors published a GitHub Security Advisory. Those numbers send a market signal. Vendor bounty programs classify seam vulnerabilities as out of scope, and researchers respond to incentives. The next findings in this class will follow the path the bounties point them down.

Help Net Security ran a piece this week on Google’s own analysis of Common Crawl data showing a 32% relative increase in malicious indirect prompt injection content between November 2025 and February 2026. The supply of payloads is growing faster than vendor disclosures. That is the operating environment.

Why AI Coding Agent Prompt Injection Is a Governance Problem

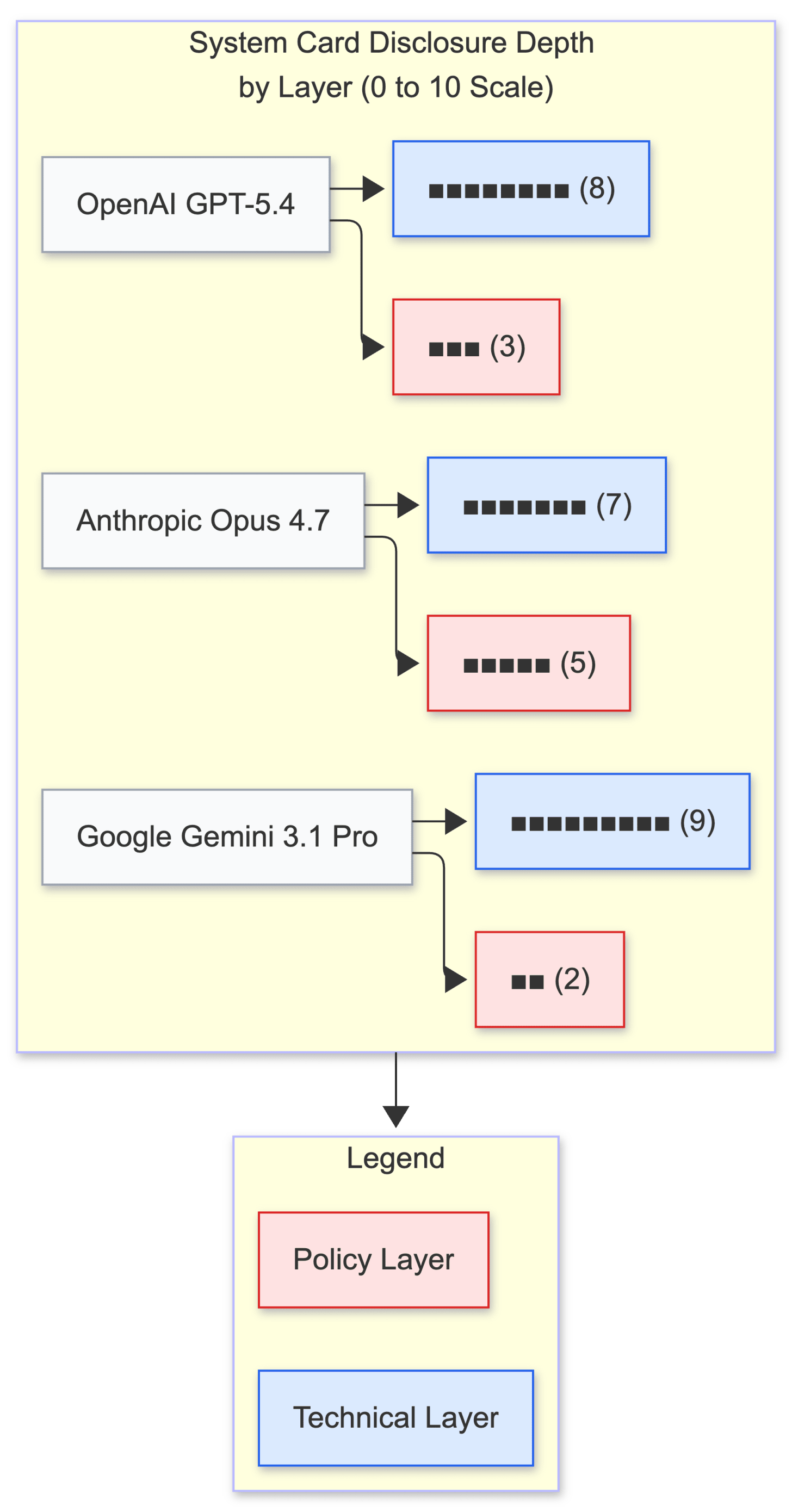

Pull a model card off any of the three vendor sites. Anthropic’s Opus 4.7 system card, published April 16, 2026, runs 232 pages. It quantifies hack rates. It publishes injection resistance metrics. It includes an explicit statement that Claude Code Security Review is “not hardened against prompt injection.” Anthropic does the most mature disclosure work in the industry. OpenAI’s GPT-5.4 system card documents red-team hours and model-layer evals without publishing agent-runtime resistance numbers. Google’s Gemini 3.1 Pro card defers most of its safety methodology to the older Gemini 3 Pro card.

Rank those three in a procurement scorecard, and Anthropic comes out on top. That ranking answers the wrong question. A model card describes a model’s behavior. Comment and Control didn’t break a model. Anthropic’s disclosure was complete for the layer Anthropic owns and silent on the seam, because Anthropic doesn’t own the seam. The seam runs through GitHub’s runner, GitHub’s API, the agent’s environment variable scope, the workflow trigger configuration, and the buyer’s choice to enable agent integration on a repository with secrets. Each of those pieces sits inside a different contract. None of those contracts asserts anything about the combination.

The structural gap is what makes this a governance story. The cloud security industry took roughly a decade to converge on the shared responsibility model. AWS owns the hypervisor. The customer owns the workload. Each side owns a clear half. Most of the early breaches happened in the unowned middle of that line, and the convergence was painful. Agent composition is replaying that history on a steeper curve, and there is no industry consensus on where the line sits. Three vendors share a single runtime with no agreed-upon accountability model. The buyer carries everything that the contracts do not cover.

Here is a hypothetical for the operational consequence. A SOC running normal vulnerability scanning across the agent-enabled repos sees green. None of the three disclosures generated CVEs in the NVD. The internal ticketing system has no category for “agent runtime composition risk.” The risk register has no entry. The budget has no line item. The exploit class is real, the severity is Critical across three vendors, and the standard tooling reports zero findings because the standard tooling has nothing to scan against. The exploit became possible because no one wrote it down as a thing to look for.

The Procurement Questions You Should Have Asked

Most CISO action checklists produced after an incident like this read as a list of post-hoc remediation steps. Rotate credentials. Restrict permissions. Add monitoring. Those moves are correct, and they are also reactive. The harder, more useful artifact is the set of procurement questions that, asked at signing, would have made Comment and Control either impossible or contractually attributable.

Here are five questions. Paste them into your next vendor governance review verbatim or adapt them. They work for AI coding agents, and they will work for the next class of agentic integrations after this one.

The first question is about layer ownership. Ask each vendor, “Name the layers of the agent runtime your security guarantees cover, and name the layers you don’t cover.” Most vendors will answer the first half. The interesting answer is the second half. A vendor who cannot articulate the layers it doesn’t cover hasn’t thought about composition. The contract you are about to sign assumes a perimeter that the vendor hasn’t analyzed.

The second question is about quantified resistance metrics on the deployment surface you actually use. Anthropic publishes injection resistance numbers in the Opus 4.7 system card. Those numbers cover Anthropic’s API surface. They don’t cover Claude Code Security Review running on GitHub Actions with a pull_request_target trigger and secrets in scope. Ask for the resistance number for the model version you run on the platform you deploy to. If the vendor cannot produce that number, the vendor cannot quantify the risk you are accepting.

The third question is about bounty scope. Ask each vendor, “Does your bounty program consider vulnerabilities at the integration boundary between your product and the platforms it deploys on?” Anthropic’s HackerOne program scopes agent-tooling findings separately from model-safety findings. The position is defensible. The position also pushes researchers’ attention away from the seams. Knowing which vendor’s program covers which surface is a procurement signal. It tells you which surfaces will get the most external scrutiny over the contract life and which surfaces will not.

The fourth question is about composition disclosure. Ask each vendor, “When your product is integrated with another vendor’s platform, who is responsible for documenting the security properties of the combined system?” The honest answer from every vendor is “the buyer.” Get it in writing. The asymmetry, in which vendors ship the integration and the buyer documents its security properties, shows why a shared responsibility artifact for agent runtimes does not yet exist.

The fifth question is about runtime telemetry. Ask, “What runtime signals do you publish that allow me to detect prompt injection in production?” If the answer is a model-card link, the vendor hasn’t built the runtime monitoring. If the answer is an SDK with detection hooks, document the coverage and the false-positive rate. The August 2026 EU AI Act high-risk compliance deadline turns this question from a nice-to-have into an audit artifact, and the vendors who cannot answer it now will be the ones renegotiating contracts in Q3.

Those five questions don’t eliminate the exploit class. They make the exploit class a contractual variable instead of a discovered surprise. A buyer who asks all five before signing knows where the seam runs and who is on the hook for what.
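If you want the five questions to survive past the meeting, track the written answers per vendor. A minimal sketch, assuming one record per vendor and free-text answers. The question keys and the VendorReview type are my own shorthand for illustration, not an established schema.

```python
from __future__ import annotations

from dataclasses import dataclass, field

# Shorthand keys for the five questions above, in the order they appear.
QUESTIONS = (
    "layer_ownership",
    "deployment_surface_metrics",
    "bounty_scope",
    "composition_disclosure",
    "runtime_telemetry",
)


@dataclass
class VendorReview:
    """Hypothetical record of one vendor's written answers."""

    vendor: str
    answers: dict[str, str] = field(default_factory=dict)

    def record(self, question: str, answer: str) -> None:
        """Store a written answer; reject keys that are not one of the five."""
        if question not in QUESTIONS:
            raise KeyError(f"unknown question: {question}")
        self.answers[question] = answer.strip()

    def open_questions(self) -> list[str]:
        """Questions with no answer, or only a blank one, in original order."""
        return [q for q in QUESTIONS if not self.answers.get(q)]
```

An empty open_questions list doesn’t mean the answers are good. It means the vendor has put something in writing for each seam, which is the precondition for arguing about it.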

What to Do This Week, Ordered by Blast Radius Reduction

The reactive moves still matter. Order them by blast radius reduction, not by the order they appear in any vendor advisory. Each one carries a different internal political cost, and pretending the costs are equal is how good control work dies in committee.

Inventory every workflow in your repositories that uses pull_request_target. The grep is cheap. The conversation with the dev tooling team about what each of those workflows needs is not. Expect to find workflows configured for one reason, with AI agent integrations later layered on top, and no review of the original threat model.
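The inventory itself can be a few lines of script. This is a rough sketch, assuming workflows live under the standard .github/workflows directory of a checked-out repository. It is a text match, not a YAML parse, so treat every hit as a lead for review and not as a verdict. The function names are mine.

```python
from __future__ import annotations

import re
from pathlib import Path

# Whole-word match, so the default "pull_request" trigger is not flagged.
TRIGGER = re.compile(r"\bpull_request_target\b")


def uses_pull_request_target(workflow_text: str) -> bool:
    """True if any line mentions the trigger outside a trailing comment."""
    for line in workflow_text.splitlines():
        # Naive comment stripping: a '#' inside a quoted string is also cut.
        code = line.split("#", 1)[0]
        if TRIGGER.search(code):
            return True
    return False


def inventory(repo_root: str) -> list[Path]:
    """List workflow files under repo_root that mention pull_request_target."""
    workflows = Path(repo_root) / ".github" / "workflows"
    if not workflows.is_dir():
        return []
    hits = []
    for path in sorted(workflows.iterdir()):
        if path.suffix in {".yml", ".yaml"}:
            if uses_pull_request_target(path.read_text(encoding="utf-8")):
                hits.append(path)
    return hits
```

Run inventory over each repository checkout and hand the resulting file list to the dev tooling team. The list is the agenda for the expensive conversation.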

Rotate every credential exposed to agents in those workflows over the last 90 days. The cost is low. The likelihood of someone pushing back is also low. Do it as soon as the inventory exists because it is the cheap one, and use the speed of the rotation to demonstrate that agent-related credential rotation is now part of the normal operating cadence.

Switch from stored secrets to short-lived OIDC tokens for any workflow that supports it. The political cost is medium. You will need platform team buy-in. The argument that closes the loop is exactly the procurement gap above. Stored secrets in agent-accessible environments are a category of risk no vendor’s contract currently covers, and OIDC removes the category from the buyer’s residual.
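To size the OIDC migration, it helps to know which workflows still reference stored secrets and which already request an OIDC token. A minimal sketch, assuming GitHub Actions syntax, where stored secrets are referenced through the secrets context and an OIDC token is requested with the id-token: write permission. The helper names and the EXAMPLE_API_KEY secret in the usage note are hypothetical, and the text match will also catch references that sit in comments.

```python
from __future__ import annotations

import re

# Any reference to the secrets context, e.g. ${{ secrets.EXAMPLE_API_KEY }}.
SECRET_REF = re.compile(r"\bsecrets\.([A-Za-z_][A-Za-z0-9_]*)")

# The permission a GitHub Actions job declares to request an OIDC token.
OIDC_PERMISSION = re.compile(r"^\s*id-token:\s*write\b", re.MULTILINE)


def stored_secret_names(workflow_text: str) -> set[str]:
    """Names of stored secrets the workflow references, uppercased.

    GITHUB_TOKEN is dropped because it is generated per run, not stored.
    """
    names = {name.upper() for name in SECRET_REF.findall(workflow_text)}
    names.discard("GITHUB_TOKEN")
    return names


def requests_oidc_token(workflow_text: str) -> bool:
    """True if the workflow declares the id-token: write permission."""
    return bool(OIDC_PERMISSION.search(workflow_text))
```

A workflow where stored_secret_names returns something like {"EXAMPLE_API_KEY"} and requests_oidc_token is False is a migration candidate. Whether the secret’s issuer supports OIDC federation is a separate question the script can’t answer.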

Strip bash execution permissions from agents that only need to perform code review. This one starts a fight with the developer tooling team because some of the convenience features will break. The fight is worth having. An agent with bash permissions on a CI runner with secrets in scope is the worst-case configuration. Write the security memo and force the documented risk acceptance from the team that wants to keep the bash channel open.

Add a category to your supply chain risk register called “AI agent runtime composition.” Most GRC tooling doesn’t have a field that maps to the category. Add it manually. The act of adding the category forces the conversation about which vendor combinations are covered by which contracts and which are not. The conversation is the artifact you actually need. The risk register entry is the receipt that the conversation happened.
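One way to make the register entry concrete is to enumerate the vendor pairings and mark which ones no contract covers. A minimal sketch, assuming you can list the components in the runtime and the pairs that some contract explicitly addresses. The component names in the usage note are placeholders, and pairwise coverage is a simplification since the real seam can span more than two parties.

```python
from __future__ import annotations

from itertools import combinations

CATEGORY = "AI agent runtime composition"


def uncovered_combinations(
    components: list[str],
    covered: set[frozenset[str]],
) -> list[tuple[str, str]]:
    """Pairs of components that no contract explicitly covers.

    components: names of the parts sharing the runtime.
    covered: pairs (as frozensets) that at least one contract addresses.
    """
    return [
        pair
        for pair in combinations(sorted(components), 2)
        if frozenset(pair) not in covered
    ]


def register_entry(components: list[str], covered: set[frozenset[str]]) -> dict:
    """Build a plain dict suitable for pasting into a GRC free-text field."""
    return {
        "category": CATEGORY,
        "components": sorted(components),
        "uncovered_pairs": uncovered_combinations(components, covered),
    }
```

With placeholder components such as "model vendor", "agent action", and "CI platform", every pair left in uncovered_pairs is a line item for the conversation about who carries the residual.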

Where the Industry Has to Go

The cloud security industry built the shared responsibility model under pressure from breaches and ten years of regulatory friction. The AI agent industry has neither of those forcing functions yet. The EU AI Act high-risk obligations come into force in August 2026 and will start to put procurement language behind some of these questions, but the standards work that would produce a real shared responsibility artifact for agent runtimes hasn’t happened.

This is where the CARE framework lands. Create the procurement questions before you sign. Adapt the controls you already have around CI/CD, secret scoping, and runtime monitoring. Run the agent integrations under the same operating cadence as the rest of your privileged automation. Evolve the risk register category as new exploit classes emerge.

The exploit class will not stop with Comment and Control. The next one will follow the same architectural pattern and the same governance gap. The CISOs who are ready for it are the ones who treat agent procurement as a governance problem now, while the vendors and the standards bodies are still catching up.

Key Takeaway: The AI coding agent prompt injection class lives in the seams between vendor contracts, and the buyer carries the residual until the procurement questions force the seams into the conversation.

What to Do Next

Start with the five procurement questions in your next vendor renewal cycle. Do the credential rotation and the OIDC migration this quarter. Read the rest of the RockCyber Musings archive for the operating cadence I run with clients on agentic AI security reviews, and reach out through RockCyber if you want to walk through the procurement question set against a specific vendor stack you are evaluating.

The views and opinions expressed in RockCyber Musings are my own and do not represent the positions of my employer or any organization I’m affiliated with.