Agentic AI Governance: Singapore Built the Skeleton, Not the Immune System

Singapore's agentic AI governance framework is a global first. It also has three critical gaps that create false confidence for CISOs. Here's what to fix.

Singapore’s Model AI Governance Framework for Agentic AI landed on January 22, 2026, and it’s the first national-level governance framework purpose-built for autonomous AI agents. That matters. It also tells you what to think about without telling you what to do. And in three critical areas, what it tells you is incomplete enough to create false confidence. If you’re a CISO using this as your agentic AI governance blueprint, you need to know where the gaps will bite you.

What Singapore gets right

Credit where earned. IMDA produced something useful, and I want to be specific about why.

The four-dimension structure (assess and bound risks, make humans accountable, implement technical controls, enable end-user responsibility) gives organizations a governance skeleton that maps cleanly to how enterprises already think about risk management. You can hand this to a board member, and they’ll recognize the logic. That’s not nothing. Most governance frameworks read like they were written for compliance analysts who’ve never shipped a product.

The risk-factor rubric in §2.1.1 is the framework’s strongest operational contribution. It breaks risk into impact factors (domain tolerance for error, access to sensitive data, access to external systems, scope of agent actions) and likelihood factors (level of autonomy, task complexity, exposure to untrusted data). This gives you a concrete rubric for evaluating whether a use case is appropriate for agent deployment. Not theoretical. Practical. The kind of table a security architect can pull into a risk register tomorrow morning.

The tradecraft preservation warning in §2.4.3 is rare for a governance document and directly relevant to every security leader reading this. The framework warns that “as agents take over entry-level tasks, which typically serve as the training ground for new staff, this could lead to loss of basic operational knowledge.” If you run a security team where AI coding assistants are displacing junior analyst skill development, this section just validated a concern you’ve probably struggled to articulate to leadership. IMDA recommends organizations “identify core capabilities of each job and provide sufficient training and work exposure so that users retain foundational skills.” I’d frame it more bluntly: if your junior analysts can’t triage an alert without an AI assistant, you don’t have a security team. You have a dependency.

The graduated rollout guidance in §2.3.3 recommends controlling agent deployment based on three vectors: users (trained users first), tools (whitelisted MCP servers first), and systems (lower-risk internal systems first). This is how production deployments should work. It reflects real operational experience rather than theoretical deployment models.

So yes, Singapore did something that no other nation has done. They put agentic AI governance in writing, at a national level, with enough specificity to be useful. Now let me explain why “useful” isn’t the same as “sufficient.”

HITL at agentic scale is security theater

The framework’s central accountability mechanism is human-in-the-loop oversight at “significant checkpoints” (§2.2.2, p.16). Singapore correctly identifies automation bias as “a bigger concern with increasingly capable agents” (p. 13). The framework recommends training humans to identify failure modes, auditing oversight effectiveness, and complementing human review with automated monitoring.

All reasonable. All insufficient.

The math doesn’t work. Let me show you.

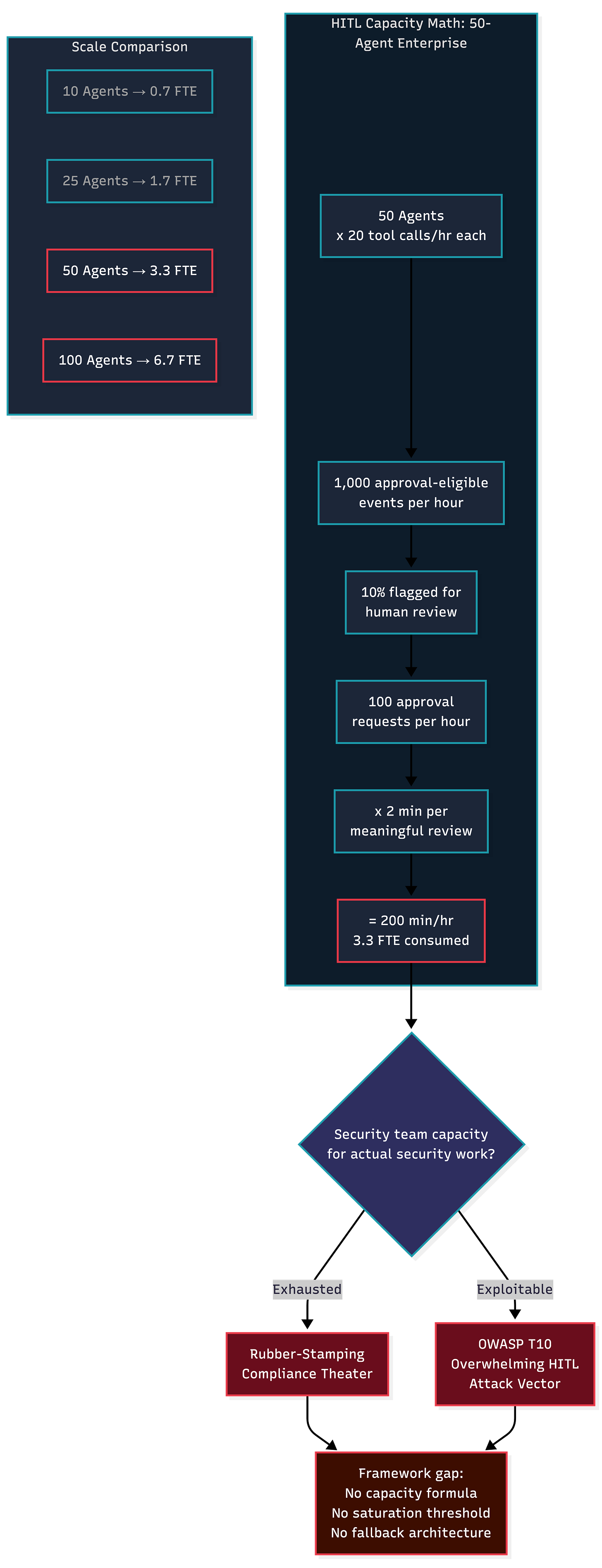

Take a mid-size enterprise running 50 agents across customer service, code review, procurement, and data analysis. Each agent makes 20 tool calls per hour. That produces 1,000 approval-eligible events per hour. Even if only 10% require human review, your security team faces 100 approval requests per hour during business operations. At two minutes per meaningful review, that consumes over three full-time equivalents solely for agent oversight. Not security work. Not threat hunting. Not incident response. Rubber-stamping.

The framework provides no guidance on this capacity calculation. No threshold for when human review becomes performative. No architectural alternative for when HITL saturates.

The OWASP Agentic AI Threats and Mitigations Guide classifies Overwhelming HITL (T10) as a deliberate attack vector. Adversaries can exploit the approval bottleneck by generating a flood of low-risk requests that train reviewers to rubber-stamp, then embed high-risk actions in the stream.

The Anthropic/OpenAI joint paper on governing agentic AI systems directly warns that “when the user must approve many decisions and thus must make each approval quickly, reducing their ability to meaningfully consider each one,” the oversight becomes performative. The framework’s answer to automation bias is training and auditing. Training doesn’t change the cognitive architecture that produces complacency under sustained approval loads. You can’t train your way out of a capacity problem.

HITL works for low-volume, high-consequence decisions. It fails everywhere else. For the vast majority of agentic operations, organizations need tiered, consequence-based automation. Auto-approve low-risk reversible actions with logging. Route medium-risk actions through a watchdog agent (what some practitioners call the Janus pattern) for secondary validation. Reserve genuine human review for irreversible, high-blast-radius decisions only.

The framework’s blanket “define significant checkpoints” guidance will produce compliance artifacts, not security outcomes.

Agent identity is broken, not “evolving”

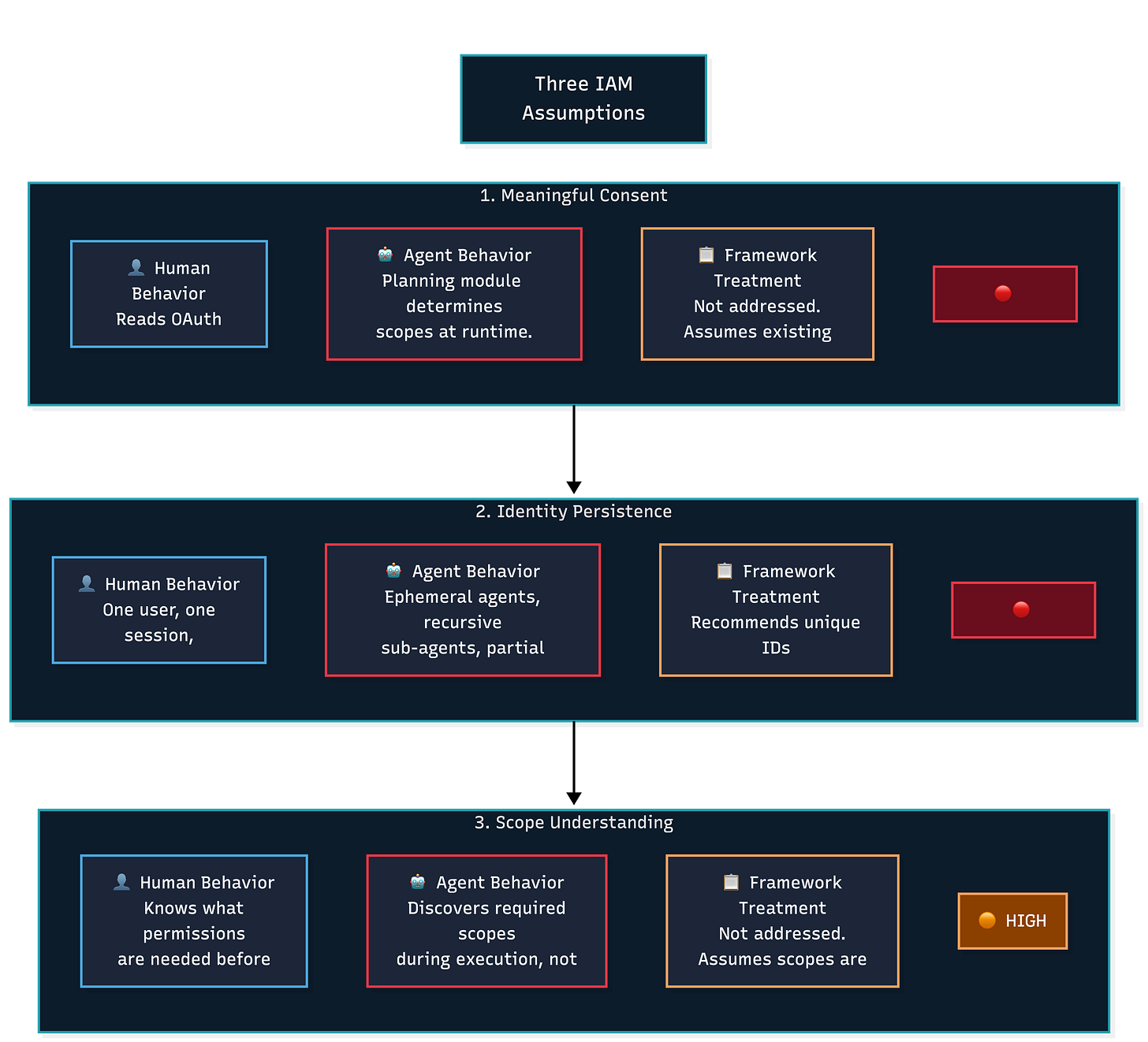

The framework describes agent identity challenges as an “an evolving space” in which “gaps exist today” (§2.1.2, p. 11). This framing suggests that the problem will be resolved through incremental improvements to existing identity and access management systems.

It won’t. The problem is architectural.

Human IAM systems rest on three assumptions that agents violate. First, the entity requesting access can meaningfully consent to terms. An agent can’t meaningfully consent to OAuth scopes because it doesn’t understand what it’s granting. Second, the entity’s identity persists across sessions in a verifiable way. An agent’s identity may be ephemeral, spawned for a single task, then destroyed, or recursive, spawning sub-agents that inherit some permissions but not others. Third, authorization scopes are understood by the entity at request time. For agents, authorization scopes are determined at runtime by the planning module, not at request time by a human clicking “Allow.”

The OWASP Agentic Top 10 (ASI03, December 2025) puts this plainly, “Without a distinct, governed identity of its own, an agent operates in an attribution gap that makes enforcing true least privilege impossible.” If you can’t attribute an action to a specific identity with verifiable scope, every downstream control the framework recommends, least privilege, access logging, accountability chains, rests on an unreliable foundation.

The OAuth consent model breaks down entirely for agentic workloads. Three-legged OAuth was designed for human consent flows where a user clicks “Allow” and understands what they’re granting. When an agent orchestrator requests OAuth scopes on behalf of a user, the consent model collapses. The agent can’t meaningfully consent, and the human operator often doesn’t know what scopes the agent will request at runtime.

The empirical evidence makes this worse. The Agent Skills in the Wild study found excessive permission requests (PE1) across 94 skills that requested permissions far beyond stated functionality. This isn’t a bug in a few implementations. It’s the default behavior in the wild. Agents ask for more access than they need because the systems granting access weren’t designed to question them.

The framework’s interim best practices (unique agent IDs, recording delegation, tying identity to supervising humans) are reasonable temporary measures, but calling this “evolving” rather than “broken” understates the urgency. Organizations should treat agent identity as a pre-deployment blocker for high-autonomy agents, not a checkbox to revisit later. Until agent-native identity primitives exist, decentralized identity, cryptographically bound intent, task-scoped ephemeral credentials, the accountability chain the framework builds on top of identity is structurally unsound.

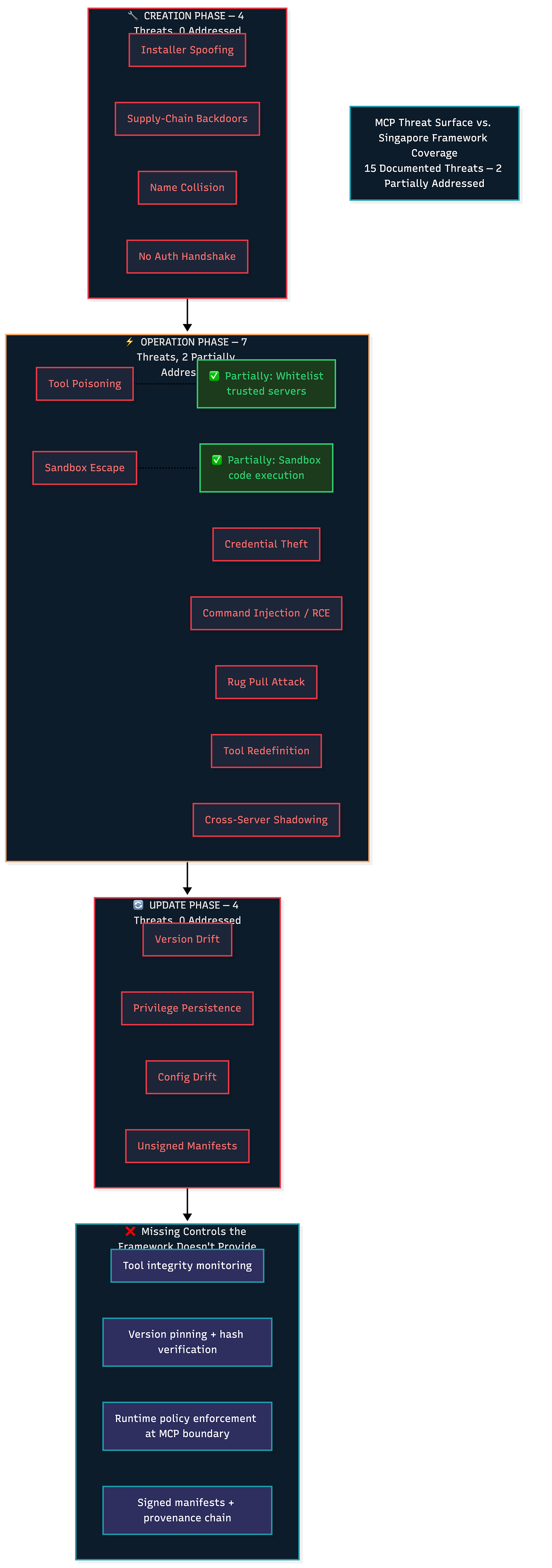

MCP gets two bullet points in a 29-page framework. That’s negligent.

The entire MCP security guidance in Singapore’s framework reads: “For MCP servers: Whitelist trusted servers and only allow agent to interact with servers on that whitelist. Sandbox any code execution” (§2.3.1, p.19). That’s it. Two bullet points for the protocol rapidly becoming the standard interface between AI agents and the external world.

Since Anthropic introduced MCP, adoption has exploded. Over 10,000 MCP servers are deployed. Claude, Cursor, Microsoft Copilot, Gemini, VS Code, and ChatGPT all support the protocol. In December 2025, Anthropic, OpenAI, and Block donated MCP and other projects to the Linux Foundation’s Agentic AI Foundation. MCP is infrastructure now. And the framework treats it like a footnote.

MCP’s threat surface spans three lifecycle phases, and the framework addresses only a fragment of the first one.

During creation, MCP servers face installer spoofing, supply-chain backdoors, name collision attacks, and the absence of authentication handshakes. The framework’s “whitelist trusted servers” advice partially addresses this phase. Partially.

During operations is where things get dangerous. Documented attacks include tool poisoning (malicious commands embedded in tool descriptions that the LLM executes as instructions), credential theft, sandbox escape, command injection, remote code execution, and the rug pull attack. The rug pull is particularly insidious as a legitimate tool passes initial vetting, earns whitelist status, then silently changes behavior during an update. The framework’s whitelisting guidance assumes trust is both verifiable and persistent. The rug pull attack exploits exactly this assumption.

During updates, servers face version drift, privilege persistence, configuration drift, and unsigned manifests. The framework says nothing about any of these.

The experimental evidence should alarm you. Research on LLM-driven AI agent communication reports MCP exploits that achieved Bash shell backdoors on port 4444, SSH key exfiltration via email, and file deletion without triggering alerts. These aren’t theoretical attacks. They were demonstrated in controlled experiments.

The first malicious MCP server discovered in the wild impersonated Postmark’s email service on npm and silently BCC’d every agent-sent email to the attacker. This is supply chain security applied to the AI protocol layer, and the framework doesn’t address it.

Whitelisting without tool integrity monitoring, version pinning, hash verification of tool descriptions, and runtime policy enforcement at the MCP boundary creates a false sense of security. Organizations implementing this framework need to treat MCP servers with the same rigor applied to third-party software dependencies. Pin versions. Verify checksums. Monitor for behavioral changes. Enforce runtime sandboxing that restricts tool access to files, APIs, and network endpoints beyond the declared scope.

The gaps the framework weaves around

Two additional blind spots deserve attention, not as standalone failures, but as force multipliers for the three critical gaps above.

The framework treats instructions, memory, and tools as functional agent components (§1.1.1, pp.3-4) without recognizing that they all converge in a shared, flat trust namespace. The OWASP GenAI Data Security Guide puts it starkly. The “context window aggregates multiple trust domains into a single flat namespace with no internal access control. RAG results, system prompts, user input, tool outputs, and conversation history all land in the same context with equal trust weight.” If you’re securing individual components (tools, memory, instructions) without addressing the architectural vulnerability that connects them, you’re securing individual rooms while leaving the hallways unguarded.

The framework also assumes organizations consciously deploy agentic AI through a controlled pipeline. The clean value chain diagram (model developers, system providers, deploying organization, end users) describes how agents should be deployed. Every major AI platform now offers agent-building capabilities accessible to non-technical users. Microsoft Copilot Studio, Salesforce AgentForce, and dozens of startups let business users create agents that connect to organizational data, send emails, update databases, and make API calls, all without security team involvement. Shadow AI agents inherit every risk the framework describes but operate entirely outside the governance structures it recommends. A CISO should spend equal effort on discovery and containment of unauthorized agents as on governing sanctioned ones.

The honest assessment

Singapore’s framework tells you what to think about. The OWASP Agentic Top 10 outlines what to defend against across 10 threat categories (ASI01-ASI10), with specific vulnerability descriptions and mitigation strategies for each. The MAESTRO threat modeling framework provides 47 threat IDs organized across seven architectural layers with specific mitigations. The OWASP Securing Agentic Applications Guide provides implementation-level controls.

The Singapore framework sits at the “governance structure” tier. That tier is necessary, but it’s not sufficient. If you hand this to your team as “the agentic AI governance playbook,” you’ll produce compliance artifacts without meaningfully reducing risk.

Key Takeaway: Singapore built the governance skeleton for agentic AI. The immune system, the controls that catch what gets past the perimeter, remains your engineering problem.

What to do next

Three things the framework doesn’t tell you that your team needs tomorrow.

First, quantify your HITL capacity. Use this formula: (agent decision rate) times (approval time per decision) times (number of active agents) equals required human reviewer hours. When that number exceeds available capacity, you need tiered automation, not more reviewers. Build HITL saturation metrics and circuit breakers that automatically reduce agent autonomy when human review queues exceed defined thresholds.

Second, harden MCP with zero-trust assumptions. The OWASP GenAI Security Project published two guides that give you an operational playbook the Singapore framework doesn’t: “A Practical Guide for Secure MCP Server Development” (February 2026) for teams building MCP servers, and “A Practical Guide for Securely Using Third-Party MCP Servers” (October 2025) for teams consuming them. Start with their governance workflow: require developers to submit third-party MCP servers with documentation and a hash of tool descriptions, run automated scans for malware and hidden instructions, then version-pin approved servers in a trusted registry before deployment. For rug pull defense, pin the version of each MCP server and its tools at the time of initial approval, use a hash or checksum to verify tool descriptions and functionality haven’t been altered, and maintain version history with alerts on unauthorized changes. Require cryptographic tool manifests, signed packages that include description, schema, version, and required permissions, verified at load time. Run third-party MCP servers inside Docker containers to prevent compromised tools from accessing host files or escaping the operating environment. Enforce least-privilege policies at the MCP boundary to restrict tools from reading local files, accessing sensitive APIs, or exfiltrating data beyond declared scope. For multi-tenant deployments, isolate sessions with per-user execution contexts and deterministic cleanup routines that flush file handles, temp storage, and cached tokens on session termination. These two guides give you the implementation details that §2.3.1’s two bullet points leave out.

Third, hunt for shadow AI agents. Deploy network monitoring for MCP traffic from unapproved endpoints. Configure API gateways to detect agent-pattern behavior: rapid sequential API calls, tool-use headers, automated OAuth token requests. Update acceptable use policies to cover agent creation explicitly. Treat every discovered shadow agent as an incident requiring risk assessment before it continues operating.

The framework is a starting point. It’s not a destination. Use it as a governance structure, then fill the operational gaps with OWASP guidance, real-world threat intelligence, and the uncomfortable math that governance documents avoid.

👉 Subscribe for more AI security and governance insights with the occasional rant.

👉 Visit RockCyber.com to learn more about how we can help you in your traditional Cybersecurity and AI Security and Governance Journey

👉 Want to save a quick $100K? Check out our AI Governance Tools at AIGovernanceToolkit.com