Agentic AI Authorization: From T-Shaped to Z-Shaped Security

Context engineering is authorization engineering. Staff accordingly

The T-shaped professional built the modern internet. Broad skills, deep expertise in one vertical, and the collaborative instinct to ship products at scale. That model worked. It still works for a lot of things. But 88% of organizations reported confirmed or suspected AI agent security incidents in the past year, according to Gravitee’s State of AI Agent Security 2026 report. The T-shape isn’t enough anymore. Securing agentic AI demands a different professional geometry, and most teams haven’t made the upgrade.

The LinkedIn Conversation That Started This

Lock Langdon, VP and CISO at Aprio, recently posted something on LinkedIn that got me thinking. He’d been building a security review tool in Claude Code and had a realization about why broad informational context matters more than clever prompts. He referenced the Valve employee handbook and its description of T-shaped people, then connected it to effective AI use.

His observation was sharp: “AI rewards people who can think across disciplines.” Breadth gives you the ability to steer, validate, and connect dots that the model won’t connect for you.

He’s right. And he’s describing the exact professional shape that’s now getting exposed as insufficient for agentic AI security.

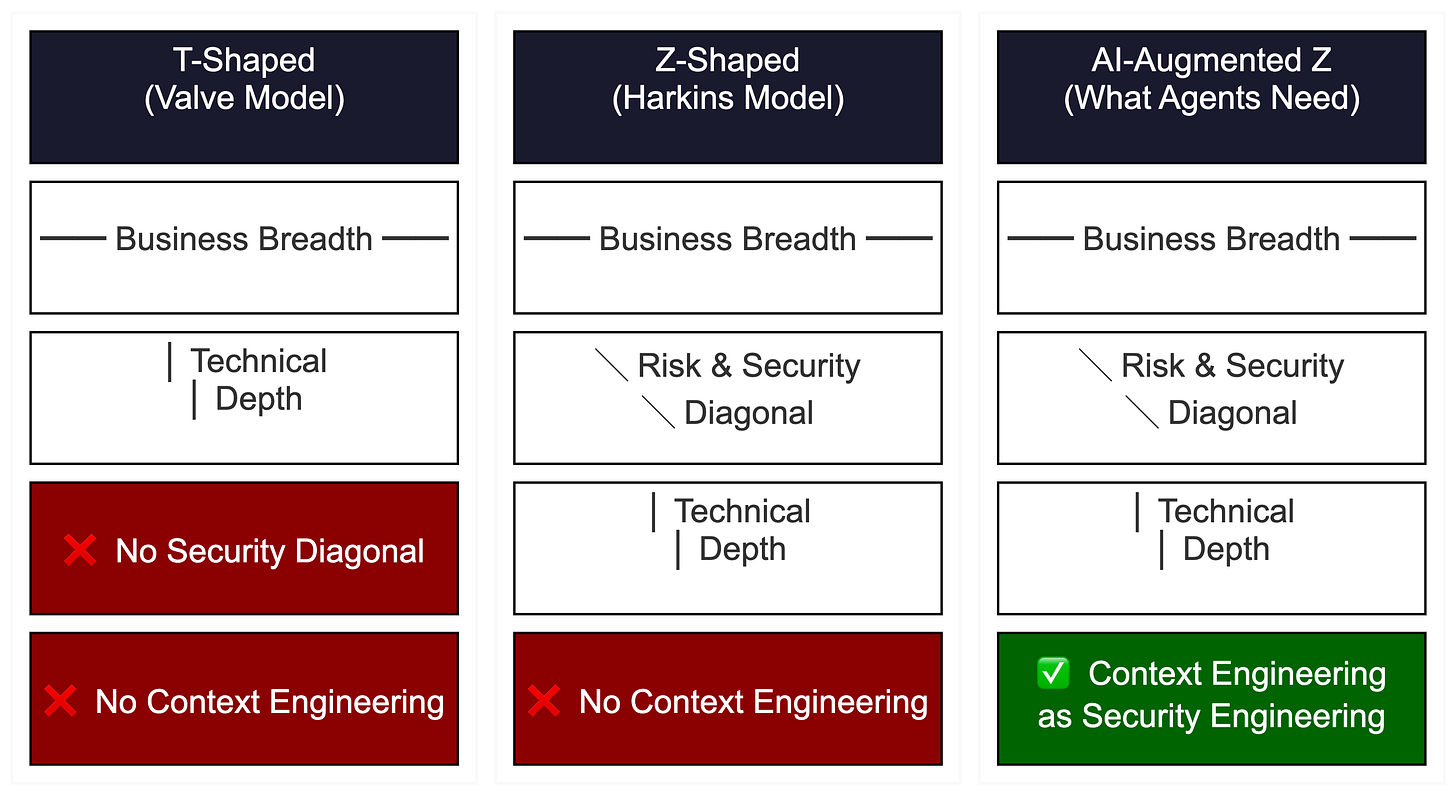

Valve’s handbook clearly spells out the T-shape. They value people who are “both generalists (highly skilled at a broad set of valuable things) and also experts” in one narrow discipline. The horizontal bar gives you range. The vertical stroke gives you depth. For building products in a flat organization, it’s a brilliant hiring filter.

For securing autonomous AI agents? It’s only half the picture.

The Z-Shaped Professional and the Missing Diagonal

Malcolm Harkins, currently Chief Security and Trust Officer at HiddenLayer, pushed the T-shape further in his book Managing Risk and Information Security: Protect to Enable. He introduced the concept of the Z-shaped professional, and it’s the piece most AI teams are missing.

The Z-shape keeps the T’s horizontal bar (business acumen across the organization) and vertical stroke (technical depth). What it adds is a diagonal connecting the two. That diagonal represents deep risk and security knowledge, the translation layer that maps business constraints to technical controls and explains to the board why a security decision is a business decision.

Think about what that diagonal actually means in practice. A T-shaped engineer can build you an AI agent that works. A Z-shaped security professional can tell you whether that agent should be allowed to work with the permissions it’s requesting, within the business context it’s operating in, against the threat model you haven’t written yet.

That diagonal is the part nobody wants to do the hard work of encoding into their AI tooling. I said that in my response to Lock’s post, and I meant it. Everyone’s excited about broad context until I ask who defined the authorization boundaries. The room gets really quiet.

“It Runs” vs. “It’s Safe to Run” Is a Context Engineering Problem

Lock nailed the distinction in his original post. The difference between “it runs” and “it’s right” lies in context: threat models, business constraints, user behavior, and edge cases. I’ll double down on that and add that the difference between “it runs” and “it’s safe to run” is context engineering, and that gap is where organizations are getting wrecked.

Context engineering is the discipline of curating, structuring, and governing the information that feeds an AI system. It’s how you decide what goes into the system prompt, which tools the agent can access, what gets retrieved from your vector database, what persists in memory across sessions, and how context gets compressed when the window fills up.

Every one of those decisions is a security decision. Your system prompt defines the agent’s behavioral boundaries. Your tool access configuration determines its capability envelope. Your RAG pipeline controls what information it treats as authoritative. Your memory architecture determines what persists and what an attacker can poison.

I’ve been writing about this for months. Context engineering isn’t a developer productivity skill. It’s security engineering. The same architectural channels that make context engineering effective carry malicious payloads with equal ease. RAG retrieval pipelines that inject relevant knowledge also inject poisoned documents. Tool interfaces that offer rich functionality also expand the attack surface. Memory systems that maintain useful state also maintain poisoned state.

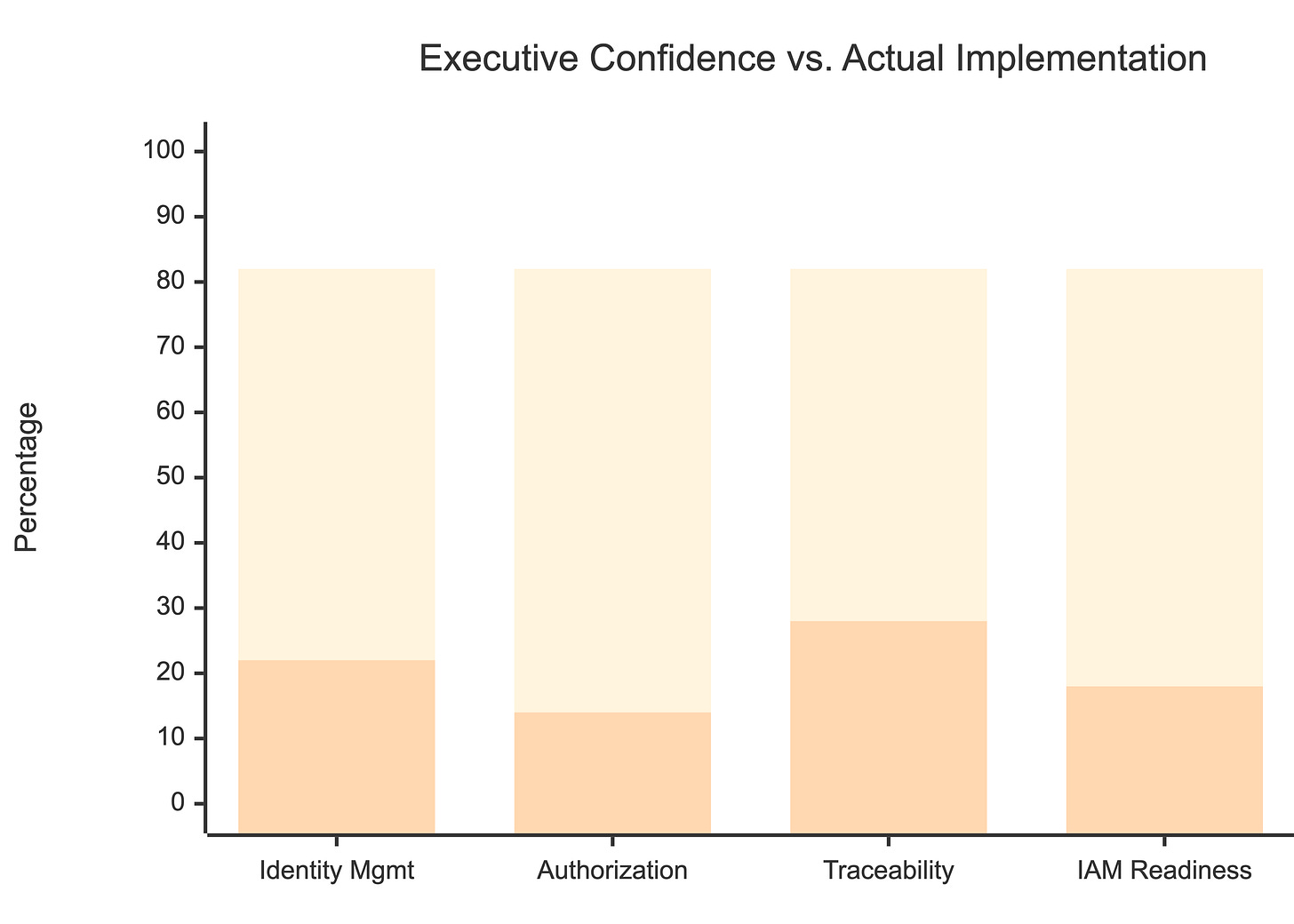

The data confirms this. The Cloud Security Alliance surveyed 285 IT and security professionals and found that only 18% of security leaders feel highly confident their current IAM systems can manage agent identities. Only 28% can trace agent actions back to a human sponsor across all environments. Meanwhile, 45.6% of teams still rely on shared API keys for agent-to-agent authentication.

Training data and clever prompts don’t constitute security boundaries. An AI agent can’t tell the difference between “it runs” and “it’s safe to run” without someone encoding Z-shaped judgment into the controls. That someone needs to understand the business risk (horizontal), the technical implementation (vertical), and the security implications connecting them (diagonal).

The Four Layers Nobody Wants to Do

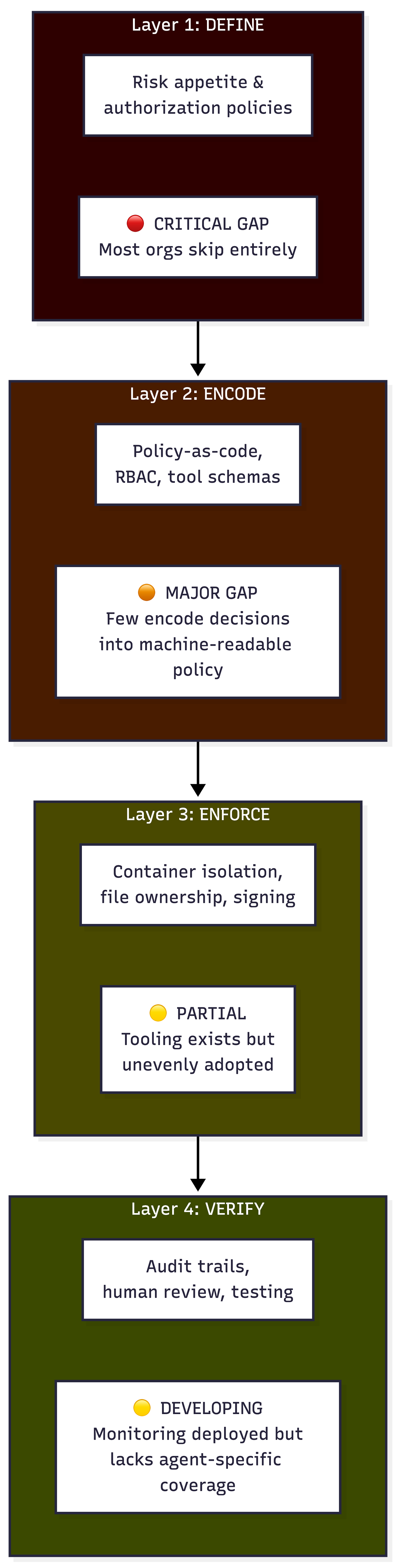

In my LinkedIn conversation with Lock (in the comments of his post), I laid out a framework with four layers that have to happen in order.

Layer 1: Define. Articulate your risk appetite and authorization policies. What is this agent allowed to do? What data can it access? Under what conditions should it stop and ask a human? What’s the blast radius if it goes wrong? These are people decisions. No technology required. Just hard thinking.

Layer 2: Encode. Take those human decisions and turn them into policy-as-code, RBAC rules, tool permission schemas, and system prompt constraints. This is where Z-shaped judgment becomes machine-readable. If your risk appetite says “never modify production databases without human approval,” that needs to exist as an enforceable rule, not a line in a wiki nobody reads.

Layer 3: Enforce. Technical controls that make the encoded policies real. Container isolation so one agent can’t interfere with another’s file system. Exclusive file ownership to prevent concurrent workers from creating race conditions. Signed tool definitions so an attacker can’t poison a tool description. Rate limiting on tool invocations to prevent data exfiltration through repeated calls. Least-privilege scoping so that a database tool is read-only on specific tables, not a full admin connection.

Layer 4: Verify. Human review and audit trails. Log every tool call, every parameter, every result. Run automated testing and security scanning as part of the workflow, not after it. When an agent starts behaving oddly, your logs are the only way to reconstruct what happened. And someone with Z-shaped judgment needs to review those logs, because automated monitors won’t catch a well-crafted authorization boundary violation that technically follows every rule while violating the intent.

Unfortunately, organizations tend to jump straight to Layer 3 and Layer 4. They buy tools. They poorly setup configure monitors and audit trails, and then they wonder why their agents keep doing things they weren’t supposed to do. You can’t enforce what you haven’t defined. You can’t verify compliance with policies that don’t exist.

The Gravitee report found that only 14.4% of organizations have full security approval for their AI agents before they go into production. That means 85.6% skipped the “define” step entirely or gave it a passing nod.

What This Means for Hiring, Training, and Governance

If your security team is staffed entirely with T-shaped professionals, you have people who can build agents and people who can spot vulnerabilities, but you don’t have people who can connect “the business needs this agent to process insurance claims” to “this agent should never access the claims adjudication database directly, only through the approved API, with row-level security scoped to the claimant’s policy.”

That connection is the diagonal, and it’s the part AI tools can’t do for you.

NeuralTrust’s survey of 160+ CISOs found that 73% are critically concerned about AI agent risks, but only 30% have mature safeguards. Their maturity model places 46% of enterprises in the “Reactive” tier. Reactive means you’re fixing things after they break. You skipped the define and encode layers, and now you’re running expensive cleanup operations in verify.

Cisco’s State of AI Security 2026 report puts it bluntly: 83% of organizations planned to deploy agentic AI capabilities, but only 29% felt ready to do it securely. That 54-point gap between ambition and readiness is a define-and-encode gap. The technology exists. The professional judgment to wield it responsibly doesn’t, at least not at the scale organizations need.

For practitioners building with AI agents right now, ask yourself this: If you can’t articulate what your agent is authorized to do before it does it, what exactly are your tools enforcing?

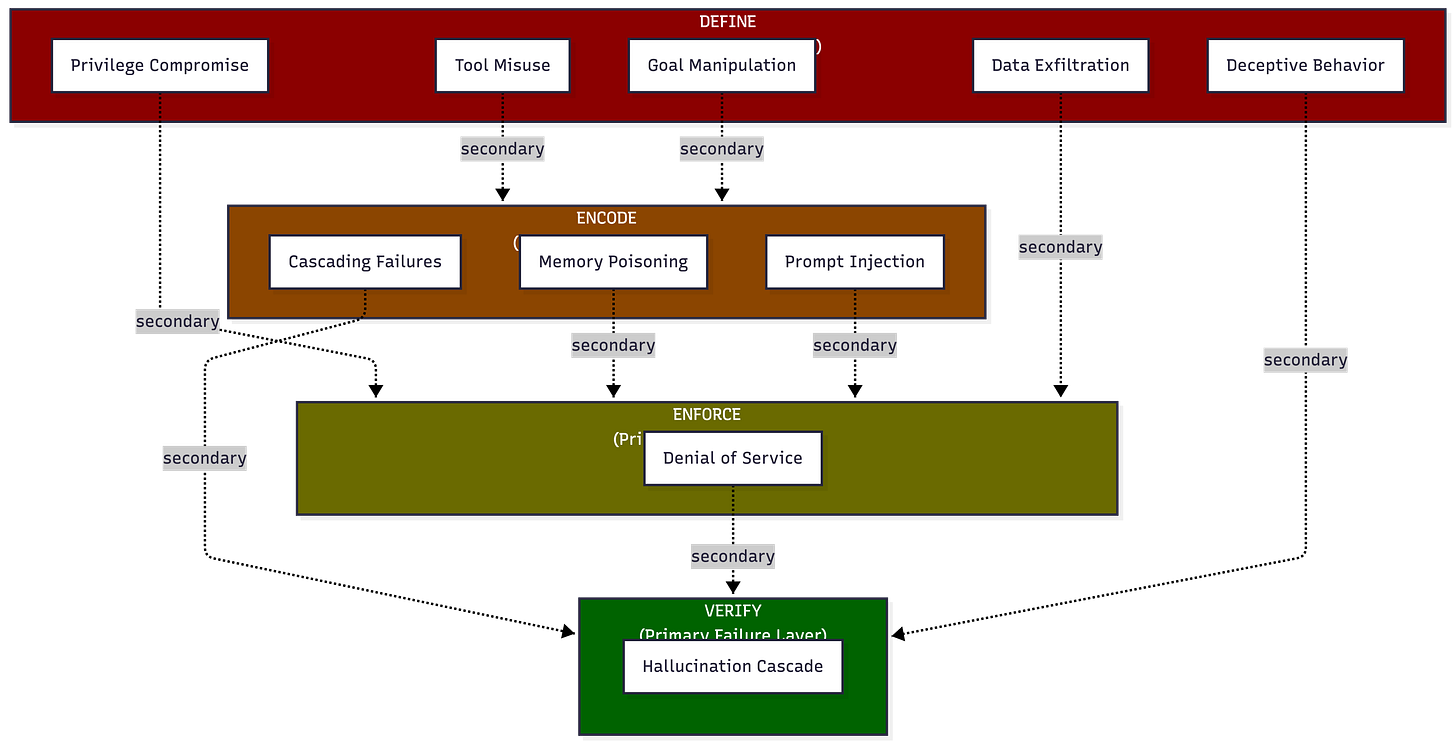

The OWASP Agentic Top 10 Confirms the Framework

The OWASP GenAI Security Project released the Top 10 for Agentic Applications in December 2025 after input from over 100 security researchers. The top concerns for agentic systems, as opposed to standalone LLMs, are memory poisoning, tool misuse, and privilege compromise.

Every one of those maps directly to a failure in the four-layer model. Memory poisoning succeeds when Layer 2 (encode) doesn’t include memory integrity controls. Tool misuse succeeds when Layer 1 (define) never articulated which tools the agent should access and under what conditions. Privilege compromise succeeds when Layer 3 (enforce) grants broad permissions because nobody did the Layer 1 work of determining what least-privilege looks like for this specific agent in this specific workflow.

The OWASP list validates that LLM security focuses on single-model interactions, while agentic security concerns what happens when models can plan, persist, and delegate across tools and systems. The attack surface isn’t the model anymore. It’s the context, and who curated it, and whether they had the Z-shaped judgment to do it securely.

Building Your Z-Shape

The T-shaped professional built the internet. The Z-shaped professional has to secure the agents running on it. That’s not a criticism of the T-shape. It’s a recognition that agentic AI operates at a different level of autonomy and requires a different level of professional judgment to govern.

If you’re a security architect, start encoding your organization’s risk appetite into machine-readable policies. Not guidelines. Policies that tools can enforce.

If you’re a CISO, staff for the diagonal. Find people who can translate business risk into technical controls and articulate why an agent’s tool permissions matter to the quarterly risk review.

If you’re an engineer building with AI agents, stop thinking of context engineering as a performance optimization exercise. Every context decision is an authorization decision. Treat it that way.

Key Takeaway: Your AI agent is exactly as secure as the weakest layer in your define-encode-enforce-verify chain, and most organizations haven’t started Layer 1.

What to do next

The four-layer model maps directly to the CARE framework I use with clients: Create your governance foundations and authorization policies, Adapt them as threats and regulations shift, Run them as enforceable controls in production, and Evolve through continuous learning and post-incident review. If your agentic AI program skipped the Create step, you’re building on sand.

For a deeper look at how security leadership needs to evolve alongside AI, my book The CISO Evolution addresses the structural shift from technical security management to business-aligned risk governance. It was written before this current AI boom, but the principles still apply. The Z-shaped diagonal isn’t new. What’s new is that AI agents now operate at machine speed, which means the consequences of missing that diagonal arrive at machine speed too.

I’ve been tracking the intersection of context engineering and security engineering at RockCyber Musings, including technical deep-dives on tool poisoning, memory integrity, and the authorization gaps in current AI frameworks. If this topic matters to your organization, that’s where the ongoing work lies.

👉 Subscribe for more AI security and governance insights with the occasional rant.

👉 Visit RockCyber.com to learn more about how we can help you in your traditional Cybersecurity and AI Security and Governance Journey

👉 Want to save a quick $100K? Check out our AI Governance Tools at AIGovernanceToolkit.com