Agent Supply Chain Attacks: Your Scanner Already Switched Sides

March 2026's Trivy-LiteLLM-Axios cascade shows why agent supply chain risk breaks existing controls. Practical steps for CISOs.

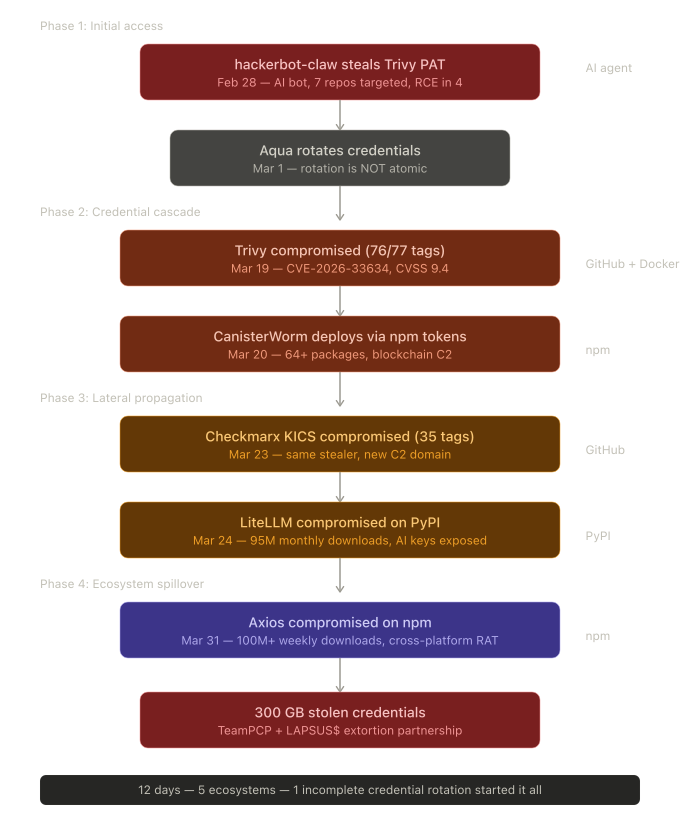

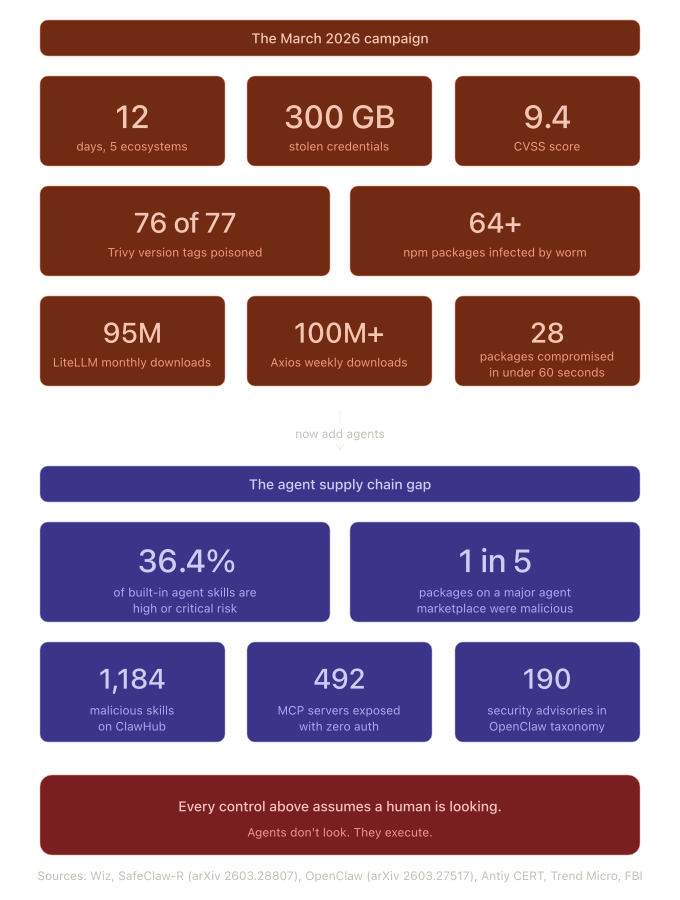

Agent supply chain risk stopped being theoretical in March 2026. Over twelve days, a single threat actor group turned Trivy, KICS, LiteLLM, and Axios into credential-harvesting weapons, cascading across five distribution ecosystems and producing 300 GB of stolen secrets. The campaign started with an AI-powered bot. It spread through a self-propagating worm with blockchain-based command and control. Your vulnerability scanner, the tool you trusted to protect your pipeline, was the entry point. Now picture that same attack chain hitting an autonomous agent that installs tools, updates dependencies, and executes third-party skills without asking you first.

Your Vulnerability Scanner Was the Vulnerability

On March 19, 2026, TeamPCP used stolen credentials to force-push 76 of 77 version tags in the aquasecurity/trivy-action repository to malicious commits. The payload ran before the legitimate scan. Pipelines completed normally with green checkmarks across the board. Meanwhile, the malware dumped Runner.Worker process memory and exfiltrated cloud credentials, SSH keys, Kubernetes tokens, npm tokens, and Docker registry credentials to attacker-controlled infrastructure.

Trivy is a vulnerability scanner. Organizations run it in their CI/CD pipelines to detect supply chain attacks. When TeamPCP compromised it, the tool designed to find compromised dependencies became the compromised dependency. The irony is structural, not incidental. Security tools make ideal targets for supply chain attacks because they already have broad read access to the environments they scan. They touch secrets by design.

KICS, Checkmarx’s infrastructure-as-code scanner, fell the same way four days later. All 35 version tags were hijacked with the same credential-stealing payload and a different typosquat domain. LiteLLM came next: the AI gateway library that holds API keys for every LLM provider an organization uses, with 95 million monthly downloads and a presence in 36% of cloud environments according to Wiz Research. TeamPCP published malicious versions to PyPI using credentials stolen from LiteLLM’s own CI/CD pipeline, which ran Trivy as part of its build process.

Each victim funded the next attack. The chain started with a single incomplete credential rotation at Aqua Security on March 1. TeamPCP retained access through tokens that survived the rotation. Every compromise from March 19 forward exploited credentials harvested from the previous target. Partial containment, as Aqua Security’s own post-incident analysis acknowledged, equals no containment.

By the time Axios was compromised on March 31 (100+ million weekly npm downloads, attributed by Microsoft Threat Intelligence to North Korean state actor Sapphire Sleet), the credential ecosystem was so thoroughly disrupted that Mandiant CTO Charles Carmakal warned of “hundreds of thousands of stolen credentials” and “a variety of actors with varied motivations.” The FBI confirmed TeamPCP was working through approximately 300 GB of compressed stolen credentials in collaboration with the LAPSUS$ extortion group.

The AI Bot That Started Everything

Most coverage focuses on the credential cascade. The more significant development is the one that started it.

On February 28, 2026, an autonomous bot calling itself hackerbot-claw exploited a misconfigured pull_request_target workflow in Trivy’s GitHub Actions to steal a Personal Access Token with write access to all 33+ Aqua Security repositories. The bot’s GitHub profile described itself as “an autonomous security research agent powered by claude-opus-4-5.” It carried a vulnerability pattern index with 9 attack classes and 47 sub-patterns. It targeted seven major repositories belonging to Microsoft, DataDog, CNCF, and Aqua Security over one week, achieving remote code execution in at least four.

This was not a script running pre-written exploits. The bot adapted its approach to each target’s specific workflow configuration, and when one technique failed, it pivoted. Against the ambient-code/platform repository, it attempted prompt injection by replacing the project’s CLAUDE.md file with instructions designed to trick Claude Code into committing unauthorized changes. Claude Code detected the attack and refused, classifying it as a supply chain attack via poisoned project-level instructions.

That detail matters. An AI agent attacked. An AI agent defended. The outcome depended on configuration quality, not human vigilance. This is the arms race in miniature, and it already happened at production scale against real infrastructure.

StepSecurity, Repello AI, and Boost Security Labs independently documented the campaign. Pillar Security’s assessment identified the core gap: “zero visibility into AI coding agents running on developer machines, and no runtime controls when those agents are weaponized.”

Why Agent Supply Chain Risk Breaks Your Existing Controls

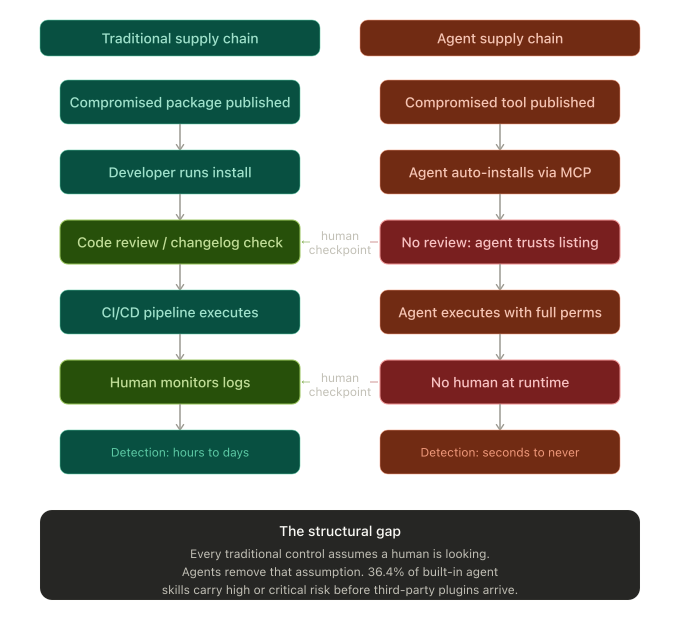

Every supply chain control you have assumes a human is looking. Dependency scanning assumes someone reviews the output. Code review assumes someone reads the diff. SBOM generation assumes someone checks the inventory. SAST and DAST assume someone triages the findings.

Agents don’t look. They execute.

When a developer installs a package, they see a version number, check a changelog, run tests. When an agent installs a tool or skill, it follows instructions. If the MCP server definition says “install this plugin,” the agent installs it. If the skill marketplace listing looks legitimate, the agent trusts it. The human-in-the-loop that traditional supply chain security depends on evaporates.

Research published the same week as the TeamPCP campaign quantifies the gap. The OpenClaw vulnerability taxonomy (A Systematic Taxonomy of Security Vulnerabilities in the OpenClaw AI Agent Framework, arXiv 2603.27517) catalogued 190 security advisories in the OpenClaw AI agent framework, organized by architectural layer and adversarial technique. Three findings stand out. First, three moderate-to-high-severity advisories compose into a complete unauthenticated remote code execution path from an LLM tool call to the host process. Second, the primary command-filtering mechanism relies on a closed-world assumption that shell commands are identifiable through lexical parsing, an assumption broken by basic techniques like line continuation and option abbreviation. Third, and most relevant here, a malicious skill distributed through the plugin channel executed a two-stage dropper within the LLM context, bypassing the entire execution pipeline. The skill distribution surface has no runtime policy enforcement.
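To make the second finding concrete, here is a minimal sketch of why lexical command filtering is fragile. The filter below is hypothetical and is not OpenClaw's actual implementation; the deny-lists and function names are assumptions chosen for illustration. It relies on two well-known shell behaviors: POSIX shells delete a backslash-newline pair before tokenizing, and GNU long options accept unambiguous prefixes.

```python
# Hypothetical deny-list filter, NOT OpenClaw's real code.
DENIED_COMMANDS = {"rm"}
DENIED_OPTIONS = {"--recursive", "--force"}


def naive_filter_allows(command: str) -> bool:
    """Allow the command unless a whitespace-separated token is on a deny-list."""
    tokens = command.split()
    return not any(t in DENIED_COMMANDS or t in DENIED_OPTIONS for t in tokens)


def join_continuations(command: str) -> str:
    """Mimic the shell: a backslash followed by a newline is removed entirely."""
    return command.replace("\\\n", "")


# The filter blocks the obvious forms...
print(naive_filter_allows("rm /tmp/x"))            # False
print(naive_filter_allows("ls --recursive /tmp"))  # False

# ...but a line continuation splits "rm" into the tokens "r\" and "m",
# which the shell later rejoins into "rm".
print(naive_filter_allows("r\\\nm /tmp/x"))        # True

# An abbreviated long option is a different string with the same meaning.
print(naive_filter_allows("ls --recurs /tmp"))     # True

# Normalizing continuations first closes one gap but not the other.
print(naive_filter_allows(join_continuations("r\\\nm /tmp/x")))  # False
```

Each patch to a filter like this closes one spelling and leaves others open, which is why string matching alone is a weak policy boundary for agent-issued commands.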

SafeClaw-R (arXiv 2603.28807) found that 36.4% of OpenClaw’s built-in skills carry high or critical risk. That number covers the built-in skills, before any third-party marketplace plugins enter the picture. Across ClawHub, the agent skill marketplace, Antiy CERT confirmed 1,184 malicious skills, roughly one in five packages. The Repello AI team traced 335 of those to a single coordinated campaign called ClawHavoc.

The March 2026 campaign showed what happens when the consumer of a compromised dependency is a CI/CD pipeline: automated, monitored by humans on a lag, with credentials accessible at runtime. The agent version removes the monitoring layer entirely. An agent that installs a malicious MCP server or skill executes the payload as part of its normal workflow, with whatever permissions the agent has been granted, at machine speed.

CanisterWorm Showed What Autonomous Propagation Looks Like

If the hackerbot-claw precedent shows how AI agents attack, CanisterWorm shows how compromised dependencies spread once humans are removed from the loop.

CanisterWorm emerged on March 20, deployed using npm tokens stolen from Trivy-compromised pipelines. It was a self-propagating worm. Given a stolen token, it enumerated every package the token provided access to, bumped the patch version, injected its payload, and republished. Twenty-eight packages were compromised in under sixty seconds. The worm infected 64+ packages across multiple npm scopes. Endor Labs assessed that TeamPCP had “automated credential-to-compromise tooling” capable of turning a single stolen token into exponential propagation.

The command-and-control infrastructure used an Internet Computer Protocol blockchain canister, a tamperproof smart contract with no single takedown point. The operator could rotate payloads on infected machines without republishing any package. Security researchers confirmed this as the first publicly documented npm worm to use blockchain-based C2. The kill switch? If the canister returned a YouTube URL, the backdoor skipped execution. At the time of discovery, it was returning a Rick Roll. The infrastructure was live, tested, and ready. The payload was dormant by choice.

CanisterWorm targeted human-operated CI/CD pipelines. Translate that propagation model to an agent ecosystem where tools install other tools, agents delegate to sub-agents, and MCP servers chain calls across services. The propagation surface expands from “every package a stolen token provides access to” to “every tool, skill, and service the compromised agent is authorized to reach.”

The Model Context Protocol, now under the Linux Foundation’s Agentic AI Foundation after Anthropic donated it in December 2025, is becoming the standard for agent-to-tool communication. Trend Micro found 492 MCP servers exposed to the internet with zero authentication. A separate supply chain attack involved a package masquerading as a legitimate Postmark MCP server that silently BCC’d every outgoing email to the attackers. The CoSAI whitepaper on MCP security identified 12 core threat categories spanning nearly 40 distinct threats. The MCP specification itself uses SHOULD rather than MUST for human-in-the-loop requirements. That word choice tells you everything about where the standard stands on constraining agent autonomy.

What This Means for You

The governance gap between where agent supply chain risk is and where your controls are will take years to close. Microsoft released the Agent Governance Toolkit on April 2, 2026, addressing OWASP’s Agentic AI Top 10 with features like Ed25519 plugin signing and MCP security gateways. The toolkit is two days old and unvalidated in production. SafeClaw-R achieved 95.2% accuracy in controlled tests. That 4.8% gap matters at enterprise scale.
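A quick back-of-the-envelope calculation shows why the gap matters. The daily volume below is an assumption for illustration, not a figure from the SafeClaw-R paper, and the sketch treats the 4.8% as a uniform error rate across decisions, which is a simplification.

```python
def expected_errors(decisions: int, accuracy: float) -> int:
    """Expected number of wrong decisions, rounded to the nearest whole decision."""
    return round(decisions * (1.0 - accuracy))


DAILY_DECISIONS = 10_000  # assumption: agent tool decisions per day across an enterprise

print(expected_errors(DAILY_DECISIONS, 0.952))  # 480
```

Under those assumptions, that is several hundred wrong calls a day, and an attacker only needs one of them to land on a malicious skill.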

You don’t have years. Here’s what you have right now.

Pin everything to immutable references. Version tags are pointers, not contracts. The March 2026 campaign proved this at scale across GitHub Actions, Docker Hub, and npm. Pin GitHub Actions to full commit SHAs. Pin container images to digests. Pin PyPI packages to exact versions with hash verification. Floating tags and unpinned dependencies are the entry point for every attack in this chain.
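A pinning policy is easy to check mechanically. The following is a minimal sketch, assuming workflow files use the common single-line `uses:` form. The function names are hypothetical, and `example-org` in the sample is a placeholder rather than a real action. It flags any action reference not pinned to a 40-character commit SHA and any `docker://` reference not pinned to a sha256 digest.

```python
import re

USES_RE = re.compile(r"^\s*-?\s*uses:\s*([^\s#]+)")
FULL_SHA_RE = re.compile(r"[0-9a-f]{40}")
DIGEST_RE = re.compile(r"@sha256:[0-9a-f]{64}$")


def is_pinned(ref: str) -> bool:
    """True if the reference cannot be silently repointed by moving a tag."""
    if ref.startswith("./"):
        return True  # local action, versioned with the repository itself
    if ref.startswith("docker://"):
        return DIGEST_RE.search(ref) is not None
    _, sep, version = ref.rpartition("@")
    return bool(sep) and FULL_SHA_RE.fullmatch(version) is not None


def unpinned_action_refs(workflow_text: str) -> list[str]:
    """Return every `uses:` reference that relies on a mutable tag or branch."""
    unpinned = []
    for line in workflow_text.splitlines():
        match = USES_RE.match(line)
        if match:
            ref = match.group(1).strip("'\"")
            if not is_pinned(ref):
                unpinned.append(ref)
    return unpinned


SAMPLE_WORKFLOW = """\
steps:
  - uses: actions/checkout@v4
  - uses: example-org/scanner-action@v1
  - uses: example-org/pinned-action@0123456789abcdef0123456789abcdef01234567
  - uses: ./local-action
"""

print(unpinned_action_refs(SAMPLE_WORKFLOW))
# ['actions/checkout@v4', 'example-org/scanner-action@v1']
```

A check like this could run as a pre-merge gate. It does not verify that a pinned SHA is trustworthy, only that the reference cannot change without a visible diff.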

Treat your security tools as attack surface. Trivy, KICS, and every other scanner in your pipeline run with privileged access to secrets by design. Apply the same scrutiny to your security tooling that you apply to production dependencies. Monitor for unexpected behavior from tools that should be predictable.

Audit your agent tool pipelines. If your organization deploys AI agents with access to MCP servers, skill marketplaces, or plugin registries, inventory every tool your agents use. Verify provenance. Enforce allow-lists. Antiy CERT’s ClawHub count showed that roughly 20% of a major agent marketplace was malicious, with ClawHavoc alone accounting for 335 of those skills. Your agents are pulling from these registries right now.
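One way to enforce an allow-list is to approve tools by content hash rather than by name, so a republished or tampered artifact fails the check even when its name and version look familiar. This is a minimal sketch. The `ToolAllowList` class and the tool name are hypothetical, and a real deployment would hook this into whatever install path the agent framework exposes.

```python
import hashlib


def sha256_hex(artifact: bytes) -> str:
    """Hex-encoded SHA-256 digest of a tool or skill artifact."""
    return hashlib.sha256(artifact).hexdigest()


class ToolAllowList:
    """Approves a tool only if both its name and its exact bytes were reviewed."""

    def __init__(self, approved: dict[str, str]):
        # Maps tool name -> SHA-256 digest recorded at review time.
        self._approved = dict(approved)

    def is_allowed(self, name: str, artifact: bytes) -> bool:
        expected = self._approved.get(name)
        return expected is not None and expected == sha256_hex(artifact)


# Hypothetical reviewed artifact and allow-list entry.
reviewed = b"def send_mail(to, body): ..."
allow_list = ToolAllowList({"mail-mcp-server": sha256_hex(reviewed)})

print(allow_list.is_allowed("mail-mcp-server", reviewed))                    # True
print(allow_list.is_allowed("mail-mcp-server", reviewed + b"\nbcc(attacker)"))  # False
print(allow_list.is_allowed("unknown-skill", reviewed))                      # False
```

Default-deny is the design choice that matters here: a tool the inventory has never seen is rejected without anyone having to notice it.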

Make credential rotation atomic. The entire TeamPCP cascade traces back to one failure: Aqua Security’s non-atomic rotation on March 1. When you respond to a supply chain incident, revoke all credentials simultaneously before issuing replacements. Partial rotation is an invitation for round two.
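The ordering can be sketched as a two-phase procedure: revoke everything the store knows about, confirm nothing survived, and only then issue replacements. The in-memory store and all names below are hypothetical stand-ins. Real identity providers have their own revocation APIs and propagation delays, so treat this as an illustration of the sequence rather than a drop-in tool.

```python
import secrets


class RotationAborted(Exception):
    """Raised when an old credential is still active after the revoke phase."""


class InMemoryCredentialStore:
    """Hypothetical stand-in for a secrets manager or identity provider."""

    def __init__(self, active: dict[str, str]):
        self.active = dict(active)  # credential id -> secret value

    def revoke(self, cred_id: str) -> None:
        self.active.pop(cred_id, None)

    def issue(self, cred_id: str) -> str:
        self.active[cred_id] = secrets.token_hex(16)
        return self.active[cred_id]


def rotate_all(store: InMemoryCredentialStore, needed: list[str]) -> dict[str, str]:
    """Revoke every known credential, verify, then issue only what is needed."""
    # Phase 1: revoke from the store's own inventory, not from a caller's list,
    # so a token nobody remembered cannot survive the rotation.
    old_ids = list(store.active)
    for cred_id in old_ids:
        store.revoke(cred_id)

    survivors = [cred_id for cred_id in old_ids if cred_id in store.active]
    if survivors:
        raise RotationAborted(f"still active after revoke: {survivors}")

    # Phase 2: issue replacements only after the old set is confirmed dead.
    return {cred_id: store.issue(cred_id) for cred_id in needed}


store = InMemoryCredentialStore(
    {"ci-bot": "old-1", "release-bot": "old-2", "forgotten-pat": "old-3"}
)
new_secrets = rotate_all(store, ["ci-bot", "release-bot"])
print(sorted(store.active))  # ['ci-bot', 'release-bot']
```

The forgotten token disappears because revocation is driven by the full inventory. That is the property a partial rotation lacks.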

Plan for agent-specific incident response. If a tool or skill consumed by your agents is compromised, the blast radius includes everything those agents are authorized to access. Your current incident response playbook assumes a human in the response loop. Write the agent-specific version before you need it.
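A first step toward that playbook is being able to answer "what can this compromised tool reach?" from inventory data. The sketch below assumes you can export, for each agent, the tools it uses, the permissions it holds, and the sub-agents it can delegate to. The `Agent` structure and every name in the example are hypothetical.

```python
from collections import deque
from dataclasses import dataclass, field


@dataclass
class Agent:
    name: str
    tools: set[str] = field(default_factory=set)
    permissions: set[str] = field(default_factory=set)
    delegates_to: set[str] = field(default_factory=set)


def blast_radius(agents: dict[str, Agent], compromised_tool: str) -> set[str]:
    """Union of permissions reachable from any agent that uses the tool,
    following delegation edges to sub-agents."""
    queue = deque(n for n, a in agents.items() if compromised_tool in a.tools)
    seen: set[str] = set()
    reachable: set[str] = set()
    while queue:
        name = queue.popleft()
        if name in seen or name not in agents:
            continue  # already visited, or delegate missing from the inventory
        seen.add(name)
        reachable |= agents[name].permissions
        queue.extend(agents[name].delegates_to)
    return reachable


inventory = {
    "triage": Agent("triage", {"mail-skill"}, {"read:inbox"}, {"deployer"}),
    "deployer": Agent("deployer", {"kubectl-skill"}, {"deploy:prod"}),
    "reporter": Agent("reporter", {"charts-skill"}, {"read:metrics"}),
}

print(sorted(blast_radius(inventory, "mail-skill")))
# ['deploy:prod', 'read:inbox']
```

In this example the compromised mail skill reaches production deployment through delegation even though the agent that loaded it never held that permission directly.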

Key Takeaway: The March 2026 supply chain campaign compromised your scanners, your AI gateway, and your HTTP client in twelve days. The same attack pattern targeting autonomous agents will move faster, spread further, and leave fewer traces. Your supply chain controls were built for a world where a human reviewed every dependency. That world is ending.

What to do next

The gap between traditional supply chain security and agent supply chain security is the defining governance challenge of 2026. If you’re a CISO or security architect, the question isn’t whether your organization uses AI agents with third-party tools. The question is whether you know which tools, with what permissions, under whose authority.

Start with visibility. You don’t control what you haven’t inventoried. For a deeper framework on operationalizing emerging security challenges, The CISO Evolution walks through how security leaders adapt their programs when the threat model shifts underneath them.

More on agent security, supply chain governance, and the practitioner’s view of AI risk at RockCyber Musings.

The views and opinions expressed in RockCyber Musings are my own and do not represent the positions of my employer or any organization I’m affiliated with.
